The thermodynamics of computing

Information processing requires a lot of energy. Energy-saving computer systems could make computing more efficient, but the efficiency of these systems can't be increased indefinitely, as ETH physicists show.

As steam engines became increasingly widespread in the 19th century, the question soon arose as to how to optimise them. Thermodynamics, the physical theory that resulted from the study of these machines, proved to be an extremely fruitful approach; it is still a central concept in the optimisation of energy use in heat engines.

Heat is a critical factor

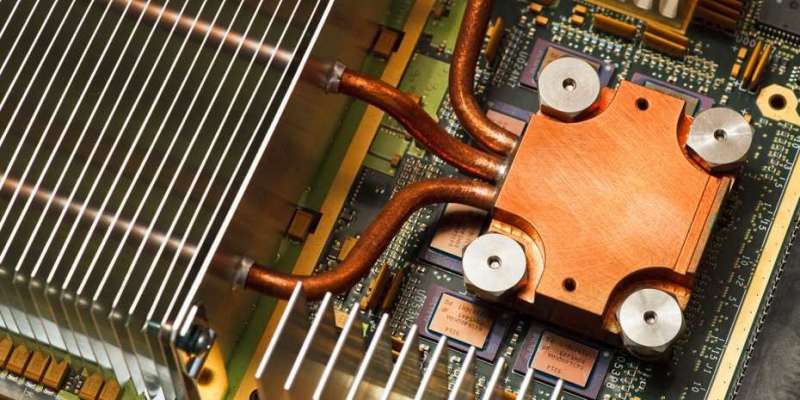

Even in today's information age, physicists and engineers hope to make use of this theory. It is becoming ever clearer that a computer's performance is limited not by the clock rate or the number of chips used, but by its energy turnover. "The performance of a computing centre depends primarily on how much heat can be dissipated," says Renato Renner, Professor of Theoretical Physics and head of the research group for Quantum Information Theory.

Renner's statement can be illustrated by the Bitcoin boom: it is not computing capacity itself, but the exorbitant energy use – which produces a huge amount of heat – and the associated costs that have become the deciding factors for the future of the cryptocurrency. Computers' energy consumption has also become a significant cost driver in other areas.

For information processing, the question of how to complete computing operations as efficiently as possible in thermodynamic terms is becoming increasingly urgent – or to put it another way: how can we conduct the greatest number of computing operations with the least amount of energy? As with steam engines, fridges and gas turbines, a fundamental question arises here: can the efficiency be increased indefinitely, or is there a physical limit that fundamentally cannot be exceeded?

Combining two theories

For ETH professor Renner, the answer is clear: there is such a limit. Together with his doctoral student Philippe Faist, who is now a postdoc at Caltech, he showed in a study published in Physical Review X that the efficiency of information processing cannot be increased indefinitely. This holds not only in computing centres used to calculate weather forecasts or process payments, but also in biology, for example when converting images in the brain or reproducing genetic information in cells. The two physicists also identified the deciding factors that determine the limit.
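The article does not give a formula for the limit. As a textbook point of reference only, Landauer's principle puts the minimum heat dissipated when erasing one bit at temperature T at k_B · T · ln 2. The sketch below evaluates that bound in Python. The function name `landauer_limit_joules` and the 300 K operating temperature are illustrative assumptions, and the bound shown is the classic erasure case, not the result of Faist and Renner's study itself.

```python
import math

# Boltzmann constant in joules per kelvin (exact value in the 2019 SI).
BOLTZMANN_J_PER_K = 1.380649e-23


def landauer_limit_joules(temperature_kelvin: float, bits_erased: float = 1.0) -> float:
    """Lower bound on the heat dissipated when erasing `bits_erased` bits.

    Hypothetical helper for illustration: it applies Landauer's principle,
    E >= bits * k_B * T * ln(2), and nothing more.
    """
    if temperature_kelvin < 0:
        raise ValueError("temperature must be non-negative")
    return bits_erased * BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


if __name__ == "__main__":
    # 300 K is an assumed room-temperature operating point.
    per_bit = landauer_limit_joules(300.0)
    print(f"Landauer bound at 300 K: {per_bit:.3e} J per bit")
    # One gigabyte is 8e9 bits.
    print(f"Erasing 1 GB at 300 K:   {landauer_limit_joules(300.0, 8e9):.3e} J")
```

At 300 K the bound comes to roughly 3 × 10⁻²¹ joules per bit. Because it scales linearly with temperature, a colder system has a lower floor.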

"Our work combines two theories that, at first glance, have nothing to do with one another: thermodynamics, which describes the conversion of heat in mechanical processes, and information theory, which is concerned with the principles of information processing," explains Renner.

The connection between the two theories is hinted at by a formal curiosity: information theory uses a mathematical term that formally resembles the definition of entropy in thermodynamics. This is why the term entropy is also used in information theory. Renner and Faist have now shown that this formal similarity goes deeper than would be assumed at first glance.
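The formal resemblance can be made explicit with the standard textbook definitions, which the article itself does not spell out. For a system found in state i with probability p_i, the Gibbs entropy of thermodynamics and the Shannon entropy of information theory are written as follows. The symbols S, H, p_i and k_B are the usual conventions, assumed here for illustration.

```latex
% Thermodynamic (Gibbs) entropy, in joules per kelvin
S = -k_B \sum_i p_i \ln p_i

% Information-theoretic (Shannon) entropy, in bits
H = -\sum_i p_i \log_2 p_i

% For the same probability distribution the two differ only by a constant factor
S = (k_B \ln 2)\, H
```

The two expressions share the same sum over probabilities and differ only in the unit-setting prefactor and the base of the logarithm.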

No fixed limits

Notably, the efficiency limit for the processing of information is not fixed, but can be influenced: the better you understand a system, the more precisely you can tailor the software to the chip design, and the more efficiently the information will be processed. That is exactly what is done today in high-performance computing. "In future, programmers will also have to take the thermodynamics of computing into account," says Renner. "The decisive factor is not minimising the number of computing operations, but implementing algorithms that use as little energy as possible."

Developers could also use biological systems as a benchmark here: "Various studies have shown that our muscles function very efficiently in thermodynamic terms," explains Renner. "It would now be interesting to know how well our brain performs in processing signals."

As close to the optimum as possible

It is no coincidence that Renner, a quantum physicist, is focusing on this question: with quantum thermodynamics, a new research field has emerged in recent years that has particular relevance for the construction of quantum computers. "It is known that qubits, which will be used by future quantum computers to perform calculations, must work close to the thermodynamic optimum to delay decoherence," says Renner. "This phenomenon is a huge problem when constructing quantum computers, because it prevents quantum mechanical superposition states from being maintained long enough to be used for computing operations."

More information: Philippe Faist et al. Fundamental Work Cost of Quantum Processes, Physical Review X (2018). DOI: 10.1103/PhysRevX.8.021011

Journal information: Physical Review X

Provided by ETH Zurich