How to solve virtual reality's human perception problem

Virtual reality isn't confined to the entertainment world. VR has also been taken up in more practical fields: it has been used to assemble parts of a car engine and to let people "try on" the latest fashion trends from the comfort of their homes. But the technology is still struggling with a human perception problem.

It's clear that VR has some pretty cool applications. At the University of Bath we've applied VR to exercise; imagine going to the gym to take part in the Tour de France and race against the world's top cyclists.

But the technology doesn't always gel with human perception – the term used to describe how we take information from the world and build understanding from it. Our perception of reality is what we base our decisions on and mostly determines our sense of presence in an environment. Clearly, the design of an interactive system goes beyond the hardware and software; people must be factored in, too.

Designing VR systems that truly transport people to new worlds with an acceptable sense of presence is challenging. As VR experiences grow more complex, it becomes harder to quantify how much each element of the experience contributes to someone's perception inside a VR headset.

When watching a 360-degree film in VR, for example, how would we determine whether the computer-generated imagery (CGI) contributes more or less to the movie's enjoyment than the 360-degree audio technology deployed in the experience? We need a method for studying VR in a reductionist manner: strip away the clutter, then add each element back piece by piece and observe its effect on a person's sense of presence.

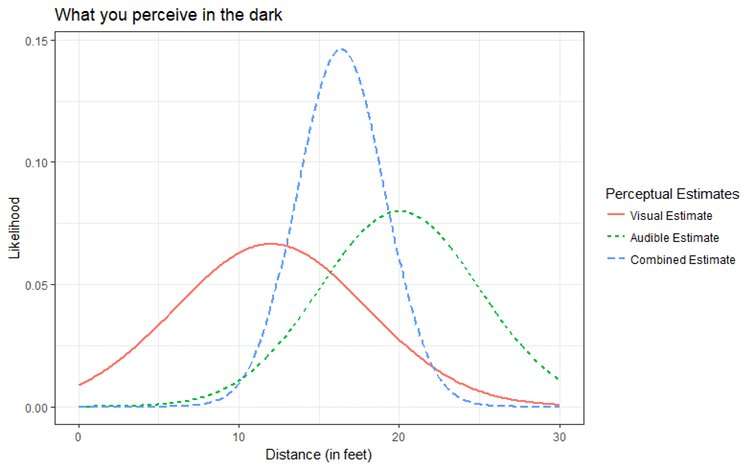

One theory blends computer science and psychology. Maximum likelihood estimation explains how we integrate the information we receive across all our senses to inform our understanding of the environment. In its simplest form, it states that we combine sensory information in an optimal fashion: each sense contributes its own noisy estimate of the environment, and each estimate is weighted by how reliable it is, so the less noisy senses count for more.
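As a rough illustration, the standard textbook form of this model assumes that each sense's estimate carries independent, Gaussian noise (an assumption not spelled out in this article). Under that assumption, the combined estimate is a weighted average in which each sense's weight is the inverse of its noise variance:

```latex
\hat{S} = \sum_i w_i \hat{S}_i, \qquad
w_i = \frac{1/\sigma_i^2}{\sum_j 1/\sigma_j^2}, \qquad
\sigma^2 = \frac{1}{\sum_j 1/\sigma_j^2} \le \min_i \sigma_i^2
```

Here S_i is the estimate from sense i, σ_i² is its noise variance, and these symbols are introduced only for illustration. Because the combined variance σ² can never exceed that of the most reliable single sense, integrating all the noisy cues gives a better estimate than relying on any one of them.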

Noisy signals

Imagine a person with good hearing walking at night down a quiet country lane. They spot a murky shadow in the distance and hear the distinct sound of footsteps approaching them. But they can't be sure what they are seeing, because of "noise" in the visual signal (it's dark). Instead, they rely more heavily on hearing, because in such quiet surroundings sound is the more reliable signal.

This scenario is depicted in the image below, which shows how the estimates from human eyes and ears combine to give an optimal estimate somewhere in the middle.
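A minimal Python sketch of the night-time lane scenario, assuming Gaussian, independent noise and treating each sense as reporting a distance to the approaching walker. The function name `combine_cues` and all the numbers are hypothetical, chosen only to show how a noisy visual estimate and a clearer auditory one are weighted and combined.

```python
import math


def combine_cues(estimates, sigmas):
    """Maximum likelihood combination of independent Gaussian cues.

    Each cue is weighted by its reliability (1 / variance). Returns the
    combined estimate, its standard deviation and the per-cue weights.
    """
    if not estimates or len(estimates) != len(sigmas):
        raise ValueError("need exactly one sigma per estimate")
    reliabilities = [1.0 / s ** 2 for s in sigmas]
    total = sum(reliabilities)
    weights = [r / total for r in reliabilities]
    combined = sum(w * x for w, x in zip(weights, estimates))
    combined_sigma = math.sqrt(1.0 / total)
    return combined, combined_sigma, weights


# Hypothetical numbers: distance to the walker, in metres.
vision_estimate, vision_sigma = 30.0, 10.0    # dark lane, so vision is noisy
hearing_estimate, hearing_sigma = 20.0, 5.0   # quiet lane, so hearing is reliable

combined, sigma, weights = combine_cues(
    [vision_estimate, hearing_estimate], [vision_sigma, hearing_sigma]
)
print(f"weights (vision, hearing): {weights[0]:.2f}, {weights[1]:.2f}")
print(f"combined estimate: {combined:.1f} m, sigma {sigma:.2f} m")
```

With these made-up values, vision's variance is four times that of hearing, so hearing receives 80% of the weight. The combined estimate (22 m) lands between the two cues but closer to what the ears report, and its uncertainty (about 4.47 m) is smaller than either sense achieves alone.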

This has many applications in VR. Our recent work at the University of Bath has applied this method to a problem with how people estimate distances when wearing virtual reality headsets. For example, a driving simulator used to teach people to drive could lead learners to compress distances in VR, judging objects to be closer than they really are. That would make the technology unsuitable for a learning environment where real-world risk factors come into play.

Understanding how people integrate information from their senses is crucial to the long-term success of VR, because VR experiences are not solely visual. Maximum likelihood estimation helps to model how faithfully a VR system needs to render each part of its multi-sensory environment. Better knowledge of human perception will lead to even more immersive VR experiences.

Put simply, it's not a matter of separating each signal from its noise; it's about combining all the signals, noise included, to arrive at the most likely result. That is what virtual reality needs to get right if it is to work in practical applications beyond the entertainment world.

Provided by The Conversation

This article was originally published on The Conversation.