Using supercomputers to delve into the building blocks of matter

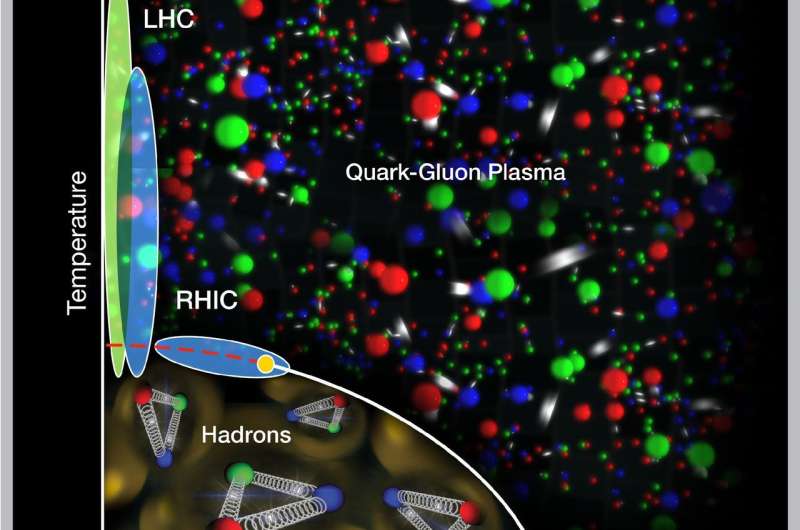

Nuclear physicists are known for their atom-smashing explorations of the building blocks of visible matter. At the Relativistic Heavy Ion Collider (RHIC), a particle collider at the U.S. Department of Energy's (DOE) Brookhaven National Laboratory, and the Large Hadron Collider (LHC) at Europe's CERN laboratory, they steer atomic nuclei into head-on collisions to learn about the subtle interactions of the quarks and gluons within.

To fully understand what happens in these particle smashups and how quarks and gluons form the structure of everything we see in the universe today, the scientists also need sophisticated computational tools: software and algorithms for tracking and analyzing the data and for performing the complex calculations that model what they expect to find.

Now, with funding from DOE's Office of Nuclear Physics and the Office of Advanced Scientific Computing Research in the Office of Science, nuclear physicists and computational scientists at Brookhaven Lab will help to develop the next generation of computational tools to push the field forward. Their software and workflow management systems will be designed to exploit the diverse and continually evolving architectures of DOE's Leadership Computing Facilities, which include some of the most powerful supercomputers and fastest data-sharing networks in the world. Brookhaven Lab will receive approximately $2.5 million over the next five years to support this effort, which will enable nuclear physics research at RHIC (a DOE Office of Science User Facility) and the LHC.

The Brookhaven "hub" will be one of three funded by DOE's Scientific Discovery through Advanced Computing program for 2017 (also known as SciDAC4) under a proposal led by DOE's Thomas Jefferson National Accelerator Facility. The overall aim of these projects is to improve future calculations of Quantum Chromodynamics (QCD), the theory that describes quarks and gluons and their interactions.

"We cannot just do these calculations on a laptop," said nuclear theorist Swagato Mukherjee, who will lead the Brookhaven team. "We need supercomputers and special algorithms and techniques to make the calculations accessible in a reasonable timeframe."

Scientists carry out QCD calculations by representing the possible positions and interactions of quarks and gluons as points on an imaginary 4-D space-time lattice. Such "lattice QCD" calculations involve billions of variables. And the complexity of the calculations grows as the questions scientists seek to answer require simulations of quark and gluon interactions on smaller and smaller scales.

For example, a proposed upgraded experiment at RHIC known as sPHENIX aims to track the interactions of more massive quarks with the quark-gluon plasma created in heavy ion collisions. These studies will help scientists probe the behavior of the liquid-like quark-gluon plasma at shorter length scales.

"If you want to probe things at shorter distance scales, you need to reduce the spacing between points on the lattice. But the overall lattice size is the same, so there are more points, more closely packed," Mukherjee said.
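To put rough numbers on that scaling, here is a minimal sketch. It assumes a hypercubic lattice with the same spacing in all four space-time directions and a fixed physical extent. The function name and the sizes used (6.4 fm per side, spacings of 0.1 fm and 0.05 fm) are illustrative assumptions, not values from the Brookhaven project.

```python
def lattice_sites(extent_fm: float, spacing_fm: float, dims: int = 4) -> int:
    """Count the sites on a hypercubic lattice of fixed physical extent.

    extent_fm and spacing_fm are illustrative values in femtometers;
    the same spacing is assumed in every direction.
    """
    points_per_side = round(extent_fm / spacing_fm)
    return points_per_side ** dims


# Same overall lattice size, but the spacing is halved.
coarse = lattice_sites(extent_fm=6.4, spacing_fm=0.1)   # 64 points per side
fine = lattice_sites(extent_fm=6.4, spacing_fm=0.05)    # 128 points per side

print(f"coarse lattice: {coarse:,} sites")
print(f"fine lattice:   {fine:,} sites")
print(f"growth factor:  {fine // coarse}x")
```

Under these assumptions, halving the spacing doubles the number of points along each of the four directions, so the number of lattice sites grows by a factor of 2^4 = 16 before any other cost of the calculation is counted.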

Similarly, when exploring the quark-gluon interactions in the densest part of the "phase diagram"—a map of how quarks and gluons exist under different conditions of temperature and pressure—scientists are looking for subtle changes that could indicate the existence of a "critical point," a sudden shift in the way the nuclear matter changes phases. RHIC physicists have a plan to conduct collisions at a range of energies—a beam energy scan—to search for this QCD critical point.

"To find a critical point, you need to probe for an increase in fluctuations, which requires more different configurations of quarks and gluons. That complexity makes the calculations orders of magnitude more difficult," Mukherjee said.

Fortunately, there's a new generation of supercomputers on the horizon, offering improvements in both speed and the way processing is done. But to make maximal use of those new capabilities, the software and other computational tools must also evolve.

"Our goal is to develop the tools and analysis methods to enable the next generation of supercomputers to help sort through and make sense of hot QCD data," Mukherjee said.

A key challenge will be developing tools that can be used across a range of new supercomputing architectures, which are also still under development.

"No one right now has an idea of how they will operate, but we know they will have very heterogeneous architectures," said Brookhaven physicist Sergey Panitkin. "So we need to develop systems to work on different kinds of supercomputers. We want to squeeze every ounce of performance out of the newest supercomputers, and we want to do it in a centralized place, with one input and seamless interaction for users," he said.

The effort will build on experience gained developing workflow management tools to feed high-energy physics data from the LHC's ATLAS experiment into pockets of unused time on DOE supercomputers. "This is a great example of synergy between high energy physics and nuclear physics to make things more efficient," Panitkin said.

A major focus will be to design tools that are "fault tolerant"—able to automatically reroute or resubmit jobs to whatever computing resources are available without the system users having to worry about making those requests. "The idea is to free physicists to think about physics," Panitkin said.
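The resubmission idea can be illustrated with a toy sketch. This is not the Brookhaven team's workflow system. Every name here (`JobFailed`, `submit_with_failover`, `busy_machine`, `idle_machine`) is hypothetical, and each computing resource is modeled as a plain Python function that either returns a result or raises an error.

```python
from typing import Callable, List, Sequence


class JobFailed(Exception):
    """Raised by a (hypothetical) resource that cannot finish a job."""


def submit_with_failover(job: str,
                         resources: Sequence[Callable[[str], str]],
                         max_rounds: int = 2) -> str:
    """Try each resource in turn and resubmit elsewhere on failure."""
    errors: List[str] = []
    for _ in range(max_rounds):
        for run_on in resources:
            try:
                return run_on(job)          # success: stop retrying
            except JobFailed as exc:
                errors.append(str(exc))     # remember why, then move on
    raise JobFailed(f"{job!r} failed on every resource: {errors}")


# Hypothetical stand-ins for two computing resources.
def busy_machine(job: str) -> str:
    raise JobFailed("no free nodes")


def idle_machine(job: str) -> str:
    return f"{job} done on idle_machine"


result = submit_with_failover("lattice-config-042", [busy_machine, idle_machine])
print(result)
```

The caller submits the job once and never sees the failure on the first resource; the wrapper handles the rerouting. A production system would also need queuing, monitoring, and data movement, none of which this sketch attempts.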

Mukherjee, Panitkin, and other members of the Brookhaven team will collaborate with scientists in Brookhaven's Computational Science Initiative and test their ideas on in-house supercomputing resources. The local machines share architectural characteristics with leadership-class supercomputers, albeit at a smaller scale.

"Our small-scale systems are actually better for trying out our new tools," Mukherjee said. With trial and error, they'll then scale up what works for the radically different supercomputing architectures on the horizon.

The tools the Brookhaven team develops will ultimately benefit nuclear research facilities across the DOE complex, and potentially other fields of science as well.

Provided by US Department of Energy