Deep learning reconstructs holograms

Deep learning, which uses multi-layered artificial neural networks for automated analysis of data, has experienced a renaissance over the last decade. It is one of the most exciting forms of machine learning and is behind several recent leapfrog advances in technology, including real-time speech recognition and translation as well as image and video labeling and captioning. In image analysis especially, deep learning shows significant promise for automated search and labeling of features of interest, such as abnormal regions in a medical image.

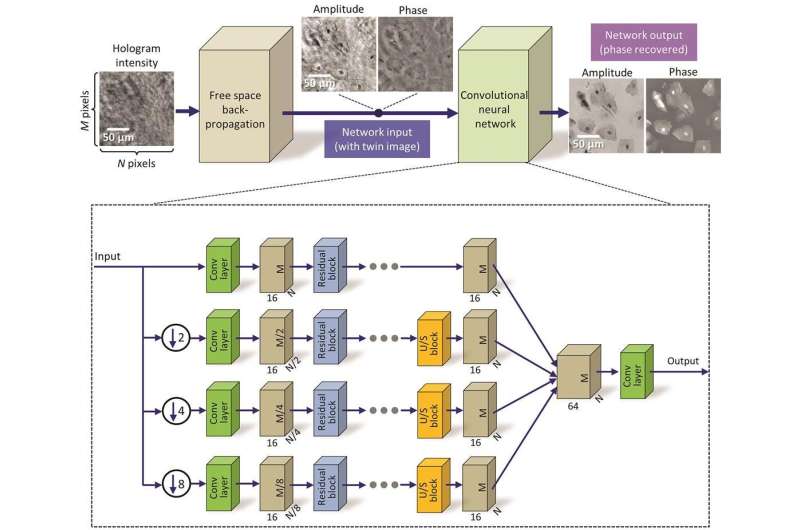

Now, UCLA researchers have demonstrated a new use for deep learning: reconstructing a hologram to form a microscopic image of an object. In a recent article published in Light: Science & Applications, a Springer Nature journal, they show that a neural network can learn to perform phase recovery and holographic image reconstruction after appropriate training. This deep learning-based approach provides a fundamentally new framework for holographic imaging. Compared to existing approaches, it is significantly faster to compute and reconstructs improved images of objects from a single hologram, so it also requires fewer measurements.

This research was led by Dr. Aydogan Ozcan, an associate director of the UCLA California NanoSystems Institute and the Chancellor's Professor of electrical and computer engineering at the UCLA Henry Samueli School of Engineering and Applied Science, along with Dr. Yair Rivenson, a postdoctoral scholar, and Yibo Zhang, a graduate student, both at the UCLA electrical and computer engineering department.

The authors validated this deep learning-based approach by reconstructing holograms of various samples, including blood and Pap smears (used for cervical cancer screening) as well as thin sections of tissue samples used in pathology. In all of these, the approach successfully eliminated the spatial artifacts that arise because phase information is lost during the hologram recording process. Stated differently, after training, the neural network has learned to extract and separate the spatial features of the true image of the object from undesired light interference and related artifacts. Remarkably, this deep learning-based hologram recovery was achieved without any modeling of light-matter interaction or a solution of the wave equation. "This is an exciting achievement since traditional physics-based hologram reconstruction methods have been replaced by a deep learning based computational approach," said Rivenson.
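To make the "lost phase information" concrete, here is a minimal NumPy sketch of the underlying problem. It is not the authors' neural network or their code. It simulates a sensor that records only intensity, then compares back-propagating the full complex field with back-propagating the recorded amplitude alone. The wavelength, pixel size, distance, test object and the `propagate` helper are all illustrative assumptions, not values from the article.

```python
import numpy as np

def propagate(field, wavelength, pixel, distance):
    """Angular spectrum propagation of a complex field over `distance` (metres)."""
    n = field.shape[0]
    freqs = np.fft.fftfreq(n, d=pixel)            # spatial frequencies, cycles/m
    fx, fy = np.meshgrid(freqs, freqs)
    arg = 1.0 / wavelength**2 - fx**2 - fy**2     # > 0 for propagating waves
    kz = 2.0 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    transfer = np.where(arg > 0, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

# Illustrative (assumed) parameters: green-ish light, 1 micron pixels.
wavelength, pixel, distance, n = 0.5e-6, 1.0e-6, 300e-6, 128

# Hypothetical test object: a partially absorbing disk on a clear background.
yy, xx = np.mgrid[:n, :n] - n // 2
obj = np.ones((n, n), dtype=complex)
obj[xx**2 + yy**2 < 16**2] = 0.5

# The sensor sees only intensity, so the phase of the field is discarded.
at_sensor = propagate(obj, wavelength, pixel, distance)
hologram = np.abs(at_sensor) ** 2

# Back-propagate with the true phase, and with the phase missing.
with_phase = propagate(at_sensor, wavelength, pixel, -distance)
without_phase = propagate(np.sqrt(hologram), wavelength, pixel, -distance)

err_full = np.mean(np.abs(np.abs(with_phase) - np.abs(obj)))
err_naive = np.mean(np.abs(np.abs(without_phase) - np.abs(obj)))
print("mean amplitude error with phase:   ", err_full)
print("mean amplitude error without phase:", err_naive)
```

In this toy setup the reconstruction that keeps the phase matches the object to numerical precision, while the intensity-only reconstruction carries residual artifacts. Removing those artifacts from a single hologram is the task the trained network is described as learning.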

"These results are broadly applicable to any phase recovery and holographic imaging problem, and this deep learning-based framework opens up a myriad of opportunities to design fundamentally new coherent imaging systems, spanning different parts of the electromagnetic spectrum, including visible wavelengths as well as the X-ray regime," added Ozcan, who is also an HHMI Professor with the Howard Hughes Medical Institute.

Other members of the research team were Harun Günaydın and Da Teng, members of the Ozcan Research Lab at UCLA.

More information: Accepted Article Preview: innovate.ee.ucla.edu/wp-conten … CNN-LSA-2017-wSI.pdf

innovate.ee.ucla.edu/welcome.html

Ozcan's research is supported by a Presidential Early Career Award for Scientists and Engineers, the Army Research Office, the National Science Foundation, the Office of Naval Research, the National Institutes of Health, the Howard Hughes Medical Institute, the Vodafone Americas Foundation, the Mary Kay Foundation and the Steven and Alexandra Cohen Foundation.

Journal information: Light: Science & Applications

Provided by UCLA Ozcan Research Group