Neural nets model audience reactions to movies

Disney Research used deep learning methods to develop a new means of assessing complex audience reactions to movies via facial expressions and demonstrated that the new technique outperformed conventional methods.

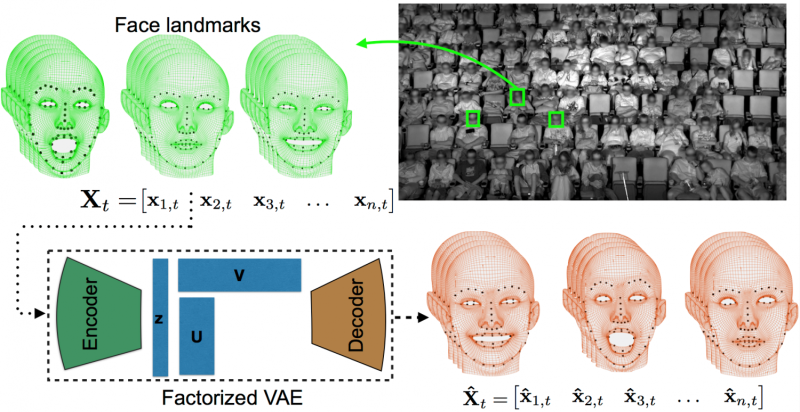

The new method, called factorized variational autoencoders, or FVAEs, demonstrated a surprising ability to reliably predict a viewer's facial expressions for the remainder of the movie after observing an audience member for only a few minutes. While the experimental results are still preliminary, the approach shows promise for more accurately modeling group facial expressions in a wide range of applications.

"The FVAEs were able to learn concepts such as smiling and laughing on their own," said Zhiwei Deng, a Ph.D. student at Simon Fraser University who served as a lab associate at Disney Research. "What's more, they were able to show how these facial expressions correlated with humorous scenes."

The researchers will present their findings at the IEEE Conference on Computer Vision and Pattern Recognition on July 22 in Honolulu.

"We are all awash in data, so it is critical to find techniques that discover patterns automatically," said Markus Gross, vice president at Disney Research. "Our research shows that deep learning techniques, which use neural networks and have revolutionized the field of artificial intelligence, are effective at reducing data while capturing its hidden patterns."

The research team applied FVAEs to 150 showings of nine mainstream movies such as "Big Hero 6," "The Jungle Book" and "Star Wars: The Force Awakens." They used a 400-seat theater instrumented with four infrared cameras to monitor the faces of the audience. The result was a dataset of 3,179 audience members and 16 million facial landmarks to be evaluated.

"It's more data than a human is going to look through," said research scientist Peter Carr. "That's where computers come in - to summarize the data without losing important details."

Similar to recommendation systems for online shopping that suggest new products based on previous purchases, FVAEs look for audience members who exhibit similar facial expressions throughout the entire movie. From these similarities, FVAEs learn a set of stereotypical reactions shared across the audience. They automatically learn the gamut of general facial expressions, like smiles, and determine how audience members "should" be reacting to a given movie based on strong correlations in reactions between audience members. These two capabilities are mutually reinforcing, which helps FVAEs learn both more effectively than previous systems. It is this combination that allows FVAEs to predict a viewer's facial expression for an entire movie based on only a few minutes of observations, said research scientist Stephan Mandt.
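The recommendation-system analogy can be illustrated with a small sketch. This is not the researchers' FVAE, which is a nonlinear neural network model; it is a plain linear low-rank factorization, used here as a stand-in, and it runs on synthetic data. The function names, the two made-up reaction patterns, and all numeric settings are assumptions chosen for illustration. The idea it shows is the same one described above: learn shared reaction patterns from many viewers, then fit a new viewer to those patterns using only the opening minutes and extrapolate to the rest of the movie.

```python
import numpy as np

def fit_time_factors(train, rank):
    """Learn `rank` shared temporal patterns from a (viewers x frames) matrix."""
    # Truncated SVD: the top right-singular vectors span the dominant
    # audience-wide reaction patterns over time.
    _, _, vt = np.linalg.svd(train, full_matrices=False)
    return vt[:rank]  # shape (rank, frames)

def predict_full_timeline(time_factors, observed_prefix):
    """Fit one viewer's latent weights on the observed frames, then extrapolate."""
    n_obs = observed_prefix.shape[0]
    basis_prefix = time_factors[:, :n_obs].T  # shape (n_obs, rank)
    weights, *_ = np.linalg.lstsq(basis_prefix, observed_prefix, rcond=None)
    return weights @ time_factors  # shape (frames,)

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

# Synthetic stand-in data (hypothetical, not the Disney dataset): each viewer's
# "smile intensity" over time is a personal mix of two shared patterns.
rng = np.random.default_rng(0)
frames, n_train, n_obs, noise = 600, 200, 60, 0.03
t = np.linspace(0.0, 1.0, frames)
patterns = np.stack([
    np.exp(-((t - 0.05) / 0.02) ** 2) + np.exp(-((t - 0.6) / 0.05) ** 2),
    np.sin(6 * np.pi * t) ** 2,
])
train_weights = rng.uniform(0.5, 1.5, size=(n_train, 2))
train = train_weights @ patterns + noise * rng.standard_normal((n_train, frames))

time_factors = fit_time_factors(train, rank=2)

# A new viewer is observed for only the first 10% of the timeline.
new_clean = np.array([1.4, 0.6]) @ patterns
new_observed = new_clean[:n_obs] + noise * rng.standard_normal(n_obs)
prediction = predict_full_timeline(time_factors, new_observed)

# Compare against simply predicting the audience-average reaction.
rmse_factorized = rmse(prediction[n_obs:], new_clean[n_obs:])
rmse_baseline = rmse(train.mean(axis=0)[n_obs:], new_clean[n_obs:])
print(f"factorized: {rmse_factorized:.3f}  audience-mean baseline: {rmse_baseline:.3f}")
```

In this toy setting the factorized prediction for the unseen part of the timeline should track the viewer more closely than the audience average does, because the viewer's personal weights are recovered from the opening frames. A linear model like this cannot learn the nonlinear structure of real facial landmarks, which is the gap the FVAE is described as addressing.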

The pattern recognition technique is not limited to faces; it can be applied to any time series data collected from a group of objects. "Once a model is learned, we can generate artificial data that looks realistic," said Yisong Yue, an assistant professor of computing and mathematical sciences at the California Institute of Technology. For instance, if FVAEs were used to analyze a forest - noting differences in how trees respond to wind based on their type and size as well as wind speed - those models could be used to simulate a forest in animation.

In addition to Carr, Deng, Mandt and Yue, the research team included Rajitha Navarathna and Iain Matthews of Disney Research and Greg Mori of Simon Fraser University.

Combining creativity and innovation, this research continues Disney's rich legacy of leveraging technology to enhance the tools and systems of tomorrow.

More information: "Factorized Variational Autoencoders for Modeling Audience Reactions to Movies"

Provided by Disney Research