Artificial intelligence creeps into daily life

Mark Zuckerberg envisions a software system inspired by the "Iron Man" character Jarvis as a virtual butler managing his household.

The Facebook founder's dream reflects how artificial intelligence, no longer just science fiction, is slowly but surely creeping into our daily lives.

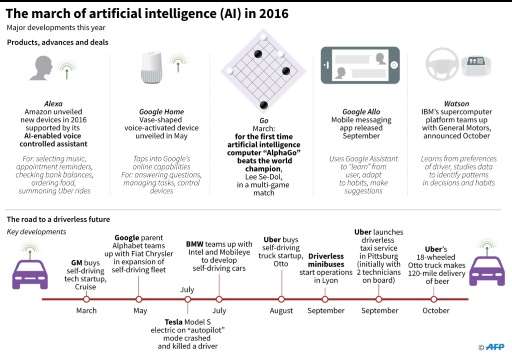

Artificial intelligence, or AI, is gaining a foothold in people's homes, starting with Amazon devices such as its Echo speaker, which links to the personal assistant "Alexa" to answer questions and control connected devices such as appliances or light bulbs.

Analyst Carolina Milanesi of the research firm Creative Strategies said that "2016 was the year about raising awareness, and exposing consumers to the idea of AI in a more mass market way."

Milanesi said it may take time for the technology to fulfill its potential, noting that companies need "a strong hook" to bring large numbers of consumers into this world.

Consumer Intelligence Research Partners estimates that Amazon has sold more than five million of its connected speakers such as Echo since 2014, in a market now heating up with competition from Google Home and others likely in development.

Google, meanwhile, is also using its AI prowess to make smartphones smarter: its Allo messenger can, for example, suggest a meeting or deliver relevant information during a conversation. Among other tech giants, Apple has been quietly ramping up the capabilities of its Siri digital assistant, and Facebook those of its Messenger platform.

Driving the car

AI is also the key "driver" for autonomous vehicles, around which Google, Uber, automakers and others have expanded efforts in the past year.

Amazon, for its part, is seeking to put AI to work in the supermarket. It is testing a system without cash registers or lines, where consumers simply grab their products and go, and have a bill tallied by artificial intelligence.

Stanford University AI researcher Alexandre Alahi said he sees a future "where intelligent machines are omnipresent in our daily lives."

"We will see robots in the home and (powering) self-driving cars, but also in railway stations, hospitals and elsewhere in cities," he said.

This could include delivery robots or devices to help mobility for blind people, he noted.

Safety, health, productivity

These technologies "will help improve our safety, our health, and our productivity," Alahi said.

A system of sensors, for example, can monitor a hospital patient 24 hours a day, and may allow elderly people to remain at home with better medical surveillance.

These systems rely on powerful computers which can crunch, analyze and interpret data.

One example comes from IBM, whose Watson supercomputer systems are offering "cognitive health" programs that can analyze a person's genome and offer personalized treatment for cancer.

Meanwhile, Google recently announced it had developed an algorithm that can detect diabetic retinopathy, a cause of blindness, by analyzing retina images.

While Alahi said AI systems designed to recognize and interpret data from images "are close to human performance," more work needs to be done to improve "social intelligence," or understanding the subtleties of our everyday decisions.

A self-driving car, for example, can easily navigate around Google's home base in Mountain View, California, but may have more problems around the Arc de Triomphe in Paris, where driving behaviors are less predictable.

Alahi said robotics needs to understand the unwritten social behaviors used in daily life, which can vary from one culture to another.

A robot, for example, might cut through a group of people in a train station to find the most efficient path, unknowingly violating social rules on personal space.

"There are situations where technology is not yet capable of understanding human behavior," said Alahi, who is part of a research project using a robot, with the aim of understanding pedestrian behavior.

These kinds of robots may be technological marvels, but they also raise fears that they could get out of control, concerns heightened by movies like "Terminator."

"It's all scary, but this is going to take years to happen, and by the time it's done, we'll be ready for it," said Milanesi.

© 2016 AFP