Liquid silicon: Computer chips could bridge the gap between computation and storage

Computer chips in development at the University of Wisconsin-Madison could make future computers more efficient and powerful by combining tasks usually kept separate by design.

Jing Li, an assistant professor of electrical and computer engineering at UW-Madison, is creating computer chips that can be configured to perform complex calculations and store massive amounts of information within the same integrated unit, while also communicating efficiently with other chips. She calls them "liquid silicon."

"Liquid means software and silicon means hardware. It is a collaborative software/hardware technique," says Li. "You can have a supercomputer in a box if you want. We want to target a lot of very interesting and data-intensive applications, including facial or voice recognition, natural language processing, and graph analytics."

In modern computers, high-speed number-crunching and large-scale data storage usually fall to two entirely different types of hardware: processors and memory.

"There's a huge bottleneck when classical computers need to move data between memory and processor," says Li. "We're building a unified hardware that can bridge the gap between computation and storage."

Processor and memory chips are typically produced separately, often by different manufacturing foundries, and then assembled on printed circuit boards by system engineers to make computers and smartphones. Because of this separation, even simple operations such as searches take multiple steps: the data must first be fetched from memory and then moved all the way up through the deep storage hierarchy to the processor core.
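To make the bottleneck concrete, the following is a toy Python model, not a description of Li's hardware. It counts the bytes that cross a hypothetical processor/memory link during a search. In the conventional case every record is shipped to the processor for comparison; in the in-memory case the search key is sent once, the comparison happens where the data lives, and only matching positions travel back. The names `Bus`, `conventional_search` and `in_memory_search` are illustrative assumptions, and the model ignores caches, latency and parallelism.

```python
from dataclasses import dataclass


@dataclass
class Bus:
    """Hypothetical processor/memory link that tallies bytes crossing it."""
    bytes_moved: int = 0

    def transfer(self, data: bytes) -> bytes:
        self.bytes_moved += len(data)
        return data


def conventional_search(memory: list[bytes], key: bytes, bus: Bus) -> list[int]:
    # Separate processor and memory: every record is fetched across the link
    # and compared at the processor.
    hits = []
    for i, record in enumerate(memory):
        record_at_cpu = bus.transfer(record)
        if record_at_cpu == key:
            hits.append(i)
    return hits


def in_memory_search(memory: list[bytes], key: bytes, bus: Bus) -> list[int]:
    # Computation next to storage (assumed): send the key once, compare in
    # place, and return only the 4-byte indices of matching records.
    bus.transfer(key)
    hits = [i for i, record in enumerate(memory) if record == key]
    for i in hits:
        bus.transfer(i.to_bytes(4, "little"))
    return hits
```

With 1,000 records of 64 bytes each and four matches, the conventional search moves 64,000 bytes across the link, while the in-memory version moves 80 (a 64-byte key plus four 4-byte indices). Real savings depend on the hardware; the point is only that the traffic scales with the number of results rather than the size of the data.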

The chips Li is developing, by contrast, combine memory, computation and communication in a single device using a layered design called monolithic 3D integration: silicon semiconductor circuitry on the bottom is connected to solid-state memory arrays on top by dense metal-to-metal links.

End users will be able to configure the devices to allocate more or fewer resources to memory or computation, depending on what types of applications a system needs to run.
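As an illustration of what such configuration might involve, here is a minimal Python sketch under stated assumptions. It supposes a hypothetical pool of identical reconfigurable tiles that can each serve as either compute or memory, and splits them in proportion to an application's relative compute and memory demand. The `Allocation` type, the `allocate` function and the tile abstraction are assumptions for illustration; the article does not describe how the chips expose this choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Allocation:
    compute_tiles: int
    memory_tiles: int


def allocate(total_tiles: int, compute_demand: float, memory_demand: float) -> Allocation:
    """Split a hypothetical pool of reconfigurable tiles by relative demand."""
    if total_tiles < 2:
        raise ValueError("need at least one tile for each role")
    if compute_demand < 0 or memory_demand < 0 or compute_demand + memory_demand == 0:
        raise ValueError("demands must be non-negative and not both zero")
    # Proportional share of tiles assigned to computation.
    share = compute_demand / (compute_demand + memory_demand)
    compute = round(total_tiles * share)
    # Keep at least one tile in each role so neither function disappears.
    compute = min(max(compute, 1), total_tiles - 1)
    return Allocation(compute, total_tiles - compute)
```

For example, `allocate(64, 3, 1)` gives a compute-heavy split of 48 compute and 16 memory tiles, while `allocate(64, 1, 3)` reverses it. A proportional rule is only one simple policy; a real system would presumably weigh bandwidth, capacity and communication costs as well.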

"It can be dynamic and flexible," says Li. "We originally worried it might be too hard to use because there are too many options. But with proper optimization, anyone can take advantage of the rich flexibility offered by our hardware."

To help people harness the new chip's potential, Li's group is also developing software that translates popular programming languages into the chip's machine code, a process called compilation.

"If I just handed you something and said, 'This is a supercomputer in a box,' you might not be able to use it if the programming interface is too difficult," says Li. "You cannot imagine people programming in terms of binary zeroes and ones. It would be too painful."

Thanks to her compilation software, programmers will be able to port their applications directly onto the new type of hardware without changing their coding habits.

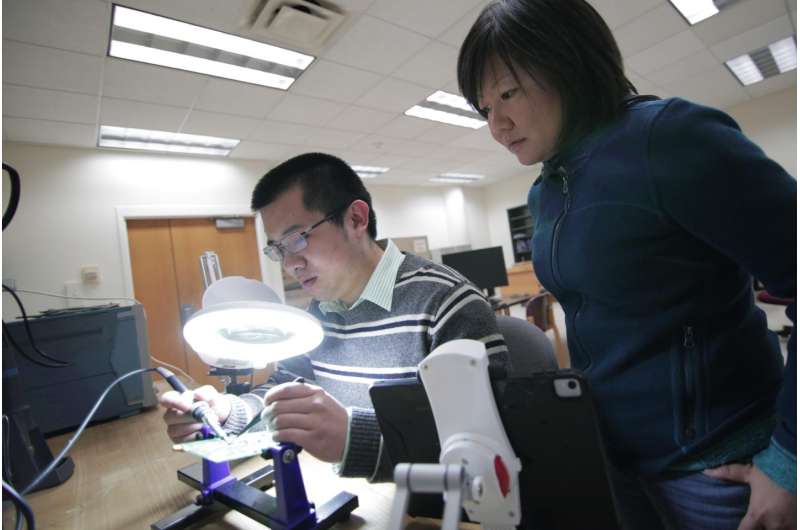

To evaluate the performance of prototype liquid silicon chips, Li and her students built an automated testing system from scratch. The platform can reveal reliability problems better than even the most advanced industry testing, and multiple companies have sent their chips to Li for evaluation.

Given that testing accounts for more than half the consumer cost of computer chips, having such advanced infrastructure at UW-Madison can help make liquid silicon chips a reality and facilitate future research.

"We can do all types of device-level, circuit-level and system-level testing with our platform," says Li. "Our industry partners told us that our testing system does the entire job of a test engineer automatically."

Li's work is supported by a Defense Advanced Research Projects Agency Young Faculty Award, a first for a computational researcher at UW-Madison. She is one of 25 recipients nationwide; the awards provide as much as $500,000 over two years to fund research on topics ranging from gene therapy to machine learning.

Provided by University of Wisconsin-Madison