System converts stereoscopic 3-D video content for use in glasses-less 3-D displays

"Glasses-less" 3-D displays now commercially available dispense with the need for cumbersome glasses, but existing 3-D stereoscopic content will not work in these new devices, which project several views of a scene simultaneously. To solve this problem, Disney Research and ETH Zurich have developed a system that can transform stereoscopic content into multiview content in real-time.

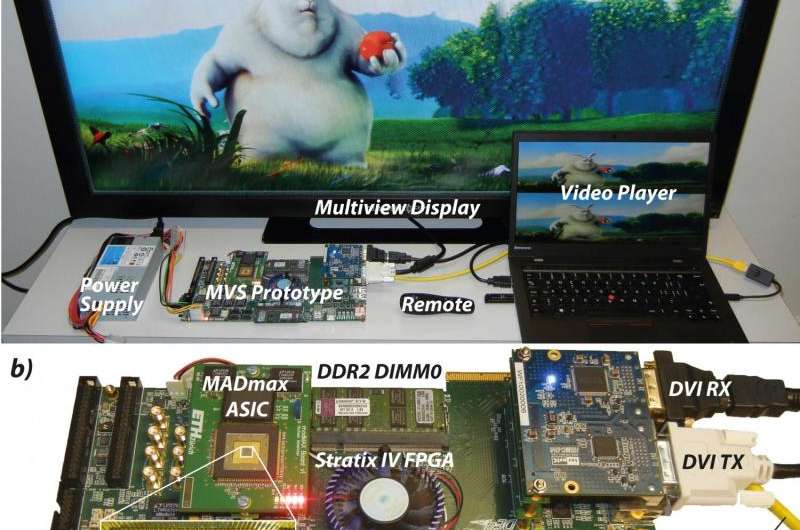

In a research paper to be presented at the IEEE International Conference on Visual Communications and Image Processing, Dec. 13-16, in Singapore, Michael Schaffner and his colleagues describe the combination of computer algorithms and hardware that can convert existing stereoscopic 3-D content so that it can be used in the glasses-less displays, called multiview autostereoscopic displays, or MADs.

Not only does the process operate automatically and in real-time, but the hardware could be integrated into a system-on-chip (SoC), making it suitable for mobile applications.

"The full potential of this new 3-D technology won't be achieved simply by eliminating the need for glasses," said Markus Gross, vice president of research at Disney Research. "We also need content, which is largely nonexistent in this new format and often impractical to transmit, even when it does exist. It's critical that the systems necessary for generating that content be so efficient and so mobile that they can be used in any device, anywhere."

MADs enable a 3-D experience by simultaneously projecting several views of a scene, rather than just the two views of conventional, stereoscopic 3-D content. Researchers therefore have begun to develop a number of multiview synthesis (MVS) methods to bridge this gap. One approach has been depth image-based rendering, or DIBR, which uses the original views to build a depth map that records, for each pixel, the distance from the camera to the scene. But building depth maps is difficult, and less-than-perfect depth maps can result in poor-quality images.
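To make the DIBR idea concrete, here is a minimal sketch of depth-based view shifting on a single image row. It is an illustration only, not code from any system described here: the function name `dibr_shift_scanline`, the sign convention for the shift and the use of `None` to mark holes are all assumptions made for this example.

```python
def dibr_shift_scanline(pixels, disparity, alpha):
    """Forward-warp one image row to a virtual view (illustrative sketch).

    pixels:    pixel values for one row of an input view
    disparity: per-pixel horizontal disparity in pixels, derived from the
               depth map; larger values mean the point is closer to the camera
    alpha:     virtual view position, 0.0 = input view, 1.0 = other input view
    Returns the warped row; None marks holes where no input pixel landed.
    """
    width = len(pixels)
    out = [None] * width
    out_disp = [float("-inf")] * width  # disparity of the pixel currently stored
    for x in range(width):
        target = x + int(round(alpha * disparity[x]))
        # Nearer pixels (larger disparity) win when two pixels collide.
        if 0 <= target < width and disparity[x] > out_disp[target]:
            out[target] = pixels[x]
            out_disp[target] = disparity[x]
    return out


row = ["a", "b", "c", "d", "e", "f"]
disp = [0, 0, 2, 2, 0, 0]  # "c" and "d" belong to a nearby object
shifted = dibr_shift_scanline(row, disp, 1.0)
```

In this toy row the nearby object moves two pixels and leaves two holes behind it. An error in the disparity values would put those pixels in the wrong place, which is one way an imperfect depth map degrades the synthesized image.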

Schaffner, a Ph.D. student at Disney Research and ETH Zurich's Integrated Systems Laboratory, said the Disney team took a different approach based on image domain warping, or IDW. This method dispenses with problematic depth maps. Instead, the left and right input images are analyzed to reveal features such as saliency information, point correspondences and edges. Solving an optimization problem then yields so-called image warps, which transform the input images to new viewing positions between the two original views.
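The notion of "viewing positions between the two original views" can be illustrated with a small sketch. This is not the researchers' IDW algorithm, which computes its warps by solving an optimization problem; it only shows plain linear interpolation of matched feature positions. The even spacing of the views and the names `view_positions` and `interpolate_points` are assumptions made for this example.

```python
def view_positions(num_views):
    """Evenly spaced positions strictly between the left (0.0) and right (1.0) views.

    Even spacing is an assumption for illustration only.
    """
    return [k / (num_views + 1) for k in range(1, num_views + 1)]


def interpolate_points(left_points, right_points, alpha):
    """Linearly blend matched (x, y) feature positions for view position alpha."""
    return [
        ((1 - alpha) * xl + alpha * xr, (1 - alpha) * yl + alpha * yr)
        for (xl, yl), (xr, yr) in zip(left_points, right_points)
    ]


# Two hypothetical features matched between the left and right images.
left = [(100.0, 50.0), (200.0, 80.0)]
right = [(92.0, 50.0), (196.0, 80.0)]

for alpha in view_positions(8):
    points = interpolate_points(left, right, alpha)
    print(f"alpha={alpha:.3f}", points)
```

Each of the eight positions gives a slightly different placement of the matched features, and a warp for that view would have to move the image content accordingly.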

The researchers were able to use this method to synthesize eight new views in real-time and in high definition.

The IDW method is computationally intensive, Schaffner said, but the hardware architecture the team developed is efficient enough to enable fully automatic multiview synthesis.

"To our knowledge, this is the first hardware implementation of a complete IDW-based MVS system capable of full HD-quality view synthesis with eight views in real-time," Schaffner said. "The hardware could be integrated as an energy-efficient co-processor within a SoC, thereby paving the way toward mobile MVS in real-time."

More information: "Automatic Multiview Synthesis – Towards a Mobile System on a Chip"

Provided by Disney Research