Visual authoring tool helps non-experts build their own digital story worlds

Creating characters and situations that computers can use to generate stories for video games is a task that normally requires expert knowledge, but Disney Research is developing a new interface that can help more people build these digital story worlds.

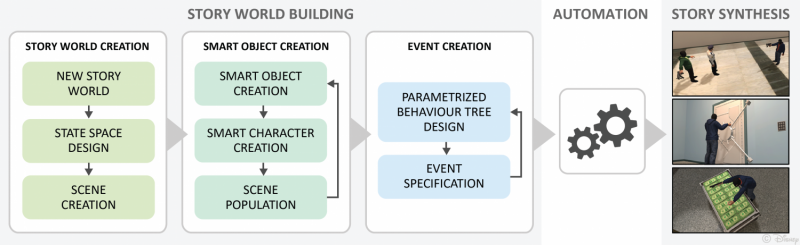

The graphical interface guides users through the process of creating a story world, helping them populate the domain with "smart" characters and objects, determine how those characters and objects relate to and interact with one another, and define events that can drive compelling narratives. Designing story worlds is an important first step toward creating and experiencing digital narratives.

The research team, which includes researchers from Rutgers University and ETH Zurich, presented preliminary findings of their ongoing research at INT8 2015, the 8th Workshop on Intelligent Narrative Technologies.

"We experience a shift from simple, linear stories into complex, participatory story worlds that are told across different media types," said Markus Gross, vice president of research at Disney Research. "As the boundaries between content consumers and content creators continue to blur, we want to democratize story world creation and expand the pool of authors by making it possible for both experts and novice users to construct a space for compelling narrative content."

To enable a computer program to reason, infer and ultimately generate stories, people must first provide the computer with a wealth of domain knowledge: information on places, characters and objects, how they relate to one another, and how they interact. However, according to Steven Poulakos, a post-doctoral researcher at Disney Research, the languages and interfaces used for specifying this domain knowledge are highly specialized, making them difficult for anyone who lacks equally specialized knowledge to use.

"Our story world builder is designed to build up components of a full story world with the semantics required for automatic story generation," Poulakos said.

"Our long-term mission is to empower anyone to create their own digital stories by providing easy-to-use, intuitive visual authoring interfaces," said Mubbasir Kapadia, an assistant professor in the Computer Science Department at Rutgers University. "We believe this new tool is a significant step toward that goal, though much work remains ahead of us."

The graphical interface leads the user through three main steps. The first step is story world creation, in which the user configures the scene and establishes all of the possible states and relationships. In a bank robbery scenario that the researchers used to demonstrate their system, this step included specifying states, such as a bank vault being locked or a character being designated as a robber, and defining relationships, such as two characters being allies.

In the next step, users author "smart characters" and "smart objects" by defining how characters and objects interact with each other. For example, a robber is able to grab an object or press a button that might open a vault door.
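The first two steps can be pictured as a small data model. The following Python sketch is illustrative only and is not the researchers' implementation: the class names (`SmartObject`, `StoryWorld`, `Affordance`), the state labels (`IsRobber`, `IsLocked`, `IsOpen`, `IsIncapacitated`) and the relation name (`IsAlliedWith`) are all assumptions chosen to mirror the bank robbery example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SmartObject:
    """A character or object carrying a set of symbolic state labels."""
    name: str
    states: set = field(default_factory=set)


@dataclass
class StoryWorld:
    """Holds the objects in the scene and the relationships between them."""
    objects: dict = field(default_factory=dict)
    relations: set = field(default_factory=set)  # (relation, subject, other)

    def add(self, obj):
        self.objects[obj.name] = obj
        return obj

    def relate(self, relation, a, b):
        self.relations.add((relation, a.name, b.name))

    def related(self, relation, a, b):
        return (relation, a.name, b.name) in self.relations


@dataclass
class Affordance:
    """An interaction an actor can perform on a target (hypothetical model)."""
    name: str
    precondition: Callable[[SmartObject, SmartObject], bool]
    effect: Callable[[SmartObject, SmartObject], None]

    def apply(self, actor, target):
        # Change the world only when the precondition holds.
        if not self.precondition(actor, target):
            return False
        self.effect(actor, target)
        return True


# Step 1: story world creation (states and relationships).
world = StoryWorld()
robber = world.add(SmartObject("robber", {"IsRobber"}))
partner = world.add(SmartObject("partner", {"IsRobber"}))
vault = world.add(SmartObject("vault", {"IsLocked"}))
button = world.add(SmartObject("button"))
world.relate("IsAlliedWith", robber, partner)


# Step 2: an affordance; pressing the button opens the vault.
def open_vault(actor, target):
    vault.states.discard("IsLocked")
    vault.states.add("IsOpen")


press_button = Affordance(
    name="PressButton",
    # Assumed rule: an incapacitated character cannot press anything.
    precondition=lambda actor, target: "IsIncapacitated" not in actor.states,
    effect=open_vault,
)

pressed = press_button.apply(robber, button)
```

Keeping states and relationships as plain symbolic labels is one way to give a story generator something it can reason over, which is the role the article attributes to the domain knowledge.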

In the final step, event creation, the user designs Parameterized Behavior Trees, which provide a graphical, hierarchical representation for complex, multi-character interactions. One such behavior tree for the researchers' bank robbery scenario was a "distract and incapacitate" event; such an event could be used to incapacitate a bank guard, but it could also be re-used with different characters or situations.
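To make the idea of a reusable, parameterized event concrete, here is a minimal Python sketch of a behavior tree whose leaf actions refer to roles rather than to specific characters. It is a simplification under stated assumptions, not the Parameterized Behavior Tree formalism used by the researchers: the node classes (`Action`, `Sequence`), the role names (`distractor`, `attacker`, `target`) and the state labels are hypothetical.

```python
SUCCESS, FAILURE = "success", "failure"


class Action:
    """Leaf node: runs a function on the participants bound to its roles."""

    def __init__(self, name, roles, fn):
        self.name, self.roles, self.fn = name, roles, fn

    def tick(self, bindings, log):
        args = [bindings[role] for role in self.roles]
        log.append((self.name, *[a["name"] for a in args]))
        return SUCCESS if self.fn(*args) else FAILURE


class Sequence:
    """Composite node: runs children in order and stops at the first failure."""

    def __init__(self, *children):
        self.children = children

    def tick(self, bindings, log):
        for child in self.children:
            if child.tick(bindings, log) == FAILURE:
                return FAILURE
        return SUCCESS


def distract(distractor, target):
    target["states"].add("IsDistracted")
    return True


def incapacitate(attacker, target):
    # Assumed rule: only a distracted target can be incapacitated.
    if "IsDistracted" not in target["states"]:
        return False
    target["states"].add("IsIncapacitated")
    return True


# The event is defined once, over roles rather than concrete characters.
distract_and_incapacitate = Sequence(
    Action("Distract", ["distractor", "target"], distract),
    Action("Incapacitate", ["attacker", "target"], incapacitate),
)


def character(name):
    return {"name": name, "states": set()}


robber_a, robber_b = character("robber_a"), character("robber_b")
guard, teller = character("guard"), character("teller")

# First use: incapacitate the bank guard.
log = []
result = distract_and_incapacitate.tick(
    {"distractor": robber_a, "attacker": robber_b, "target": guard}, log
)

# Re-use: the same tree with different participants bound to the roles.
reuse_log = []
reuse_result = distract_and_incapacitate.tick(
    {"distractor": robber_b, "attacker": robber_a, "target": teller}, reuse_log
)
```

Because the tree only names roles, re-using the event with other characters amounts to passing a different set of bindings, which is the kind of re-use the article describes.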

More information: "Towards an Accessible Interface for Story World Building"

Provided by Disney Research