July 8, 2015 feature

'Straintronic spin neuron' may greatly improve neural computing

(Phys.org)—Researchers have proposed a new type of artificial neuron called a "straintronic spin neuron" that could serve as the basic unit of artificial neural networks—systems modeled on human brains that have the ability to compute, learn, and adapt. Compared to previous designs, the new artificial neuron is potentially orders of magnitude more energy-efficient, more robust against thermal degradation, and fires at a faster rate.

The researchers, Ayan K. Biswas, Professor Jayasimha Atulasimha, and Professor Supriyo Bandyopadhyay at Virginia Commonwealth University in Richmond, have published a paper on the straintronic spin neuron in a recent issue of Nanotechnology.

As the scientists explain, finding an effective way to mimic real neurons is essential for realizing the full potential of artificial neural networks, yet this task has proven difficult.

"Most computers are digital in nature and process information using Boolean logic," Bandyopadhyay told Phys.org. "However, there are certain computational tasks that are better suited for 'neuromorphic computing,' which is based on how the human brain perceives and processes information. This inspired the field of artificial neural networks, which made great progress in the last century but was ultimately stymied by a hardware impasse. The electronics used to implement artificial neurons and synapses employ transistors and operational amplifiers, which dissipate enormous amounts of energy in the form of heat and consume large amounts of space on a chip. These drawbacks make thermal management on the chip extremely difficult and neuromorphic computing less attractive than it should be.

"Fortunately, there are other ways to implement neurons, such as with magnetic devices. It was thought that magnetic devices will dissipate much less heat, but what we found is that they do not necessarily dissipate less heat in all circumstances. The heat dissipation depends on how the magnetic devices are switched to mimic a neuron's operation. If they are switched with current, which is the usual approach, then they do not dissipate that much less heat, and, in some circumstances, may even dissipate more heat than transistors.

"However, there is a way to switch certain types of magnets with mechanical strain generated by an electrical voltage. We found that if magnets are switched with that approach, then the magnetic neurons are indeed much less dissipative than both their transistor-based counterparts and current-switched magnetic counterparts. This is the 'straintronic spin neuron,' and it may provide a boost to neuromorphic information processing hardware."

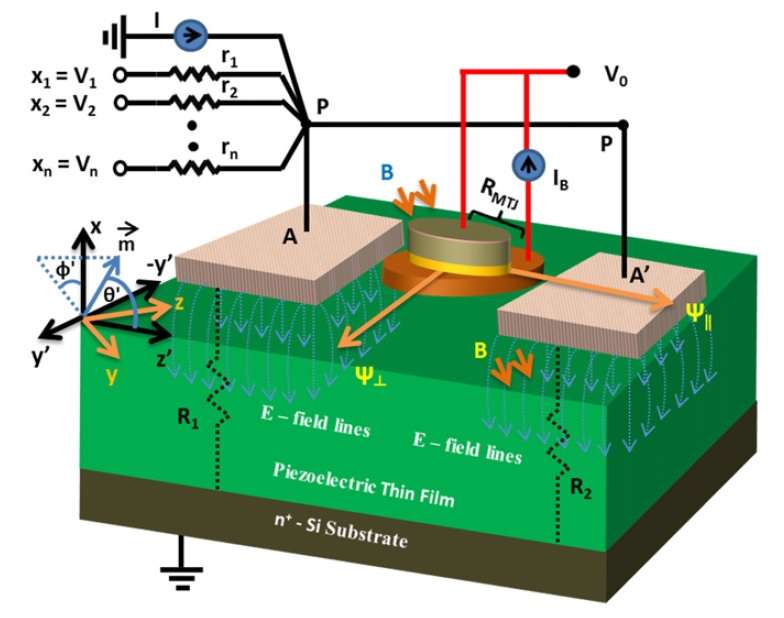

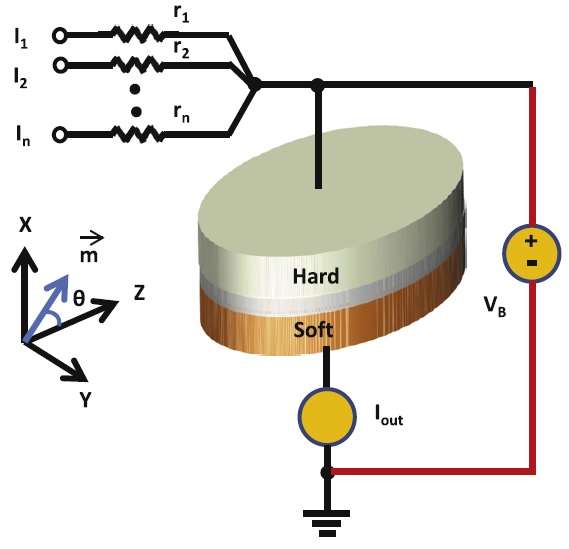

As the researchers explain, the proposed straintronic spin neuron is based on a magneto-tunneling junction, which is a tri-layered structure consisting of a hard nanomagnet, a spacer layer, and a soft magnetostrictive nanomagnet sitting on top of a piezoelectric film. Applying voltage pulses to the neuron generates a strain in the piezoelectric film, which is partially transferred to the soft magnetostrictive nanomagnet. When the strain in the nanomagnet exceeds a threshold value, the magnetization rotates abruptly, switching the resistance of the magneto-tunneling junction from one of its two stable states to the other. The resulting abrupt change in voltage across the device mimics the firing of a neuron.
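The threshold behavior described above can be illustrated with a toy model. The following Python sketch is not the researchers' device model: the class name, the linear voltage-to-strain relation, the choice of which resistance state counts as "fired," the constant read current, and all numerical values are assumptions chosen only to show the idea of an abrupt, strain-triggered change in output voltage.

```python
from dataclasses import dataclass


@dataclass
class ToyStrainNeuron:
    """Hypothetical threshold model of a strain-switched junction.

    All parameters are in arbitrary units and are illustrative assumptions,
    not values taken from the paper.
    """
    strain_per_volt: float = 1.0    # assumed linear voltage-to-strain transfer
    strain_threshold: float = 0.5   # assumed strain needed to rotate the magnetization
    r_rest: float = 1.0             # assumed junction resistance before switching
    r_fired: float = 2.0            # assumed junction resistance after switching
    read_current: float = 1.0       # assumed constant current used to read the junction

    def fires(self, v_in: float) -> bool:
        # Strain transferred to the soft nanomagnet for a given input voltage.
        strain = self.strain_per_volt * v_in
        return strain > self.strain_threshold

    def output_voltage(self, v_in: float) -> float:
        # The junction resistance jumps between two states at the threshold,
        # so the voltage across it (I * R) changes abruptly as well.
        resistance = self.r_fired if self.fires(v_in) else self.r_rest
        return self.read_current * resistance


neuron = ToyStrainNeuron()
for v in (0.2, 0.4, 0.6, 0.8):
    print(v, neuron.fires(v), neuron.output_voltage(v))
```

In this sketch the output stays flat below the threshold and jumps to a second level above it, which is the step-like response that the article likens to neuron firing.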

"The extraordinary energy efficiency of the straintronic spin neuron is due to the fact that it takes very little voltage to switch the magnetization of a soft magnetostrictive nanomagnet elastically coupled to a piezoelectric film—a system known as a 'two-phase multiferroic'—as long as the magnetostrictive nanomagnet is made of a special class of materials that have very high magnetostriction, such as Terfenol-D," the researchers explained.

In addition to being more energy-efficient, the straintronic spin neuron is also much more resilient to thermal noise than current-driven spin neurons. At temperatures above 0 K, thermal noise creates an additional random torque on the magnetization of any nanomagnet, which increases the probability that the neuron will either fire before reaching the threshold voltage or fail to fire after reaching the threshold voltage.
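The two failure modes mentioned above, firing too early and failing to fire, can be illustrated with a small Monte Carlo sketch. This is a crude stand-in and not the researchers' simulation: the thermal torque is replaced here by Gaussian noise added directly to the drive signal, and the function name and every numerical value are assumptions made for illustration.

```python
import random


def firing_error_rate(drive, threshold, noise_std, trials=10000, seed=0):
    """Estimate how often noise flips the intended firing decision.

    Assumption: thermal agitation is modeled as zero-mean Gaussian noise
    added to the drive, which is a simplification of a random torque.
    """
    rng = random.Random(seed)
    intended = drive > threshold  # what a noise-free neuron would do
    errors = 0
    for _ in range(trials):
        noisy_drive = drive + rng.gauss(0.0, noise_std)
        if (noisy_drive > threshold) != intended:
            errors += 1  # premature firing or a missed firing
    return errors / trials


# A drive close to the threshold is far more error-prone than one well below it.
near = firing_error_rate(drive=0.9, threshold=1.0, noise_std=0.2)
far = firing_error_rate(drive=0.5, threshold=1.0, noise_std=0.2)
print(near, far)
```

The wider the gap between the drive and the threshold relative to the noise, the lower the error rate in this toy model, which is why a larger margin buys reliability.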

This deleterious effect can be countered by increasing the threshold current for firing (in the case of current-driven spin neurons) or the threshold voltage for firing (in the case of voltage-driven straintronic spin neurons), but doing so also increases the energy dissipation. Here, the researchers showed that the tradeoff between energy efficiency and reliability overwhelmingly favors the straintronic spin neuron over current-driven spin neurons, which are estimated to dissipate several orders of magnitude more energy.

With these advantages, straintronic spin neurons could have a variety of applications in neural computing.

"What we have studied is a perceptron, which is a mathematical model of the artificial neuron," Atulasimha said. "There are many possible applications of this in neural computing. One area we are interested in is spike-timing-dependent plasticity, which is a form of Hebbian learning. It is widely believed that it underlies learning and information storage in the brain, and there is a vast body of literature dealing with this. Straintronic spin neurons are fired by voltage impulses, and there are clear pathways to adapt them to the spike-timing-dependent plasticity model. We are also interested in character recognition, which employs feed-forward networks and image compression. That does not exclude anything else. Wherever heat dissipation is a spoiler, the straintronic spin neuron may be able to offer a solution."
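For readers unfamiliar with the perceptron mentioned in the quote, the following Python sketch shows the textbook version: a weighted sum of inputs passed through a hard threshold, trained with the classic perceptron learning rule. It is a generic software illustration and says nothing about how the proposed device would implement weights or learning; the function names and the logical AND training task are assumptions chosen for the example.

```python
def predict(weights, bias, inputs):
    """Fire (return 1) if the weighted sum of inputs exceeds zero."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(samples, epochs=20, learning_rate=1):
    """Classic perceptron learning rule on (inputs, target) pairs."""
    weights = [0] * len(samples[0][0])
    bias = 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # Nudge each weight toward the target only when the output is wrong.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Illustrative task: learn the logical AND of two binary inputs.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, predict(weights, bias, inputs), target)
```

Because AND is linearly separable, the learning rule settles on weights that classify all four input pairs correctly.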

The next steps for the researchers will involve fabricating the physical devices.

"The proof of the pudding is always in the eating," Biswas said. "Sooner or later, this device will have to be demonstrated experimentally. Our group has experimentally demonstrated switching of a magnet's magnetization with strain in many different systems and we will strive to demonstrate the straintronic spin neuron in the future."

More information: Ayan K. Biswas, et al. "The straintronic spin-neuron." Nanotechnology. DOI: 10.1088/0957-4484/26/28/285201

Journal information: Nanotechnology

© 2015 Phys.org