January 9, 2014 weblog

Budapest team studies how humans interpret dog barks

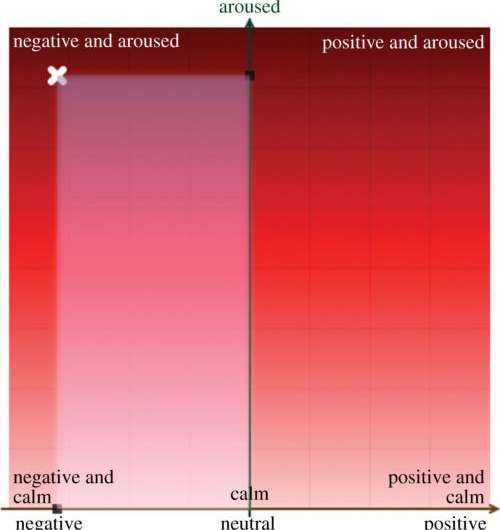

(Phys.org) - People interpret their dogs' sounds by how long or short the bark is and by how high or low its pitch is. Moreover, humans rely on the same rules to assess emotional valence and intensity in conspecific (same-species) and dog vocalizations. These are the findings of six researchers from Eotvos Lorand University and the Hungarian Academy of Sciences in Budapest. Tamás Faragó, Attila Andics, Viktor Devecseri, Anna Kis, Márta Gácsi and Ádám Miklósi said shorter barks sound more positive and higher-pitched noises seem more intense to humans. If those measures sound familiar, that is because people use the same rules to work out how their dog is feeling as they do to determine the emotional state of other humans. "Our findings demonstrate that humans rate conspecific emotional vocalizations along basic acoustic rules, and that they apply similar rules when processing dog vocal expressions," the authors wrote.

The team devised an online survey to assess how humans perceive the emotional content of both human and dog vocalizations. Thirty-nine Hungarian volunteers, six males and 33 females, were recruited via the Internet and through personal requests to take the survey, in which they listened to nonverbal sounds. For the dog's vocal repertoire, the researchers chose vocalizations from a dog sound database, collected in various contexts and representing various vocalization types. The human nonverbal vocalizations were collected from available databases used in earlier studies to assess emotional expressions. These included respiratory sounds, such as a cough, and nonsense babbling. Shorter calls from both humans and dogs were rated as more emotionally positive than longer calls. Likewise, higher-pitched samples from both humans and dogs were rated as more emotionally intense than lower-pitched ones.

In further detail, the authors reported that, for dog vocalizations, "long, high-pitched and tonal sounds can be linked to fearful inner states (high intensity, negative valence), long, low-pitched, noisy sounds to aggressiveness (lower intensity, still negative valence) and short, pulsing sounds independent of their pitch and tonality are connected to positive inner states."

The team's report appeared online earlier this week in the peer-reviewed Royal Society journal Biology Letters.

"Here, for the first time, we directly compared the emotional valence and intensity perception of dog and human non-verbal vocalizations. We revealed similar relationships between acoustic features and emotional valence and intensity ratings of human and dog vocalizations: those with shorter call lengths were rated as more positive, whereas those with a higher pitch were rated as more intense."

The authors said the study is "the first to directly compare how humans perceive human and dog emotional vocalizations." They also said that their results provide "the first evidence of the use of the same basic acoustic rules in humans for the assessment of emotional valence and intensity in both human and dog vocalizations. Further comparative studies using vocalizations from a wide variety of species may reveal the existence of a common mammalian basis for emotion communication, as suggested by our results."

Among its other lines of research, Eotvos Lorand University is known for its Family Dog Project, established in 1994 as the first research group dedicated to investigating the evolutionary and ethological foundations of the dog-human relationship. The project's researchers explore both human and dog behavior and the bond between people and dogs.

In its discussion of the dog, the project's web page observes that "Since human environment is challenging for dogs by virtue of its complex social and cognitive nature, dogs had to develop human-compatible social behavior traits including functional analogues of human communicational skills."

More information: Humans rely on the same rules to assess emotional valence and intensity in conspecific and dog vocalizations, Biology Letters, published 8 January 2014. DOI: 10.1098/rsbl.2013.0926

Abstract

Humans excel at assessing conspecific emotional valence and intensity, based solely on non-verbal vocal bursts that are also common in other mammals. It is not known, however, whether human listeners rely on similar acoustic cues to assess emotional content in conspecific and heterospecific vocalizations, and which acoustical parameters affect their performance. Here, for the first time, we directly compared the emotional valence and intensity perception of dog and human non-verbal vocalizations. We revealed similar relationships between acoustic features and emotional valence and intensity ratings of human and dog vocalizations: those with shorter call lengths were rated as more positive, whereas those with a higher pitch were rated as more intense. Our findings demonstrate that humans rate conspecific emotional vocalizations along basic acoustic rules, and that they apply similar rules when processing dog vocal expressions. This suggests that humans may utilize similar mental mechanisms for recognizing human and heterospecific vocal emotions.

Journal information: Biology Letters

© 2014 Phys.org