Algorithm searches for models that best explain experimental data

A Franklin University professor recently developed an evolutionary computation approach that offers researchers the flexibility to search for models that can best explain experimental data derived from many types of applications, including economics.

To test the algorithm underlying that approach, Esmail Bonakdarian, Ph.D., an assistant professor of Computing Sciences and Mathematics at Franklin, leveraged the Glenn IBM 1350 Opteron cluster, the flagship system of the Ohio Supercomputer Center (OSC).

“Every day researchers are confronted by large sets of survey or experimental data and faced with the challenge of ‘making sense’ of this collection and turning it into useful knowledge,” Bonakdarian said. “This data usually consists of a series of observations over a number of dimensions, and the objective is to establish a relationship between the variable of interest and other variables, for purposes of prediction or exploration.”

Bonakdarian employed his evolutionary computation approach to analyze data from two well-known, classical “public goods” problems from economics. When goods are provided to a larger community without requiring individual contributions, “free-riding” often results. However, people also tend to show a willingness to cooperate and sacrifice for the good of the group.

“While OSC resources are more often used to make discoveries in fields such as physics, chemistry or the biosciences, or to solve complex industrial and manufacturing challenges, it is always fascinating to see how our research clients employ our supercomputers to address issues in broader fields of interest, such as we find in Dr. Bonakdarian’s work in economics and evolutionary computing,” said Ashok Krishnamurthy, interim co-executive director of the center.

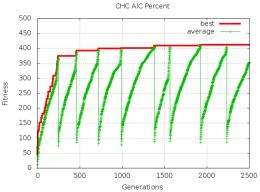

“Evolutionary algorithms are inherently suitable for parallel or distributed execution,” Bonakdarian said. “Given the right platform, this would allow for the simultaneous evaluation of many candidate solutions, i.e., models, in parallel, greatly speeding up the work.”
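As a rough illustration of that point, the sketch below scores a small population of candidate models concurrently. It is not Bonakdarian's code: the function names, the bit-mask encoding of a model (1 means a variable is included), and the toy scoring rule are all assumptions made for the example. A thread pool keeps the sketch portable; for CPU-bound model fitting one would more likely pass `ProcessPoolExecutor` or spread the work across cluster nodes.

```python
from concurrent.futures import ThreadPoolExecutor


def evaluate_population(candidates, fitness, executor_cls=ThreadPoolExecutor, max_workers=4):
    """Score every candidate concurrently; return (candidate, score) pairs in input order."""
    with executor_cls(max_workers=max_workers) as pool:
        scores = list(pool.map(fitness, candidates))
    return list(zip(candidates, scores))


def toy_fitness(mask):
    # Hypothetical stand-in for fitting a regression model:
    # rewards including variables 0 and 2, and penalizes model size.
    return 2 * mask[0] + 2 * mask[2] - 0.5 * sum(mask)


# Each tuple marks which of three variables a candidate model includes.
population = [(1, 0, 0), (1, 0, 1), (1, 1, 1), (0, 1, 0)]
scored = evaluate_population(population, toy_fitness)
best = max(scored, key=lambda pair: pair[1])
print(best)
```

Because each candidate is scored independently of the others, the evaluation step needs no coordination between workers, which is what makes this kind of search straightforward to distribute.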

Regression analysis has been the traditional tool for finding and establishing statistically significant relationships in research projects, such as for the economics examples Bonakdarian chose. As long as the number of independent variables is relatively small, or the experimenter has a fairly clear idea of the possible underlying relationship, it is feasible to derive the best model using standard software packages and methodologies.

However, Bonakdarian cautioned that if the number of independent variables is large, and there is no intuitive sense about the possible relationship between these variables and the dependent variable, “the experimenter may have to go on an automated ‘fishing expedition’ to discover the important and relevant independent variables.”

As an alternative, Bonakdarian suggests using an evolutionary algorithm to “evolve” the best minimal subset of independent variables with the largest explanatory value.

“This approach offers more flexibility as the user can specify the exact search criteria on which to optimize the model,” he said. “The user can then examine a ranking of the top models found by the system. In addition to these measures, the algorithm can also be tuned to limit the number of variables in the final model. We believe that this ability to direct the search provides flexibility to the analyst and results in models that provide additional insights.”
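The article does not describe the algorithm's internals, so the following is only a minimal sketch of how an evolutionary search over variable subsets might look. Everything specific in it is an assumption: the bit-mask encoding, adjusted R-squared as the default search criterion, one-point crossover, bit-flip mutation, elitism, and all function and parameter names (`evolve`, `criterion`, `max_vars`, and so on). The `criterion` argument stands in for the user-specified search criterion, and `max_vars` for the limit on the number of variables.

```python
import random


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def adjusted_r2(X, y, mask):
    """Fit least squares on the masked columns plus an intercept; return adjusted R^2."""
    cols = [j for j, keep in enumerate(mask) if keep]
    n, k = len(y), len(cols)
    if k == 0 or n - k - 1 <= 0:
        return float("-inf")
    rows = [[1.0] + [X[i][j] for j in cols] for i in range(n)]
    p = k + 1
    xtx = [[sum(r[u] * r[v] for r in rows) for v in range(p)] for u in range(p)]
    xty = [sum(r[u] * yi for r, yi in zip(rows, y)) for u in range(p)]
    try:
        beta = solve(xtx, xty)  # normal equations
    except ValueError:
        return float("-inf")
    mean_y = sum(y) / n
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    if ss_tot == 0:
        return float("-inf")
    ss_res = sum((yi - sum(bj * rj for bj, rj in zip(beta, r))) ** 2
                 for r, yi in zip(rows, y))
    return 1.0 - (ss_res / (n - k - 1)) / (ss_tot / (n - 1))


def evolve(X, y, criterion=adjusted_r2, max_vars=None, pop_size=30,
           generations=40, mutation_rate=0.1, top_k=5, seed=0):
    """Evolve variable subsets; return up to top_k (mask, score) pairs, best first."""
    rng = random.Random(seed)
    n_vars = len(X[0])  # this sketch assumes at least two candidate variables
    cache = {}

    def fitness(mask):
        if mask not in cache:
            too_big = max_vars is not None and sum(mask) > max_vars
            cache[mask] = float("-inf") if too_big else criterion(X, y, mask)
        return cache[mask]

    pop = [tuple(rng.random() < 0.5 for _ in range(n_vars)) for _ in range(pop_size)]
    for _ in range(generations):
        ranked = sorted(set(pop), key=fitness, reverse=True)
        parents = ranked[: max(2, pop_size // 2)]
        next_pop = ranked[:2]  # elitism: the best models survive unchanged
        while len(next_pop) < pop_size:
            mom, dad = rng.choice(parents), rng.choice(parents)
            cut = rng.randrange(1, n_vars)  # one-point crossover
            child = mom[:cut] + dad[cut:]
            child = tuple((not g) if rng.random() < mutation_rate else g for g in child)
            next_pop.append(child)
        pop = next_pop
    ranked = sorted(set(pop), key=fitness, reverse=True)
    return [(m, fitness(m)) for m in ranked[:top_k] if fitness(m) != float("-inf")]


# Synthetic data (made up for this example): only variables 0 and 3 matter.
data_rng = random.Random(1)
X = [[data_rng.uniform(-1, 1) for _ in range(8)] for _ in range(60)]
y = [3 * row[0] - 2 * row[3] + data_rng.gauss(0, 0.1) for row in X]
for mask, score in evolve(X, y):
    print([j for j, keep in enumerate(mask) if keep], round(score, 4))
```

Passing a different `criterion` function changes what the search optimizes, and setting `max_vars` rejects any candidate that uses more variables than the cap. Note that adjusted R-squared penalizes extra variables only mildly, so the top-ranked models may still include a few irrelevant ones alongside the relevant ones.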

More information: cs.franklin.edu/~esmail/Papers … EM11_Bonakdarian.pdf

Provided by Ohio Supercomputer Center