Pushing black-hole mergers to the extreme: RIT scientists achieve 100:1 mass ratio

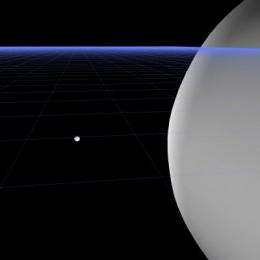

Scientists have simulated, for the first time, the merger of two black holes of vastly different sizes, with one mass 100 times larger than the other. This extreme mass ratio of 100:1 breaks a barrier in the fields of numerical relativity and gravitational wave astronomy.

Until now, the problem of simulating the merger of binary black holes with extreme size differences had remained an unexplored region of black-hole physics.

"Nature doesn't collide black holes of equal masses," says Carlos Lousto, associate professor of mathematical sciences at Rochester Institute of Technology and a member of the Center for Computational Relativity and Gravitation. "They have mass ratios of 1:3, 1:10, 1:100 or even 1:1 million. This puts us in a better situation for simulating realistic astrophysical scenarios and for predicting what observers should see and for telling them what to look for.

"Leaders in the field believed solving the 100:1 mass ratio problem would take five to 10 more years and significant advances in computational power. It was thought to be technically impossible."

"These simulations were made possible by advances both in the scaling and performance of relativity computer codes on thousands of processors, and advances in our understanding of how gauge conditions can be modified to self-adapt to the vastly different scales in the problem," adds Yosef Zlochower, assistant professor of mathematical sciences and a member of the center.

A paper announcing Lousto and Zlochower's findings was submitted for publication in Physical Review Letters.

The only prior simulation describing an extreme merger of black holes focused on a scenario involving a 1:10 mass ratio. Those techniques could not be extended to larger mass ratios, Lousto explained. To handle the larger mass ratios, he and Zlochower developed numerical and analytical techniques based on the moving puncture approach, a breakthrough created with Manuela Campanelli, director of the Center for Computational Relativity and Gravitation, that led to one of the first simulations of black holes on supercomputers in 2005.

The flexible techniques Lousto and Zlochower advanced for this scenario also translate to spinning binary black holes and to cases involving smaller mass ratios. These methods give the scientists ways to explore mass ratio limits and to model observational effects.

Lousto and Zlochower used resources at the Texas Advanced Computing Center, home to the Ranger supercomputer, to process the massive computations. The computer, which has 70,000 processors, took nearly three months to complete the simulation describing the most extreme-mass-ratio merger of black holes to date.

"Their work is pushing the limit of what we can do today," Campanelli says. "Now we have the tools to deal with a new system."

Simulations like Lousto and Zlochower's will help observational astronomers detect mergers of black holes with large mass differences using the future Advanced LIGO (Laser Interferometer Gravitational-wave Observatory) and the planned space-based observatory LISA (Laser Interferometer Space Antenna). Simulations of black-hole mergers provide blueprints or templates for observational scientists attempting to discern signatures of massive collisions. Observing and measuring gravitational waves created when black holes coalesce could confirm a key prediction of Einstein's general theory of relativity.

Provided by Rochester Institute of Technology