August 21, 2008 feature

Robots Detect Behavioral Cues to Follow Humans

Robots can be ironic. Even though they might not have emotions of their own, they can still detect and respond to humans’ emotions. A recent study has shown that, by picking up on human emotional traits, as well as a variety of other conscious and unconscious behavioral cues, robots may be able to interact more naturally and accurately with humans.

The researchers, from the University of California, Davis, have developed a system that allows follower robots to use behavioral cues from human leaders and other robots in order to track and follow them. The ability to follow will likely be essential as robots continue to work alongside people more and more, such as in office buildings, hospitals, and airports.

“As humans, we constantly incorporate other people's current actions as clues (cues) as to what they may do in the future,” Sanjay Joshi of the University of California, Davis, told PhysOrg.com. “For instance, we have a ‘sixth sense’ on the highway to know that a certain car will swerve into our lane soon, based on the driver's current driving patterns. Then, we may become more defensive in our own driving. In our work, we wanted to begin the process of allowing robots to use behavioral cues (of humans or other robots), to make the robot's mission more reliable and accurate. In social work environments populated by numerous people and robots, these types of cues should be abundant.”

In their robot-following system, the researchers combined information from behavioral cues with other tracking methods, such as cameras, to improve the performance of robot followers. The system continuously estimates the leader's future position as it moves, and then directs the follower robot to that predicted position.
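The predict-then-follow loop described above can be illustrated with a minimal sketch. The article does not say how the researchers estimate the leader's future position, so the constant-velocity extrapolation here is an assumption, and the names `Pose`, `predict_leader`, `steer_toward`, `max_speed`, and `turn_gain` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float        # metres
    y: float        # metres
    heading: float  # radians, 0 points along the +x axis


def predict_leader(prev, curr, dt, horizon):
    """Extrapolate the leader's (x, y) position `horizon` seconds ahead.

    Assumes constant velocity between the last two observations; this is an
    illustrative stand-in, not the estimator used in the study.
    """
    vx = (curr[0] - prev[0]) / dt
    vy = (curr[1] - prev[1]) / dt
    return (curr[0] + vx * horizon, curr[1] + vy * horizon)


def steer_toward(follower, target, max_speed=0.5, turn_gain=1.5):
    """Return (forward_speed, turn_rate) that moves the follower toward target."""
    dx = target[0] - follower.x
    dy = target[1] - follower.y
    distance = math.hypot(dx, dy)
    bearing = math.atan2(dy, dx)
    # Wrap the heading error into [-pi, pi] so the robot turns the short way.
    diff = bearing - follower.heading
    error = math.atan2(math.sin(diff), math.cos(diff))
    speed = min(max_speed, distance)  # slow down when close to the target
    return speed, turn_gain * error
```

Calling `predict_leader` on each new observation and passing the result to `steer_toward` gives the follower a target slightly ahead of where the leader currently is, which is the basic idea the article describes.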

The researchers’ aim was to reduce the number of instructions that human leaders must give to robots, and the technical expertise needed to give them. As the authors noted, robots may be accepted if they are helpful, but can easily be rejected if they are difficult to work with.

The researchers explained that the behavioral cues robots might use could include any action or signal from the leader that hints at a future action. These might be intended behaviors, such as pointing or waving. Other cues might be unconscious, such as behaviors that indicate stress or sadness, since these may signal generally quick or slow movement patterns. Also, studies on human walking have shown that people unconsciously turn their heads up to 25 degrees about 200 milliseconds before turning.
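As a rough illustration of how the head-turn cue could be used, the sketch below flags a likely turn when the head is rotated away from the body by more than a threshold. The function names, the sign convention, and the 15-degree threshold are hypothetical choices made for this example; only the "up to 25 degrees" and "about 200 milliseconds" figures come from the studies mentioned above.

```python
def detect_turn_cue(head_yaw_deg, body_yaw_deg, threshold_deg=15.0):
    """Return 'left', 'right', or None based on head yaw relative to the body.

    Positive angles are counterclockwise (left). The threshold is an assumed
    value chosen to sit below the reported 25-degree head turn.
    """
    # Wrap the difference into [-180, 180) so 350 and 10 degrees are 20 apart.
    offset = (head_yaw_deg - body_yaw_deg + 180.0) % 360.0 - 180.0
    if offset > threshold_deg:
        return "left"
    if offset < -threshold_deg:
        return "right"
    return None


def warning_distance(speed_m_per_s, lead_time_s=0.2):
    """Distance the leader covers between showing the cue and starting the turn."""
    return speed_m_per_s * lead_time_s
```

At a walking speed of 1 meter per second, a 200-millisecond lead corresponds to about 0.2 meters of travel, so the cue gives only a short warning and a follower would need to react to it quickly.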

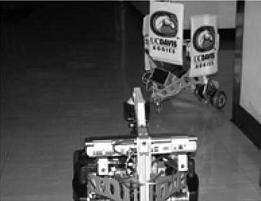

In experiments, the researchers tested how well a follower robot (Evolution Robotics’ Scorpion) could follow a leader robot (another Scorpion) as it zig-zagged and turned a corner of a hallway. Turning was the more difficult action to follow, since the leader robot escaped the sight of the follower robot. Without using behavioral cues, the follower robot would initiate a searching algorithm by turning and looking around. If the leader wasn’t too far away, the follower could detect it and continue following; otherwise, it would be lost and stop moving.

The addition of the behavioral-cue controller significantly helped the follower robot to keep track of the leader. By detecting the leader’s subtle behaviors, the follower could anticipate when the leader was about to turn and predict its future path. Even though the follower lost sight of the leader, it stayed close enough to the leader’s path that it could find the leader again after the “blind” turn.

Overall, the behavioral-cue model had advantages in cases where the leader robot made drastic turns that would otherwise leave the follower robot lost. But since other controllers also had advantages, the researchers suggest that a supervisory control system that coordinates multiple controllers could be useful. They also anticipate that a wide range of behavioral cues should lead to highly successful robot followers.
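The article does not describe how such a supervisory system would work, so the following is only one possible sketch of the idea: a simple priority rule that picks which controller drives the follower at each step. The `Mode` names, the `choose_mode` function, and the 5-second search timeout are all hypothetical.

```python
from enum import Enum


class Mode(Enum):
    VISUAL_TRACKING = "visual_tracking"  # leader is in view of the camera
    BEHAVIORAL_CUE = "behavioral_cue"    # follow the path predicted from cues
    SEARCH = "search"                    # turn and look around for the leader
    STOP = "stop"                        # leader considered lost


def choose_mode(leader_visible, cue_prediction_available,
                search_time_s, search_timeout_s=5.0):
    """Pick a controller using a fixed priority order (an assumed policy)."""
    if leader_visible:
        return Mode.VISUAL_TRACKING
    if cue_prediction_available:
        return Mode.BEHAVIORAL_CUE
    if search_time_s < search_timeout_s:
        return Mode.SEARCH
    return Mode.STOP
```

A fixed priority order is the simplest choice; a real supervisor might instead weigh each controller's confidence or blend their outputs.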

“In the future, we hope to explore relevant behavioral cues for other robot tasks in human-robot work environments, and work on the robotics and computer science tools needed to make effective use of those cues,” Joshi said.

More information: Chueh, Michael; Au Yeung, Yi Lin William; Lei, Kim-Pang Calvin; and Joshi, Sanjay S. “Following Controller for Autonomous Mobile Robots Using Behavioral Cues.” IEEE Transactions on Industrial Electronics, Vol. 55, No. 8, August 2008.

Copyright 2008 PhysOrg.com.
