E-science effort will try to tame data torrents

A growing number of scientific fields suffer from a stifling embarrassment of riches: data pile up much faster than they can be analyzed. A team of researchers at the University of Southern California's Information Sciences Institute is now building a prototype of a system that will address the problem by automating scientific workflows.

ISI computer scientist Yolanda Gil leads the newly funded $13.8 million Windward project, aiming at (in its full title) "Scaleable Knowledge Discovery through Grid Workflows."

Gil says that in fields like climatology, high-energy physics and seismic modeling, “our ability to gather data is surpassing our ability to analyze it. Our data warehouses are becoming data graveyards.”

In a sense, Windward will bring to the analysis of scientific problems an approach similar to that of industrial engineering, where engineers design optimal workflows that bring together raw material and machinery in the most efficient way to create a product.

The product in modern science is not a physical item like an automobile or computer, but rather a model, or an understanding. But efficient workflows to create it are equally critical — and, because the raw material is information, not matter, much more automatable.

Gil and ISI collaborator Ewa Deelman co-chaired an NSF workshop on the subject in May 2006.

"Significant scientific advances today are achieved through complex distributed scientific computations," their overview for this workshop noted. "These computations, often represented as workflows of executable jobs and their associated dataflow may be composed of thousands of steps that integrate diverse models and data sources."

The workshop held out the possibility that computer science could channel this waterfall of data into orchestrated workflows. It recommended "basic work in computer science to create a science of workflows," and suggested that scientists proactively build workflow architecture into their research plans: "workflow representations that capture scientific analysis at all levels should become the norm when complex distributed scientific computations are carried out."

Windward is an effort by Gil, who is principal investigator and project leader of the ISI Interactive Knowledge Capture research group; Deelman; and two fellow ISI project leaders, Paul Cohen and Carl Kesselman.

They believe they can accomplish this ambitious task by integrating two longtime ISI specialties, artificial intelligence and grid computing.

AI tries to give computers power to respond accurately and appropriately to changing and novel circumstances, bringing multiple concerns to bear on the problem of making the right choice from a number of alternatives.

Cohen will build on his work at the ISI Center for Research in Unexpected Events (CRUE), which has focused on AI systems for complex data analysis. He has worked on AI analysis of scientific data for years: he published papers on "Intelligent Assistance for Computational Scientists: Integrated Modeling, Experimentation, and Analysis" ten years ago, and his work on planning systems goes back even further.

He has also studied the history of science in certain fields to look for patterns in the process of discovery, work that underlies the approach.

In order for AI systems to automate processes and provide assistance to scientists in defining workflows of complex computations, they need to have the world carefully structured and described.

Gil has long been active in developing the semantic web, which creates a digital universe that AI can explore and understand, and which will be a building block of the Windward system.

Previous AI systems have operated at scales far smaller than the regional, national and even intercontinental data structures needed to do workflow science.

This is where grid computing, along with Deelman and Kesselman, comes in. Since 1996, Kesselman has been perfecting the Globus software, which gives multiple users in multiple locations secure, easy, and transparent access not just to raw data but also to the resources (computers) needed to process the data.

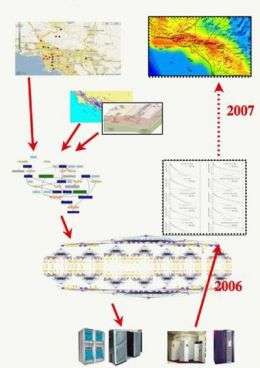

Linking to grid computing software, Deelman and her collaborators have developed a workflow management system, called Pegasus, that maps large numbers of computations to distributed resources while optimizing the overall performance of the application.
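To give a rough sense of what "mapping computations to distributed resources" can involve, here is a toy sketch in Python. It is not Pegasus code and does not use the Pegasus API; the job names, runtimes, site names, and the `map_workflow` helper are all hypothetical. It treats a workflow as a dependency graph and greedily assigns each job to whichever site can start it earliest.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def map_workflow(jobs, deps, sites):
    """Greedy toy scheduler (an illustration, not how Pegasus works).

    jobs:  {job name: estimated runtime}
    deps:  {job name: set of jobs that must finish first}
    sites: list of hypothetical compute site names
    Returns (assignment, makespan).
    """
    site_free = {s: 0.0 for s in sites}  # time at which each site is next idle
    finish = {}                          # finish time of each scheduled job
    assignment = {}

    # Visit jobs so that every prerequisite is scheduled before its dependents.
    graph = {job: deps.get(job, set()) for job in jobs}
    for job in TopologicalSorter(graph).static_order():
        # A job cannot start before all of its inputs have been produced.
        ready = max((finish[p] for p in deps.get(job, ())), default=0.0)
        # Pick the site offering the earliest start (ties go to the first site).
        site = min(sites, key=lambda s: max(site_free[s], ready))
        start = max(site_free[site], ready)
        finish[job] = start + jobs[job]
        site_free[site] = finish[job]
        assignment[job] = site

    return assignment, max(finish.values())


# Hypothetical four-step analysis: one extraction, two models, one merge.
jobs = {"extract": 2, "model_a": 3, "model_b": 4, "merge": 1}
deps = {
    "model_a": {"extract"},
    "model_b": {"extract"},
    "merge": {"model_a", "model_b"},
}
assignment, makespan = map_workflow(jobs, deps, ["site_x", "site_y"])
print(assignment, makespan)
```

In this example the two independent model runs land on different sites and execute in parallel, which is the kind of gain a workflow mapper looks for. A production system must also weigh data transfer costs, failures, and resource availability, none of which this sketch models.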

Deelman will continue to evolve Pegasus, which has already been used successfully in applications in astronomy, earthquake science, gravitational-wave physics, and other fields.

The AI and grid computing groups at ISI have been collaborating in the area of scientific workflows for several years now, with notable results in earthquake science in joint work with the Southern California Earthquake Center.

In the Windward project, they will develop new workflow techniques to represent complex algorithms and their subtle differences so that they can be automatically selected and configured to satisfy the stated application requirements.

They will also investigate mechanisms to support autonomous and robust execution of concurrent workflows over continuously changing data.

In addition, they will develop learning techniques to improve the performance of the workflow system by exploiting an episodic memory of prior workflow executions.

Source: University of Southern California