IBM Seeks to Build the Computer of the Future Based on Insights from the Brain

(PhysOrg.com) -- In an unprecedented undertaking, IBM Research and five leading universities are partnering to create computing systems that are expected to simulate and emulate the brain’s abilities for sensation, perception, action, interaction and cognition while rivaling its low power consumption and compact size.

The digital data explosion shows no signs of slowing down -- according to analyst firm IDC, the amount of digital data is growing at a mind-boggling 60 percent each year, giving businesses access to incredible new streams of information. But without the ability to monitor, analyze and react to this information in real time, the majority of its value may be lost. Until the data is captured and analyzed, decisions or actions may be delayed. Cognitive computing offers the promise of systems that can integrate and analyze vast amounts of data from many sources in the blink of an eye, allowing businesses or individuals to make rapid decisions in time to have a significant impact.

For example, bankers must make split-second decisions based on constantly changing data that flows at an ever-dizzying rate. And in the business of monitoring the world’s water supply, a network of sensors and actuators constantly records and reports metrics such as temperature, pressure, wave height, acoustics and ocean tide. In either case, making sense of all that input would be a Herculean task for one person, or even for 100. A cognitive computer, acting as a “global brain,” could quickly and accurately put together the disparate pieces of this complex puzzle and help people make good decisions rapidly.

By seeking inspiration from the structure, dynamics, function, and behavior of the brain, the IBM-led cognitive computing research team aims to break the conventional programmable machine paradigm. Ultimately, the team hopes to rival the brain’s low power consumption and small size by using nanoscale devices for synapses and neurons. This technology stands to bring about entirely new computing architectures and programming paradigms. The end goal: ubiquitously deployed computers imbued with a new intelligence that can integrate information from a variety of sensors and sources, deal with ambiguity, respond in a context-dependent way, learn over time and carry out pattern recognition to solve difficult problems based on perception, action and cognition in complex, real-world environments.

IBM and its collaborators have been awarded $4.9 million in funding from the Defense Advanced Research Projects Agency (DARPA) for the first phase of DARPA’s Systems of Neuromorphic Adaptive Plastic Scalable Electronics (SyNAPSE) initiative. IBM’s proposal, “Cognitive Computing via Synaptronics and Supercomputing (C2S2),” outlines groundbreaking research over the next nine months in areas including synaptronics, material science, neuromorphic circuitry, supercomputing simulations and virtual environments. Initial research will focus on demonstrating nanoscale, low-power, synapse-like devices and on uncovering the functional microcircuits of the brain. The long-term mission of C2S2 is to demonstrate low-power, compact cognitive computers that approach mammalian-scale intelligence.

“Exploratory research is in the fabric of IBM’s DNA,” said Josephine Cheng, IBM Fellow and vice president of IBM’s Almaden Research Center in San Jose. “We believe that our cognitive computing initiative will help shape the future of computing in a significant way, bringing to bear new technologies that we haven’t even begun to imagine. The initiative underscores IBM’s capabilities in bold, exploratory research and interest in powerful collaborations to understand the way the world works.”

IBM has assembled a multi-dimensional, integrated, world-class team of researchers and collaborators to take on the challenge, led by Dr. Dharmendra Modha, manager of IBM’s cognitive computing initiative. University collaborators include Stanford University (Professors Kwabena Boahen, H.-S. Philip Wong, Brian Wandell), University of Wisconsin-Madison (Professor Giulio Tononi), Cornell University (Professor Rajit Manohar), Columbia University Medical Center (Professor Stefano Fusi) and University of California-Merced (Professor Christopher Kello). IBM researchers include Dr. Stuart Parkin, Dr. Chung Lam, Dr. Bulent Kurdi, Dr. J. Campbell Scott, Dr. Paul Maglio, Dr. Simone Raoux, Dr. Rajagopal Ananthanarayanan, Dr. Raghav Singh, and Dr. Bipin Rajendran.

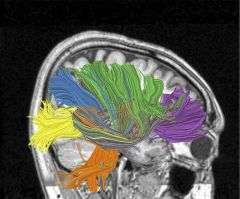

Recently, the IBM cognitive computing team demonstrated near-real-time simulation at the scale of a small mammal brain, using cognitive computing algorithms with the power of IBM’s BlueGene supercomputer. With this simulation capability, the researchers are experimenting with various mathematical hypotheses of brain function and structure as they work toward discovering the brain’s core computational micro- and macro-circuits.

In the past, the field of artificial intelligence research has focused on individual aspects of engineering intelligent machines. Cognitive computing, on the cutting edge of this line of research, seeks to engineer holistic intelligent machines that neatly tie together all of the pieces. IBM’s cognitive computing initiative was born out of its 2006 Almaden Institute, which annually brings together top minds to address fundamental challenges at the very edge of science and technology. IBM has a rich history in the area of artificial intelligence research, going all the way back to 1956, when IBM performed the world’s first large-scale (512 neuron) cortical simulation.

Provided by IBM