March 10, 2013 report

InSight team's wearable glass system identifies people by clothes

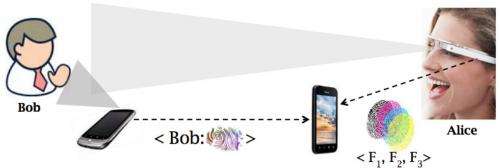

(Phys.org) - Researchers from the University of South Carolina and Duke are proposing a "visual fingerprint" app that can be used with smartphones and wearable camera displays such as Google Glass. Their paper, "Recognizing Humans without Face Recognition," explores techniques that jointly leverage phones and camera-enabled glasses, a product category still waiting in the wings, to pick out an individual based on what the person is wearing. The team behind the InSight project developed and tested a prototype system that recognizes people by their clothes and accessories. Once a person is identified, his or her name would be displayed on the headset.

The system was developed by Srihari Nelakuditi, associate professor of computer science and engineering at the University of South Carolina, along with three colleagues at Duke University: He Wang, Xuan Bao, and Romit Roy Choudhury.

How InSight works: A smartphone app creates a person's "fingerprint" by taking a series of pictures of the person. From these pictures the app creates a file called a spatiogram that records the colors and patterns of the person's clothing. The camera display then uses this combination of colors and patterns to identify the person. (The researchers define spatiograms as color histograms with spatial distributions encoded. Basic color histograms only capture the relative frequency of each color, but spatiograms also capture how these colors are distributed in 2-D space.)
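To make the difference between a histogram and a spatiogram concrete, here is a minimal Python sketch. It is not the InSight implementation: the function names, the coarse color quantization, and the tiny test images are all assumptions made for illustration, and only the mean position of each color is recorded (a fuller spatiogram would typically also track how widely each color is spread).

```python
from collections import defaultdict


def quantize(pixel, levels=4):
    """Map an (r, g, b) pixel with 0-255 channels to a coarse color bin."""
    step = 256 // levels
    return tuple(channel // step for channel in pixel)


def spatiogram(image, levels=4):
    """Return {color_bin: (fraction, mean_x, mean_y)} for a 2-D list of RGB pixels.

    `fraction` is the ordinary color-histogram value. `mean_x` and `mean_y`
    (normalized to the 0..1 range) add the spatial information.
    Simplified, hypothetical version: spread (covariance) is omitted.
    """
    height, width = len(image), len(image[0])
    sums = defaultdict(lambda: [0, 0.0, 0.0])  # count, sum of x, sum of y
    for y, row in enumerate(image):
        for x, pixel in enumerate(row):
            entry = sums[quantize(pixel, levels)]
            entry[0] += 1
            entry[1] += (x + 0.5) / width   # pixel center, normalized
            entry[2] += (y + 0.5) / height
    total = height * width
    return {
        color: (count / total, sx / count, sy / count)
        for color, (count, sx, sy) in sums.items()
    }


def histogram(image, levels=4):
    """Plain color histogram: the spatiogram with positions thrown away."""
    return {color: frac for color, (frac, _, _) in spatiogram(image, levels).items()}


# Two made-up 4x4 "outfits": red top with blue trousers, and the reverse.
RED, BLUE = (200, 30, 30), (30, 30, 200)
red_over_blue = [[RED] * 4] * 2 + [[BLUE] * 4] * 2
blue_over_red = [[BLUE] * 4] * 2 + [[RED] * 4] * 2

same_histogram = histogram(red_over_blue) == histogram(blue_over_red)
same_spatiogram = spatiogram(red_over_blue) == spatiogram(blue_over_red)
print(same_histogram, same_spatiogram)
```

Both outfits contain the same amounts of red and blue, so their histograms are identical, but the spatiograms differ because the colors sit in different places.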

What's the point of the app? One use would be helping a person find friends in crowded places such as airports and arenas, where people become separated from each other or have their backs turned so that faces cannot easily be seen.

The researchers suggest another use case where "Alice" may look at people around her in a social gathering and see the name of each individual, like a virtual badge, suitably overlaid on her wearable display.

What's the limitation? Once the individual changes clothes or accessories, InSight identification is impossible. A fingerprint would therefore be useful for a day, for example, or part of a day.

To develop their prototype, the researchers used PivotHead camera-enabled glasses and Samsung Galaxy phones running Android. They conducted experiments with 15 users, asking participants to actively use their smartphones. InSight could identify people 93 per cent of the time, even when they had their backs turned. The researchers' key intent in this exercise was to pursue the hypothesis that colors and patterns on clothes may serve as a human "fingerprint." Their investigation suggested that clothes can be matched with reasonable accuracy. Their work is ongoing, and will focus on incremental deployment, they said, as well as on exploring motion patterns for cases when "visual fingerprints" are not unique. "We believe there is promise, and are committed to building a fuller, real-time, system."

More information: InSight: Recognizing Humans without Face Recognition, by He Wang et al.: synrg.ee.duke.edu/papers/insight-final.pdf

© 2013 Phys.org