May 25, 2012 report

Computers excel at identifying smiles of frustration

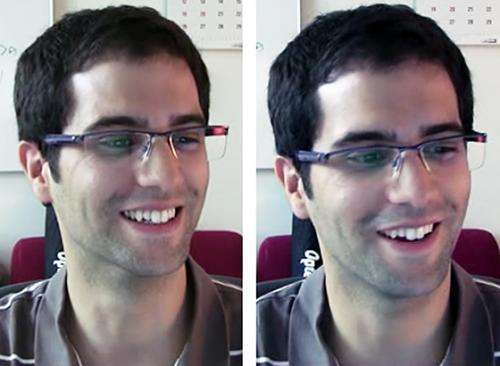

(Phys.org) -- Researchers at the Massachusetts Institute of Technology (MIT) in the US have trained computers to recognize smiles, and the computers have turned out to be better than humans at recognizing smiles of frustration.

The scientists, led by M. Ehsan Hoque, used a webcam to film subjects as they filled out a long form, which was automatically deleted when the subjects pressed Submit. Most (90%) of the subjects smiled in frustration, but when asked to assume a frustrated expression, the same percentage did not smile.

The researchers also studied “happiness” smiles, produced when subjects were shown cute videos of babies, and asked the subjects to adopt a happy smile without an emotional prompt. Volunteers were asked to interpret images of people either genuinely smiling or faking a smile of delight or frustration.

The researchers, from the MIT Media Lab, developed an algorithm to track the movements made by muscle groups producing various facial expressions and quantified the facial features using the Facial Action Coding System (FACS).

FACS was developed in the 1970s and has become the most widely used system in facial expression analysis and for people needing to understand facial behaviours. To use FACS, researchers decompose an expression into “Action Units,” and they may also record any asymmetry, as well as the intensity and duration of the expression.
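As a rough illustration of what such a coding might look like in software, the sketch below stores each Action Unit with its intensity, timing and optional asymmetry. The class and function names are hypothetical and are not taken from the MIT study; the only FACS facts assumed are that intensity is graded from A (trace) to E (maximum), that AU 6 is the cheek raiser and that AU 12 is the lip corner puller.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

# FACS grades intensity from A (trace) to E (maximum).
INTENSITY_GRADES = ("A", "B", "C", "D", "E")


@dataclass(frozen=True)
class ActionUnitEvent:
    """One coded facial movement (hypothetical record layout)."""
    au: int                      # action unit number, e.g. 12 = lip corner puller
    intensity: str               # one of "A".."E"
    onset_s: float               # when the movement starts, in seconds
    offset_s: float              # when the movement ends, in seconds
    side: Optional[str] = None   # "L" or "R" if one-sided, None if bilateral

    def __post_init__(self) -> None:
        if self.intensity not in INTENSITY_GRADES:
            raise ValueError(f"intensity must be one of {INTENSITY_GRADES}")
        if self.side not in (None, "L", "R"):
            raise ValueError("side must be 'L', 'R' or None")
        if self.offset_s < self.onset_s:
            raise ValueError("offset_s must not precede onset_s")

    @property
    def duration_s(self) -> float:
        return self.offset_s - self.onset_s


def describe(events: Iterable[ActionUnitEvent]) -> str:
    """Join events into a compact FACS-like string, ordered by AU number."""
    ordered = sorted(events, key=lambda e: e.au)
    return "+".join(f"{e.side or ''}{e.au}{e.intensity}" for e in ordered)


# Made-up example: a smile coded as cheek raiser (6) plus lip corner puller (12).
smile = [
    ActionUnitEvent(au=12, intensity="C", onset_s=0.0, offset_s=1.5),
    ActionUnitEvent(au=6, intensity="B", onset_s=0.2, offset_s=1.4),
]
print(describe(smile))
```

Keeping onset and offset times on each record, rather than a single duration, makes it possible to ask later how quickly an expression developed.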

Smiles that are easily identified as insincere or “fake” are made using only the zygomaticus major muscles, which are voluntary muscles that lift the corners of the mouth. In genuine smiles (known as Duchenne smiles) the corners of the mouth rise, but involuntary muscles also raise the cheeks and produce small wrinkles around the eyes. The study found that genuine frustrated and happy smiles differ primarily in their timing, with frustrated smiles appearing more quickly than smiles of delight.
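The two distinctions in the paragraph above can be sketched in a few lines of code. This is not the researchers' algorithm: the function names, the 10 percent onset threshold and the sample traces are all assumptions made for illustration. The sketch treats a smile as Duchenne when the cheek raiser (AU 6) accompanies the lip corner puller (AU 12), and measures how quickly a smile builds from onset to peak.

```python
from typing import Sequence, Set

AU_CHEEK_RAISER = 6        # raises the cheeks, wrinkles the eye corners
AU_LIP_CORNER_PULLER = 12  # lifts the corners of the mouth


def is_duchenne(active_aus: Set[int]) -> bool:
    """True when the mouth and the cheek/eye region are both engaged."""
    return AU_LIP_CORNER_PULLER in active_aus and AU_CHEEK_RAISER in active_aus


def rise_time_s(intensity: Sequence[float], fps: float,
                onset_fraction: float = 0.1) -> float:
    """Seconds from smile onset to peak intensity.

    Onset is the first frame at or above onset_fraction of the peak;
    that threshold is an illustrative choice, not a published value.
    """
    if not intensity:
        raise ValueError("intensity trace is empty")
    if fps <= 0:
        raise ValueError("fps must be positive")
    peak = max(intensity)
    if peak <= 0:
        raise ValueError("trace contains no smile")
    peak_idx = list(intensity).index(peak)
    threshold = onset_fraction * peak
    onset_idx = next(i for i, v in enumerate(intensity) if v >= threshold)
    return (peak_idx - onset_idx) / fps


# Made-up per-frame smile intensities sampled at 10 frames per second.
quick_smile = [0.0, 0.5, 1.0, 0.4, 0.0]
gradual_smile = [0.0, 0.1, 0.3, 0.5, 0.7, 0.9, 1.0]

print(is_duchenne({6, 12}), is_duchenne({12}))
print(rise_time_s(quick_smile, fps=10), rise_time_s(gradual_smile, fps=10))
```

A real system would have to estimate the per-frame intensities from video first; here they are simply typed in so that the timing comparison is easy to follow.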

The computer algorithm identified smiles of frustration more than 90 percent of the time, while human subjects performed below chance. Hoque said the computer probably performs better because it focuses solely on the mechanical features of the smile and breaks them down into action units, while humans rely on other cues, such as how quickly the smile is produced, to determine the emotion behind it. The study also showed that most people do not expect a smile in response to frustration.

The findings, published in IEEE Transactions on Affective Computing, might be useful in training children with autism to identify the different kinds of smiles, and they might also find application in programming computers to respond to the mood of their users.

Journal information: IEEE Transactions on Affective Computing

© 2012 Phys.Org