March 14, 2012 feature

Software automatically transforms movie clips into comic strips

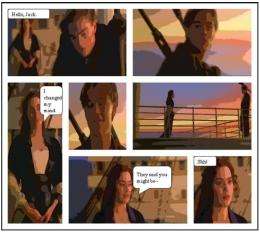

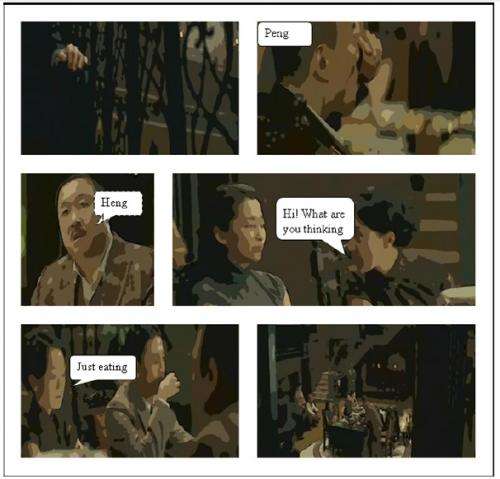

(PhysOrg.com) -- While some comics today are still drawn by hand, many modern cartoonists use a variety of digital tools to create comics. But even with the help of these tools, creating comics is a time-consuming task that requires many hours of human work. In a new study, a team of researchers has designed a program that can automatically transform movie scenes into comic strips, without the need for any human intervention.

Meng Wang, a professor at the Hefei University of Technology, China, and coauthors have published their study on the new software, which they call “Movie2Comics,” in a recent issue of IEEE Transactions on Multimedia.

“The software can be useful for both professionals and hobbyists,” Wang told PhysOrg.com. “Professionals can directly use the software to generate comics (or integrate their interaction to achieve more impressive results); hobbyists may have interests to try it to see what will be generated from different movie clips.”

As the researchers explain in their study, previous programs have been developed to assist cartoonists in converting movies into comics, but the new method is the first fully automated approach. Without the need for any manual intervention, the method has the potential to significantly cut the time and expense associated with creating comics.

The new cartoonization process involves several steps: running a script-face mapping algorithm that identifies the speaking character in scenes with multiple characters, generating comic panels of different sizes, positioning word balloons, and rendering movie frames in a cartoon style. All of these steps are performed automatically.
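To make the word-balloon step more concrete, here is a minimal Python sketch of one way a balloon could be positioned beside a speaker's face. It is not the researchers' algorithm, which the article does not describe in detail. The `Box` type, the `place_balloon` function, the `gap` parameter, and the rule of choosing the side with more free space are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned rectangle in panel pixel coordinates (hypothetical type)."""
    x: int
    y: int
    w: int
    h: int


def place_balloon(face: Box, balloon_w: int, balloon_h: int,
                  panel_w: int, panel_h: int, gap: int = 10) -> Box:
    """Place a balloon beside a face, on the side with more free space.

    Illustrative heuristic only; the paper's placement rule may differ.
    """
    space_right = panel_w - (face.x + face.w)
    space_left = face.x
    if space_right >= space_left:
        x = face.x + face.w + gap      # balloon to the right of the face
    else:
        x = face.x - gap - balloon_w   # balloon to the left of the face
    # Start the balloon half its height above the top of the face.
    y = face.y - balloon_h // 2
    # Clamp so the balloon stays inside the panel. In a very tight panel
    # this can make the balloon overlap the face.
    x = max(0, min(x, panel_w - balloon_w))
    y = max(0, min(y, panel_h - balloon_h))
    return Box(x, y, balloon_w, balloon_h)


if __name__ == "__main__":
    speaker_face = Box(x=50, y=100, w=80, h=80)
    print(place_balloon(speaker_face, 200, 60, panel_w=640, panel_h=360))
```

A placement rule like this depends entirely on knowing which face belongs to the speaker, which is why the script-face mapping step comes first.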

The researchers used the new method to transform 15 movie clips into comic strips. The clips came from three movies – “Titanic,” “Sherlock Holmes,” and “The Message” – and varied in length from 2 to 7 minutes. Although the method performed the transformation well for the most part, it sometimes placed word balloons next to the faces of the wrong characters. The script-face mapping algorithm had an accuracy of 85%, which the researchers hope to improve.
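The article does not say exactly how the 85% figure was computed. One plausible reading is the fraction of dialogue lines attributed to the correct character, and the short Python sketch below shows that calculation. The `mapping_accuracy` function and the toy speaker labels are hypothetical and are not data from the study.

```python
def mapping_accuracy(predicted, actual):
    """Fraction of dialogue lines assigned to the correct speaker.

    Assumes one predicted and one true speaker label per dialogue line.
    """
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("need at least one dialogue line")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


if __name__ == "__main__":
    # Toy example: 20 dialogue lines alternating between speakers A and B.
    actual = ["A", "B"] * 10
    predicted = list(actual)
    # Introduce three deliberate mistakes (made-up, for illustration only).
    predicted[2] = "B"
    predicted[7] = "A"
    predicted[15] = "A"
    print(f"{mapping_accuracy(predicted, actual):.0%}")  # 17 of 20 correct
```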

The researchers then conducted a user evaluation to see how well users understood and enjoyed the comics. They found that users understood the content of the comics slightly less well than that of the original movie clips. Although some loss in comprehension is inevitable when converting to a different format, the script-face mapping errors also played a role. As for enjoyment, the evaluations showed that it was highest when the comics included word balloons and effective layout and stylization.

Although the technique is capable of performing all steps automatically, the researchers noted that involving some human effort could lead to even better results. In such a scenario, the software would provide recommendations for each step of the transformation process, and humans could manually adjust the results much more quickly and efficiently than with purely manual methods. But the researchers also hope to improve the accuracy of the automated method.

“We have two future plans,” Wang said. “One, improve the performance of each component, such as script-face mapping, and hope we can generate perfect clips without user interaction. Two, integrate speech recognition technology to generalize the software, such that we can generate comics without movie scripts (currently we need the script file of the movie clip, but by using speech recognition we can automatically transform speech to texts).”

More information: Meng Wang, et al. “Movie2Comics: Towards a Lively Video Content Presentation.” IEEE Transactions on Multimedia. DOI: 10.1109/TMM.2012.2187181

Copyright 2012 PhysOrg.com.
