Pittsburgh symposium answers: What is Watson?

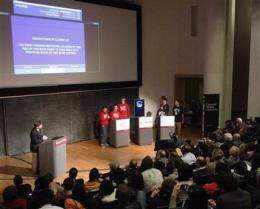

(AP) -- Six university students attempted to match wits with IBM's "Jeopardy!"-playing computer Wednesday and lost badly in a mock game show. But the competition was hardly the point of a daylong symposium meant to answer an appropriate question: What is Watson?

Watson is IBM's stab at advancing artificial intelligence by creating a machine that can recognize words using complex mathematical formulas. The algorithms can deduce probable characteristics and meanings of words based on the context in which they are used and other clues.

"Language is difficult for computers because they're not human," David Ferrucci, IBM's lead Watson researcher, told a packed auditorium at Carnegie Mellon University before the demonstration.

"People have this model of how computers work and often it's the model of 'looking something up,'" Ferrucci said. What Watson's creators didn't do - and couldn't do - was anticipate all of the possible answers-and-questions the machine might encounter in a game show.

As such, Watson isn't simply loaded with oodles of trivia that the computer sorts through at light speed. Instead, Watson looks at "Jeopardy!" answers and formulates correct questions based on contextual clues, including the category. It even has formulas that can recognize "pun relationships" between words, Ferrucci said.

When Watson comes up with an answer, the computer is not spitting out information but rather offering a percentage-based opinion that the words in its "Jeopardy!" question are "correct." That may be what makes Watson most valuable if and when the technology is applied to other problem-solving fields including law, business, computer science and engineering.
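The idea of a "percentage-based opinion" can be illustrated with a short sketch. The article does not describe Watson's actual scoring formulas, so everything here is an assumption for illustration: the function name `rank_candidates`, the made-up evidence scores, and the use of a softmax to turn scores into percentages.

```python
import math

def rank_candidates(scores):
    """Convert raw evidence scores into confidences that sum to 1, best first.

    Softmax is an illustrative choice, not Watson's documented method.
    """
    peak = max(scores.values())  # subtract the peak for numerical stability
    weights = {cand: math.exp(s - peak) for cand, s in scores.items()}
    total = sum(weights.values())
    ranked = [(cand, w / total) for cand, w in weights.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)

# Hypothetical evidence scores for one clue (invented for this example).
scores = {
    "What is the Danube?": 2.0,
    "What is the Rhine?": 1.0,
    "What is the Volga?": 0.0,
}
ranked = rank_candidates(scores)
best_question, best_confidence = ranked[0]
print(f"{best_question} ({best_confidence:.0%} confident)")
```

The point of the sketch is that the output is a ranked list of guesses with a confidence attached to each, rather than a single looked-up fact.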

Watson's creators believe its probability-based approach also holds promise as a medical diagnostic tool, and they've already signed a deal to do real-world tests at Columbia University Medical Center and the University of Maryland School of Medicine.

The stakes there are high and that's another reason the computer was designed to play "Jeopardy!" - a highly competitive game in which Watson is rewarded for right answers and punished for wrong ones.

The computer also can track how much money it has won compared with its human opponents. Watson will sometimes pass on offering a question if it determines the amount of money it could lose isn't worth the computer's level of uncertainty about being correct.
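The decision to pass can be sketched as a simple expected-value check. This is a minimal illustration, not IBM's method: the function names, the `caution` parameter and the rule for protecting a lead are all assumptions made for the example.

```python
def expected_gain(confidence, clue_value):
    """Average dollars won per attempt: win clue_value if right, lose it if wrong."""
    return confidence * clue_value - (1.0 - confidence) * clue_value

def should_buzz(confidence, clue_value, lead, caution=0.1):
    """Decide whether to offer a question or pass.

    lead: the player's score minus the nearest opponent's score.
    caution: hypothetical extra confidence demanded when a miss
             would wipe out a narrow lead.
    """
    threshold = 0.5  # expected_gain is positive only above 50% confidence
    if 0 <= lead < clue_value:
        threshold += caution  # a wrong answer here would erase the lead
    return confidence > threshold

print(should_buzz(0.90, 1000, lead=5000))  # confident, comfortable lead
print(should_buzz(0.55, 1000, lead=500))   # shaky, and a miss erases the lead
```

Under these assumptions, the same 55 percent confidence is worth a try with a big lead but not when a wrong answer would put the player behind.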

That didn't prove to be much of a problem on Wednesday. The computer has won 71 percent of 55 games it has played against "Jeopardy!" TV champions, and made mincemeat out of three-member teams from Carnegie Mellon and the neighboring University of Pittsburgh.

Watson racked up $52,199 in pretend cash by providing questions to answers in categories including "International Food," "European Bodies of Water" and "Its Reigning Men" (which provided answers to questions about historical monarchs).

Pitt finished a distant second with $12,937, and Carnegie Mellon came in third with $7,463.

Will Zhang, 20, a computer science major who headed Carnegie Mellon's team, was blown away by Watson's speed. The junior from Carmel, Ind., expressed frustration when the mock game show host asked the human contestants, "You guys feeling OK over there?" as Watson raced out to a large lead.

"Not cool!" Zhang replied, laughing.

"It was pretty difficult. I knew I had absolutely no chance against Watson," Zhang said afterward, before expressing admiration for Watson and the language issue its creators are trying to solve.

"I think it's really amazing to be able to work on a problem that nobody's really tackled before," Zhang said.

©2010 The Associated Press. All rights reserved. This material may not be published, broadcast, rewritten or redistributed.