San Francisco prosecutors turn to AI to reduce racial bias

In a first-of-its-kind experiment, San Francisco prosecutors are turning to artificial intelligence to reduce racial bias in the courts, adopting a system that strips certain identifying details from police reports and leaves only key facts to govern charging decisions.

District Attorney George Gascon announced Wednesday that his office will begin using the technology in July to "take race out of the equation" when deciding whether to accuse suspects of a crime.

Criminal-justice experts say they have never heard of any project like it, and they applauded the idea as a creative, bold effort to make charging practices more colorblind.

Gascon's office worked with data scientists and engineers at the Stanford Computational Policy Lab to develop a system that takes electronic police reports and automatically removes a suspect's name, race and hair and eye colors. The names of witnesses and police officers will also be removed, along with specific neighborhoods or districts that could indicate the race of those involved.
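As a rough illustration of the kind of redaction described above, the following is a minimal rule-based sketch in Python. It is not the Stanford Computational Policy Lab's system, whose internals are not described here. The function name `redact_report`, the term lists, and the placeholder labels are all hypothetical, and simple pattern matching like this would miss many of the contextual cues a real tool would need to handle.

```python
import re

# Hypothetical term lists for illustration only; not taken from the actual system.
RACE_TERMS = ["black", "white", "hispanic", "latino", "asian"]
COLOR_TERMS = ["brown", "black", "blond", "blonde", "red", "gray", "blue", "green", "hazel"]


def redact_report(text, names=None, locations=()):
    """Return a copy of `text` with identifying details replaced by placeholders.

    `names` maps a name as it appears in the report to a role label
    (for example {"John Doe": "SUSPECT"}); `locations` lists neighborhood
    or district names. Both are assumed to come from the report's
    structured fields.
    """
    names = names or {}

    # Replace known names, longest first so full names win over partial ones.
    for name in sorted(names, key=len, reverse=True):
        label = names[name]
        pattern = r"\b" + re.escape(name) + r"\b"
        text = re.sub(pattern, lambda m: f"[{label}]", text, flags=re.IGNORECASE)

    # Replace neighborhoods or districts that could hint at race.
    for place in locations:
        pattern = r"\b" + re.escape(place) + r"\b"
        text = re.sub(pattern, "[LOCATION]", text, flags=re.IGNORECASE)

    # Redact hair and eye color before race terms, since "black hair"
    # would otherwise be ambiguous.
    colors = "|".join(COLOR_TERMS)
    text = re.sub(rf"\b(?:{colors})\s+(hair|eyes)\b", r"[REDACTED] \1",
                  text, flags=re.IGNORECASE)

    # Redact race terms only when they directly describe a person.
    races = "|".join(RACE_TERMS)
    text = re.sub(rf"\b(?:{races})\s+(male|female|man|woman)\b", r"[RACE] \1",
                  text, flags=re.IGNORECASE)

    return text


report = ("Officer Lee stopped John Doe, a white male with brown hair "
          "and blue eyes, near the Mission District.")
redacted = redact_report(
    report,
    names={"John Doe": "SUSPECT", "Lee": "OFFICER 1"},
    locations=["Mission District"],
)
print(redacted)
```

Keeping role labels such as `[SUSPECT]` and `[OFFICER 1]` instead of blanking the names is a design choice made for this sketch so that the narrative stays readable after redaction.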

"The criminal-justice system has had a horrible impact on people of color in this country, especially African Americans, for generations," Gascon said in an interview ahead of the announcement. "If all prosecutors took race out of the picture when making charging decisions, we would probably be in a much better place as a nation than we are today."

Gascon said his goal was to develop a model that could be used elsewhere, and the technology will be offered free to other prosecutors across the country.

"I really commend them, it's a brave move," said Lucy Lang, a former New York City prosecutor and executive director of the Institute for Innovation in Prosecution at John Jay College of Criminal Justice.

The technology relies on humans to collect the initial facts, which can still be influenced by racial bias. Prosecutors will make an initial charging decision based on the redacted police report. Then they will look at the entire report, with details restored, to see if there are any extenuating reasons to reconsider the first decision, Gascon said.

Lang and other experts said they look forward to seeing the results and that they expect the system to be a work in progress.

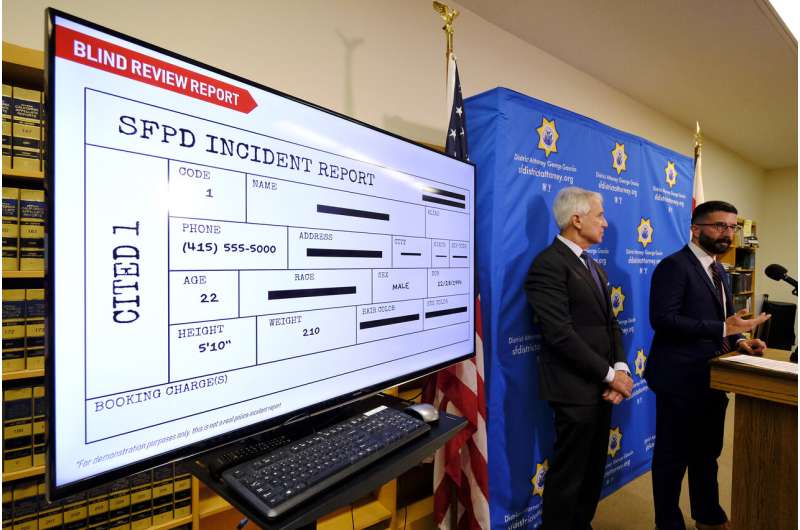

San Francisco District Attorney George Gascon, left, announces the implementation of an artificial intelligence tool to remove potential for bias in charging decisions as Alex Chohlas-Wood, Deputy Director, Stanford Computational Policy Lab, listens during a news conference Wednesday, June 12, 2019, in San Francisco. In a first-of-its-kind experiment, San Francisco prosecutors are turning to artificial intelligence to reduce bias in the criminal courts. (AP Photo/Eric Risberg)

In this Tuesday, Feb. 16, 2016, file photo, San Francisco District Attorney George Gascon is shown at a news conference in San Francisco. On Wednesday, June 12, 2019, Gascon is announcing what appears to be a first-of-its-kind program using artificial intelligence to lessen bias in the criminal justice system. Starting next month, prosecutors will decide whether to charge a suspect based on "color blind" police reports in which names, race and other identifying details have been removed. (AP Photo/Jeff Chiu)

"Hats off for trying new stuff," said Phillip Atiba Goff, president of the Center for Policing Equity. "There are so many contextual factors that might indicate race and ethnicity that it's hard to imagine how even a human could take that all out."

A 2017 study commissioned by the San Francisco district attorney found "substantial racial and ethnic disparities in criminal justice outcomes." African Americans represented only 6% of the county's population but accounted for 41% of arrests between 2008 and 2014.

The study found "little evidence of overt bias against any one race or ethnic group" among prosecutors who process criminal offenses. But Gascon said he wanted to find a way to help eliminate implicit bias that could be triggered by a suspect's race, an ethnic-sounding name or the crime-ridden neighborhood where the suspect was arrested.

After it begins, the program will be reviewed weekly, said Maria McKee, the DA's director of analytics and research.

The move comes after San Francisco last month became the first U.S. city to ban the use of facial recognition by police and other city agencies. The decision reflected a growing backlash against AI technology as cities seek to regulate surveillance by municipal agencies.

© 2019 The Associated Press. All rights reserved.