July 4, 2013 weblog

Indiana University student offers Harlan programming language for GPUs

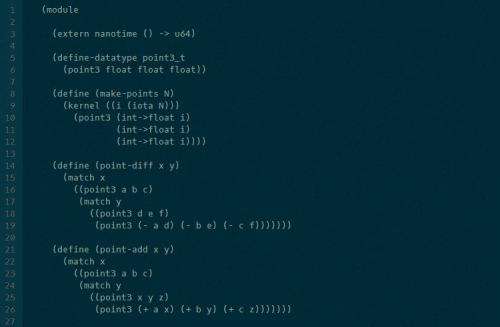

(Phys.org) A doctoral candidate in computer science has released Harlan, a new programming language built to leverage the computing power of a GPU. His contribution may turn a corner in working with GPU applications: the language is dedicated entirely to building applications that run on GPUs. The Harlan creator is Eric Holk of Indiana University. As a doctoral candidate, his interests, he said, focus on designing and implementing programming languages that ease the production of reliable software that performs well. That is easier said than done, some may argue, when GPUs are involved.

Tech sites reviewing his achievement say he has taken on quite a challenge. Programming for GPUs, as ExtremeTech put it, calls for a type of programmer who is willing to spend "a lot of brain cycles dealing with low-level details which distract from the main purpose of the code." Holk stuck to it, attempting to answer his own question: What if a language could be built up from scratch, designed from the start to support GPU programming? Harlan is special in that it can take care of the "grunt work" of GPU programming.

A few key points about Harlan: (1) It compiles to OpenCL and can make use of the higher-level languages Python and Ruby. (2) Its syntax is based on Scheme, a dialect of Lisp. Talk of Scheme leads to Scheme implementations: Petite Chez Scheme is available for download. Chez Scheme is an implementation of Scheme built on an incremental optimizing compiler that produces code quickly. Petite Chez Scheme is compatible with Chez Scheme but uses a fast interpreter in place of the compiler. It was conceived as a runtime environment for compiled Chez Scheme applications, but it can also be used as a standalone Scheme system. (3) Harlan runs on Mac OS X 10.6 (Snow Leopard), Mac OS X 10.7 (Lion), Mac OS X 10.8 (Mountain Lion), and "various flavors" of Linux. The project's GitHub page describes Harlan as "a declarative, domain specific language for programming GPUs." According to the site, OpenCL implementations that should work include the Intel OpenCL SDK, the NVIDIA CUDA Toolkit, and the AMD Accelerated Parallel Processing (APP) SDK.

Holk announced on his blog that Harlan is now available to the public, the result of about two years of work. "Harlan," he stated, "aims to push the expressiveness of languages available for the GPU further than has been done before." He made note of its native support for rich data structures, including trees and ragged arrays.
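To give a sense of the "grunt work" that native ragged-array support could spare a programmer, here is a minimal sketch of the bookkeeping often done by hand when rows of different lengths must be packed into one flat buffer. It is written in Python purely for illustration; it is not Harlan code and does not describe how Harlan's compiler lays out data. The function names `flatten_ragged` and `row_sums` are hypothetical.

```python
def flatten_ragged(rows):
    """Pack variable-length rows into one flat list plus row offsets.

    Row i occupies values[offsets[i]:offsets[i + 1]].
    """
    values = []
    offsets = [0]
    for row in rows:
        values.extend(row)
        offsets.append(len(values))
    return values, offsets


def row_sums(values, offsets):
    """Sum each row using only the flat buffer and the offsets."""
    return [
        sum(values[offsets[i]:offsets[i + 1]])
        for i in range(len(offsets) - 1)
    ]


# A ragged array: rows of length 3, 1, 0, and 2.
ragged = [[1, 2, 3], [4], [], [5, 6]]
values, offsets = flatten_ragged(ragged)
sums = row_sums(values, offsets)
```

Every operation on the flat form has to carry the offsets along and index through them, which is the kind of low-level detail a higher-level language can hide behind an ordinary nested-array type.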

More information:

github.com/eholk/harlan

blog.theincredibleholk.org/blo … e-release-of-harlan/

© 2013 Phys.org