May 29, 2008 feature

Intelligent Computers See Your Human Traits

Today’s computers are capable of a great deal of computation, but they tend to do it in an impersonal, standoffish way. Computer engineers are working to change that by giving computers a personal touch that makes human-computer interaction more natural and friendly.

For instance, two studies from a recent issue of IEEE Transactions on Multimedia have investigated enabling computers to recognize users’ emotional states and ages. The researchers hope that tomorrow’s computers will be able to “look” at a human face and extract this type of information, much like humans do with each other.

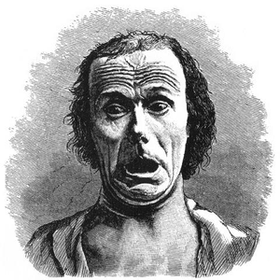

Emotional Detection

By combining audio and visual data, Yongjin Wang from the University of Toronto and Ling Guan from Ryerson University in Toronto have developed a system that recognizes six human emotional states: happiness, sadness, anger, fear, surprise, and disgust. Their system can recognize emotions in people from different cultures who speak different languages, with a success rate of 82%.

“Human-centered computing focuses on understanding humans, including recognition of face, emotions, gestures, speech, body movements, etc.,” Wang told PhysOrg.com. “Emotion recognition systems help the computer to understand the affective state of the user, and hence the computer can respond accordingly based on that perception.”

The researchers’ system extracted a large number of vocal characteristics, such as “prosodic features,” which include the rhythm, intensity, rate, and frequency of speech. Facial features were extracted holistically. Then, the researchers trained the system on several short video samples of individuals showing different emotions, from which it connected certain features with emotions.
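To make two of the prosodic features named above more concrete, here is a minimal sketch of how intensity and frequency might be measured on a single short frame of audio. It is not the authors' extraction method: the function names, the 75 to 400 Hz search range, and the synthetic test tone are all assumptions chosen for illustration.

```python
import math
from typing import List, Sequence


def rms_intensity(frame: Sequence[float]) -> float:
    """Root-mean-square amplitude of one frame, a simple loudness measure."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))


def autocorr_pitch_hz(
    frame: Sequence[float],
    sample_rate: int,
    min_hz: float = 75.0,   # assumed lower bound for speech pitch
    max_hz: float = 400.0,  # assumed upper bound for speech pitch
) -> float:
    """Estimate the fundamental frequency from the strongest autocorrelation lag."""
    min_lag = int(sample_rate / max_hz)
    max_lag = int(sample_rate / min_hz)

    def autocorr(lag: int) -> float:
        # Similarity between the frame and a copy of itself shifted by `lag`.
        return sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))

    best_lag = max(range(min_lag, max_lag + 1), key=autocorr)
    return sample_rate / best_lag


# Hypothetical input: 50 ms of a 200 Hz tone sampled at 8 kHz.
SAMPLE_RATE = 8000
tone: List[float] = [
    0.5 * math.sin(2 * math.pi * 200 * n / SAMPLE_RATE) for n in range(400)
]

intensity = rms_intensity(tone)
pitch = autocorr_pitch_hz(tone, SAMPLE_RATE)
```

A real system would compute values like these over many overlapping frames and summarize them (for example, by mean and range) before passing them to a classifier.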

As Wang and Guan explained, emotional representation is very diverse: some vocal and facial features may play an important role in characterizing certain emotions, but only a minimal role in others. As a general example, happiness is detected better using certain visual features (e.g., smiling), while anger is detected better using audio features (e.g., yelling). The researchers found that no single feature plays a significant role in all six emotions. While this finding shows that there are no sharp boundaries between emotions, it also makes it difficult for computers to tell emotions apart.

To approach this challenge, Wang and Guan used a step-by-step method, which added one feature at a time and then removed one at a time to find the most relevant features for identifying the unknown emotion. Then they used a multi-classifier scheme as a “divide and conquer” approach to separate possible emotions based on combinations of facial and vocal features.
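The add-one, remove-one search described above can be sketched as a greedy stepwise selection. This is only an illustration under stated assumptions: the article does not give the authors' exact procedure, and the feature names, weights, and `toy_score` function here are hypothetical stand-ins for a real measure such as classifier accuracy on held-out samples.

```python
from typing import Callable, Dict, FrozenSet, Sequence


def stepwise_select(
    features: Sequence[str],
    score: Callable[[FrozenSet[str]], float],
) -> FrozenSet[str]:
    """Alternate add-one and remove-one steps until the score stops improving."""
    selected: FrozenSet[str] = frozenset()
    best = score(selected)
    improved = True
    while improved:
        improved = False
        # Forward step: try adding each unused feature, keep the best addition.
        additions = [selected | {f} for f in features if f not in selected]
        if additions:
            top = max(additions, key=score)
            if score(top) > best:
                selected, best, improved = top, score(top), True
        # Backward step: try dropping each chosen feature, keep the best removal.
        removals = [selected - {f} for f in selected]
        if removals:
            top = max(removals, key=score)
            if score(top) > best:
                selected, best, improved = top, score(top), True
    return selected


# Hypothetical usefulness of each feature; "noise" adds almost nothing.
WEIGHTS: Dict[str, float] = {
    "pitch": 0.3,
    "intensity": 0.2,
    "mouth_shape": 0.4,
    "noise": 0.01,
}


def toy_score(subset: FrozenSet[str]) -> float:
    # Reward useful features and charge a small cost per feature kept.
    return sum(WEIGHTS[f] for f in subset) - 0.05 * len(subset)


chosen = stepwise_select(list(WEIGHTS), toy_score)
```

With this toy score, the search keeps the three informative features and leaves out `noise`, because adding it costs more than it contributes.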

“The most difficult part of enabling a computer to detect human emotion is the vast variance and diversity of vocal and facial expressions due to factors such as language, culture, and individual personality, etc.,” Wang explained. “Also, as shown in our paper, there are no sharp boundaries between different emotions. The accurate identification of discriminate patterns is a challenging problem.”

The researchers suggest that a computer that can recognize human emotion could one day have applications in customer service, computer games, security/surveillance, and educational software.

Age Estimation

While most people are pretty good at telling if someone is happy or upset, determining another person’s age just by looking at them is often an even bigger challenge, at least for humans. Computer engineers Yun Fu and Thomas Huang from the University of Illinois have trained a computer system to estimate a person’s age based on their facial features.

“Human age is one of the most important attributes that can infer individual condition, anthropometric information and social background,” Fu said. “The human-centered computing systems may concern more information from people in social groups than conventional computing systems, by focusing on the ways people organize and improve their lives around computational technologies.”

Fu and Huang worked with a database of facial images from 1,600 subjects, half male and half female. Using results from previous age estimation systems, as well as a new aging characteristic they identified, the researchers trained a computer system to estimate human ages from 0 to 93 years. Their most successful algorithm, working from only a few training images, could estimate a person’s age to within five years 50% of the time and to within 10 years 83% of the time.
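Figures such as "within five years 50% of the time" are the fraction of test subjects whose estimated age falls within a given tolerance of their true age. A minimal sketch of that calculation follows; the function name and the six sample ages are made up for illustration and are not data from the study.

```python
from typing import Sequence


def fraction_within(
    true_ages: Sequence[float],
    estimated_ages: Sequence[float],
    tolerance_years: float,
) -> float:
    """Share of estimates whose absolute error is at most `tolerance_years`."""
    if len(true_ages) != len(estimated_ages) or not true_ages:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(
        1
        for truth, guess in zip(true_ages, estimated_ages)
        if abs(truth - guess) <= tolerance_years
    )
    return hits / len(true_ages)


# Made-up example data: absolute errors are 2, 7, 2, 11, 4, and 1 years.
true_ages = [4, 23, 35, 51, 68, 80]
estimated_ages = [6, 30, 33, 62, 64, 79]

within_5 = fraction_within(true_ages, estimated_ages, 5)    # 4 of 6 estimates
within_10 = fraction_within(true_ages, estimated_ages, 10)  # 5 of 6 estimates
```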

The researchers predict that age-recognition algorithms could be used to prevent young children from accessing adult Web sites, to stop vending machines from selling alcohol to underage individuals, and to determine the age demographic of people who spend more time viewing certain advertisements.

By integrating human factors into computing, researchers will continue to bridge the gap between technology and people – and no doubt produce many more futuristic applications.

More information:

Wang, Yongjin, and Guan, Ling. “Recognizing Human Emotional State From Audiovisual Signals.” IEEE Transactions on Multimedia, Vol. 10, No. 4, June 2008, pp. 659-668.

Fu, Yun, and Huang, Thomas S. “Human Age Estimation With Regression on Discriminative Aging Manifold.” IEEE Transactions on Multimedia, Vol. 10, No. 4, June 2008, pp. 578-583.

Copyright 2008 PhysOrg.com.
