Supercomputer simulation of universe may help in search for missing matter

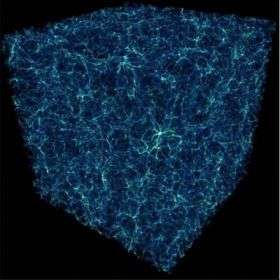

Much of the gaseous mass of the universe is bound up in a tangled web of cosmic filaments that stretch for hundreds of millions of light-years, according to a new supercomputer study by a team led by the University of Colorado at Boulder.

The study indicated a significant portion of the gas is in the filaments -- which connect galaxy clusters -- hidden from direct observation in enormous gas clouds in intergalactic space known as the Warm-Hot Intergalactic Medium, or WHIM, said CU-Boulder Professor Jack Burns of the astrophysical and planetary sciences department. The team performed one of the largest cosmological supercomputer simulations ever, cramming 2.5 percent of the visible universe inside a computer to model a region more than 1.5 billion light-years across. One light-year is equal to about six trillion miles.

A paper on the subject will be published in the Dec. 10 issue of the Astrophysical Journal. In addition to Burns, the paper was authored by CU-Boulder Research Associate Eric Hallman of APS, Brian O'Shea of Los Alamos National Laboratory, Michael Norman and Rick Wagner of the University of California, San Diego, and Robert Harkness of the San Diego Supercomputer Center.

It took the researchers nearly a decade to produce the extraordinarily complex computer code that drove the simulation, which incorporated virtually all of the known physical conditions of the universe reaching back in time almost to the Big Bang, said Burns. The simulation -- which uses advanced numerical techniques to zoom in on interesting structures in the universe -- modeled the motion of matter as it collapsed due to gravity and became dense enough to form cosmic filaments and galaxy structures.

"We see this as a real breakthrough in terms of technology and in scientific advancement," said Burns. "We believe this effort brings us a significant step closer to understanding the fundamental constituents of the universe."

According to the standard cosmological model, the universe consists of about 25 percent dark matter, 70 percent dark energy and about 5 percent normal matter, said Burns. Normal matter consists primarily of baryons -- hydrogen, helium and heavier elements -- and observations show that about 40 percent of the baryons are currently unaccounted for. Many astrophysicists believe the missing baryons are in the WHIM, Burns said.
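To put those percentages in perspective, the short Python sketch below works out what share of the universe's total content the missing baryons would represent. It uses only the rounded figures quoted above; the variable and function names are illustrative and do not come from the study.

```python
# Rounded cosmic budget quoted in the article, as fractions of the total.
DARK_MATTER = 0.25
DARK_ENERGY = 0.70
NORMAL_MATTER = 0.05

# Share of baryons (normal matter) reported as unaccounted for.
MISSING_BARYON_FRACTION = 0.40


def missing_share_of_universe(normal_matter, missing_fraction):
    """Return the missing baryons as a fraction of the whole cosmic budget."""
    return normal_matter * missing_fraction


total = DARK_MATTER + DARK_ENERGY + NORMAL_MATTER
missing = missing_share_of_universe(NORMAL_MATTER, MISSING_BARYON_FRACTION)

print(f"Budget total: {total:.2f}")
print(f"Missing baryons: {missing:.1%} of the universe's total content")
```

With these rounded inputs, the missing baryons come to roughly 2 percent of the universe's total content, or two-fifths of all normal matter.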

"In the coming years, I believe these filaments may be detectable in the WHIM using new state-of-the-art telescopes," said Burns, who along with Hallman is a fellow at CU-Boulder's Center for Astrophysics and Space Astronomy. "We think that as we begin to see these filaments and understand their nature, we will learn more about the missing baryons in the universe."

Two of the key telescopes that astrophysicists will use in their search for the WHIM are the 10-meter South Pole Telescope in Antarctica and the 25-meter Cornell-Caltech Atacama Telescope, or CCAT, being built in Chile's Atacama Desert, Burns said. CU-Boulder scientists are partners in both observatories.

The CCAT telescope will gather radiation from sub-millimeter wavelengths, which are longer than infrared waves but shorter than radio waves. It will enable astronomers to peer back in time to when galaxies first appeared -- just a billion years or so after the Big Bang -- allowing them to probe the infancy of the objects and the process by which they formed, said Burns.

The South Pole Telescope looks at millimeter, sub-millimeter and microwave wavelengths of the spectrum and is used to search for, among other things, cosmic microwave background radiation -- the cooled remnants of the Big Bang, said Burns. Researchers hope to use the telescopes to estimate heating of the cosmic background radiation as it travels through the WHIM, using the radiation temperature changes as a tracer of sorts for the massive filaments.

The CU-Boulder-led team ran the computer code for a total of about 500,000 processor hours at two supercomputing centers -- the San Diego Supercomputer Center and the National Center for Supercomputing Applications at the University of Illinois at Urbana-Champaign. The team generated about 60 terabytes of data during the calculations, equivalent to three to four times the digital text in all the volumes in the U.S. Library of Congress, said Burns.

Burns said the sophisticated computer code used for the universe simulation is similar in some respects to a code used for complex supercomputer simulations of Earth's atmosphere and climate change, since both investigations focus heavily on fluid dynamics.

Source: University of Colorado at Boulder