Earthquake Forecast Program Has Amazing Success Rate

A NASA-funded earthquake forecast program has an amazing track record. Published in 2002, the Rundle-Tiampo Forecast has correctly identified the locations of 15 of California's 16 largest earthquakes this decade, including last week's tremors.

The 10-year forecast was developed by researchers at the University of Colorado (who are now at the University of California, Davis) and at NASA's Jet Propulsion Laboratory, Pasadena, Calif. NASA and the U.S. Department of Energy funded the work.

"We're elated our computer modeling technique has revealed a relationship between past and future earthquake locations," said Dr. John Rundle, director of the Computational Science and Engineering initiative at the University of California, Davis. He leads the group that developed the forecast scorecard. "We're nearly batting a thousand, and that's a powerful validation of the promise this forecasting technique holds."

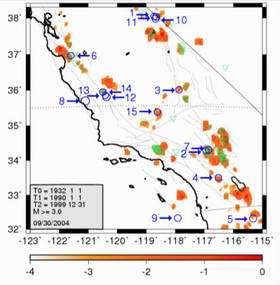

Of the 16 earthquakes of magnitude 5 and higher since Jan. 1, 2000, 15 fall on "hotspots" identified by the forecasting approach. Twelve of the 16 quakes occurred after the paper was published in the Proceedings of the National Academy of Sciences in February 2002. The scorecard uses earthquake records from 1932 onward to identify the locations most likely to experience quakes of magnitude 5 or greater between 2000 and 2010.

According to Rundle, small earthquakes of magnitude 3 and above may indicate that stress is building up along a fault. Although activity continues on most faults, some faults show increasing numbers of small quakes leading up to a big one, while others appear to shut down. Both effects may herald large events.

The scorecard is one component of NASA's QuakeSim project. "QuakeSim seeks to develop tools for quake forecasting. It integrates high-precision, space-based measurements from global positioning system satellites and interferometric synthetic aperture radar (InSAR) with numerical simulations and pattern recognition techniques," said JPL's Dr. Andrea Donnellan, QuakeSim principal investigator. "It includes historical data, geological information and satellite data to make updated forecasts of quakes, similar to a weather forecast."

JPL software engineer Jay Parker said, "QuakeSim aims to accelerate the efforts of the international earthquake science community to better understand earthquake sources and develop innovative forecasting methods. We expect adding more types of data and analyses will lead to forecasts with substantially better precision than we have today."

The scorecard forecast produced a map of California from the San Francisco Bay area to the Mexican border, divided into approximately 4,000 boxes, or "tiles." For each tile, researchers calculated the seismic potential, then color-coded the map to show the areas most likely to experience quakes over a 10-year period.
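To make the tile-based scorecard easier to picture, here is a minimal sketch of how a hit rate could be tallied once a map has been divided into tiles and some tiles have been flagged as hotspots. It assumes a simple square latitude/longitude grid with a hypothetical tile size of 0.1 degrees, a set of hotspot tiles that has already been chosen, and a list of epicenters. The names `tile_of`, `score_forecast`, `area_fraction` and `TILE_DEG` are hypothetical; the sketch does not reproduce how the Rundle-Tiampo team computes seismic potential, only how quakes might be checked against a finished map.

```python
import math
from typing import Iterable, Set, Tuple

Tile = Tuple[int, int]  # (row, column) index of one grid tile

# Hypothetical tile size in degrees; not the forecast's actual grid spacing.
TILE_DEG = 0.1


def tile_of(lat: float, lon: float, tile_deg: float = TILE_DEG) -> Tile:
    """Return the index of the grid tile containing an epicenter."""
    return (math.floor(lat / tile_deg), math.floor(lon / tile_deg))


def score_forecast(hotspots: Set[Tile],
                   epicenters: Iterable[Tuple[float, float]]) -> Tuple[int, int]:
    """Count how many epicenters land in hotspot tiles; returns (hits, total)."""
    hits = 0
    total = 0
    for lat, lon in epicenters:
        total += 1
        if tile_of(lat, lon) in hotspots:
            hits += 1
    return hits, total


def area_fraction(hotspots: Set[Tile], n_tiles: int) -> float:
    """Share of all tiles flagged as hotspots.

    A rough proxy for the share of area covered; it ignores the fact that
    latitude/longitude tiles are not all the same size on the ground.
    """
    return len(hotspots) / n_tiles


# Toy example with invented coordinates (not real forecast or catalog data).
hotspots = {tile_of(35.95, -120.45), tile_of(34.25, -118.55)}
quakes = [(35.96, -120.47), (34.27, -118.53), (32.85, -118.45)]
hits, total = score_forecast(hotspots, quakes)
print(f"{hits} of {total} quakes fell in hotspot tiles")  # prints "2 of 3 quakes fell in hotspot tiles"
```

Keeping hotspots as a set of tile indices makes each membership check constant-time, and reporting hits alongside the hotspot area fraction reflects the two numbers the article emphasizes: how many large quakes were caught, and how small a share of the state had to be flagged to catch them.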

"Essentially, we look at past data and perform math operations on it," said James Holliday, a University of California, Davis graduate student working on the project. Instrumental earthquake records are available for Southern California since 1932 and for Northern California since 1967. The scorecard is more precise than simply looking at where quakes have occurred in the past, Rundle said.

"In California, quake activity happens at some level almost everywhere. This method narrows the locations of the largest future events to about six percent of the state," Rundle said. "This information will help engineers and government decision makers prioritize areas for further testing and seismic retrofits."

So far, the technique has missed only one earthquake: a magnitude 5.2 quake on June 15, 2004, under the ocean near San Clemente Island. Rundle believes this "miss" may be due to larger uncertainties in locating earthquakes in this offshore region of the state. San Clemente Island is at the edge of the coverage area for Southern California's seismograph network. Rundle and Holliday are working to refine the method and find new ways to visualize the data.

Other forecast collaborators include Kristy Tiampo, the University of Western Ontario, Canada; William Klein, Boston University, Boston; and Jorge S. Sa Martins, Universidade Federal Fluminense, Rio de Janeiro, Brazil.

Source: NASA