January 30, 2014 feature

3-D Air-Touch display operates on mobile devices

(Phys.org) While interactive 3D systems such as the Wii and Kinect have been popular for several years, 3D technology has yet to become part of mobile devices. Researchers are working on it, however, and one of the most recent papers demonstrates a 3D "Air-Touch" system that allows users to touch floating 3D images displayed by a mobile device. Optical sensors embedded in the display pixels can sense the movement of a bare finger in the 3D space above the device, which could lead to a number of novel applications.

The researchers, Guo-Zhen Wang, et al., from National Chiao Tung University in Taiwan, have published a paper on the 3D Air-Touch system in a recent issue of the IEEE's Journal of Display Technology.

"The 3D Air-Touch system in mobile devices can offer non-contact finger detection and limited viewpoint for operating on a floating image, which can be applied to 3D games, interactive digital signage and so on," Wang told Phys.org. "Although current technology still has some issues, such as yield rate, sensor uniformity and so on, we predict that this technology could become available in the near future."

Because mobile devices are small and portable, implementing a 3D system on them differs from implementing one on TVs and other large screens. Large 3D systems often require additional bulky devices or cameras for motion detection. On mobile devices, such add-ons would be inconvenient, and cameras have a limited field of view for detecting objects close to the display. Some proposed 3D systems for mobile devices use sensors near the screen, but these systems require bright environmental lighting, so they don't work well in dark conditions.

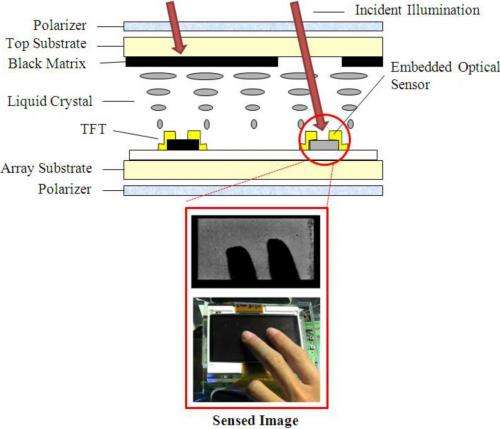

Working around these restrictions, Wang, et al., designed a 3D system in a 4-inch display screen in which optical sensors are embedded directly into the display pixels, while an infrared backlight is incorporated into the device itself. The researchers also added angular scanning illuminators to the edges of the display to provide adequate lighting. Overall, these three components provide a 3D system that is compact, has a wide field of view, and is independent of ambient conditions.

The researchers explain that the algorithm for calculating the 3-axis (x, y, z) position of the fingertip is less complex than that used for image processing, allowing for rapid real-time calculations. First, the infrared backlight and the optical sensors are used to determine the 2D (x, y) position of the fingertip. Then, to calculate the depth of the fingertip, the angular illuminators emit infrared light at different tilt angles. An analysis of the accumulated intensity at different regions identifies the scanning angle with maximum reflectance, which yields the depth and therefore the 3D location of the fingertip.
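The two-step idea can be illustrated with a minimal Python sketch. It is not the researchers' implementation: the function names, the intensity-weighted centroid used for the 2D step, and the geometry (an illuminator at the display edge x = 0 whose beam is tilted above the display plane, so that depth is x times the tangent of the tilt angle) are all assumptions made for illustration.

```python
import math


def fingertip_xy(sensor_image, pitch_cm):
    """Estimate (x, y) in cm as the intensity-weighted centroid of the
    reflected-infrared sensor image (an assumed method, not the paper's)."""
    total = sum_x = sum_y = 0.0
    for row_idx, row in enumerate(sensor_image):
        for col_idx, value in enumerate(row):
            total += value
            sum_x += value * col_idx
            sum_y += value * row_idx
    if total == 0:
        return None  # nothing reflected: no fingertip detected
    return (sum_x / total * pitch_cm, sum_y / total * pitch_cm)


def best_scan_angle(intensity_by_angle):
    """Return the tilt angle (degrees) with the largest accumulated intensity."""
    return max(intensity_by_angle, key=intensity_by_angle.get)


def fingertip_depth(x_cm, angle_deg):
    """Depth at which a beam leaving the display edge (x = 0) at angle_deg
    above the display plane reaches horizontal distance x_cm (assumed geometry)."""
    return x_cm * math.tan(math.radians(angle_deg))


def locate_fingertip(sensor_image, pitch_cm, intensity_by_angle):
    """Combine the 2D step and the angular-scan step into an (x, y, z) estimate."""
    xy = fingertip_xy(sensor_image, pitch_cm)
    if xy is None:
        return None
    x, y = xy
    angle = best_scan_angle(intensity_by_angle)
    return (x, y, fingertip_depth(x, angle))


# Hypothetical readings: one bright sensor at column 2, row 1 (1 cm pitch),
# and accumulated intensities recorded at four tilt angles.
image = [[0, 0, 0], [0, 0, 5], [0, 0, 0]]
scan = {15: 0.2, 30: 0.5, 45: 0.9, 60: 0.4}
print(locate_fingertip(image, 1.0, scan))
```

In this sketch the depth step needs only a maximum over a handful of scan angles and one tangent, which is consistent with the article's point that the calculation is lighter than full image processing. A real system would also need calibration and noise handling, which are left out here.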

Experimental results showed that the prototype 3D Air-Touch system meets the accuracy requirement for 2D touch systems, which is a maximum positioning error of no more than 0.5 cm. The 3D touch prototype has a maximum error of 0.45 cm at large depths and smaller errors at smaller depths. The prototype's depth range is 3 cm, but the researchers predict that this range can be further increased by improving the sensor sensitivity and scanning resolution.

In the future, the 3D touch interface might also be extended from single-touch to multi-touch functionality, which could enable more applications. However, multi-touch functionality will require overcoming the occlusion effect, which occurs when one fingertip blocks another so that the sensors cannot distinguish between the two. The researchers also plan to work on 3D Air-gesture operation for making 3D signatures on mobile devices.

More information: Guo-Zhen Wang, et al. "Bare Finger 3D Air-Touch System Using an Embedded Optical Sensor Array for Mobile Displays." Journal of Display Technology, Vol. 10, No. 1, January 2014. DOI: 10.1109/JDT.2013.2277567

© 2014 Phys.org. All rights reserved.