Team created techniques to analyze thousands of hours of NASA tape

NASA recorded thousands of hours of audio from the Apollo lunar missions, yet most of us have only been able to hear the highlights.

The agency recorded all communications between the astronauts, mission control specialists and back-room support staff during the historic moon missions in addition to Neil Armstrong's famous quotes from Apollo 11 in July 1969.

Most of the audio remained in storage on outdated analog tapes for decades until researchers at The University of Texas at Dallas launched a project to analyze the audio and make it accessible to the public.

Researchers at the Center for Robust Speech Systems (CRSS) in the Erik Jonsson School of Engineering and Computer Science (ECS) received a National Science Foundation grant in 2012 to develop speech-processing techniques to reconstruct and transform the massive archive of audio into Explore Apollo, a website that provides public access to the materials. The project, in collaboration with the University of Maryland, included audio from all of Apollo 11 and most of the Apollo 13, Apollo 1 and Gemini 8 missions.

A Giant Technological Leap

Transcribing and reconstructing the huge audio archive would require a giant leap in speech processing and language technology. For instance, the communications were captured on more than 200 14-hour analog tapes, each with 30 tracks of audio. The solution would need to decipher communication with garbled speech, technical interference and overlapping audio loops. Imagine Apple's Siri trying to transcribe discussions amid random interruptions and as many as 35 people in different locations, often speaking with regional Texas accents.

The project, led by CRSS founder and director Dr. John H.L. Hansen and research scientist Dr. Abhijeet Sangwan, included a team of doctoral students who worked to establish solutions to digitize and organize the audio. They also developed algorithms to process, recognize and analyze the audio to determine who said what and when. The algorithms are described in the November issue of IEEE/ACM Transactions on Audio, Speech, and Language Processing.

Seven undergraduate senior design teams supervised by the CRSS helped create Explore Apollo to make the information publicly accessible. The project also received assistance from the University's Science and Engineering Education Center (SEEC), which evaluated the Explore Apollo site.

Five years later, the team is completing its work, which has led to advances in technology to convert speech to text, analyze speakers and understand how people collaborated to accomplish the missions.

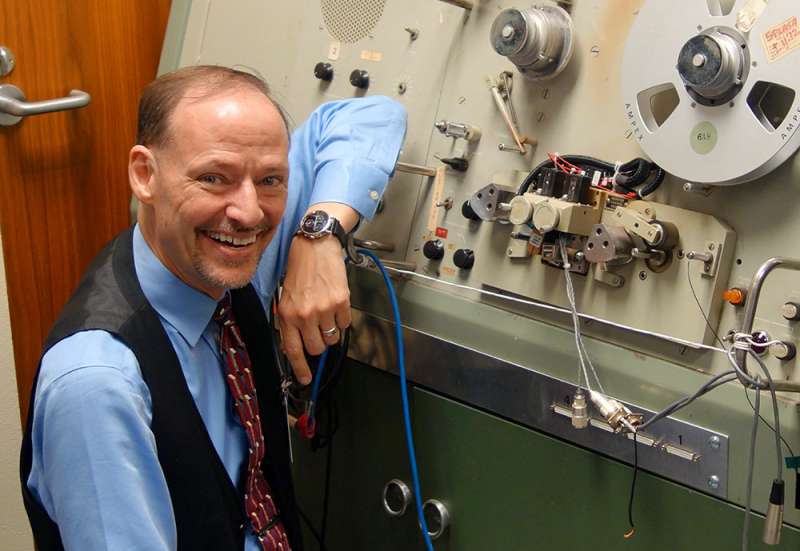

"CRSS has made significant advancements in machine learning and knowledge extraction to assess human interaction for one of the most challenging engineering tasks in the history of mankind," said Hansen, associate dean for research in ECS, professor of electrical and computer engineering, professor in the School of Behavioral and Brain Sciences, and Distinguished Chair in Telecommunications.

Refining Retro Equipment

When they began their work, researchers discovered that their first task was to digitize the audio. Transferring the audio to a digital format proved to be an engineering feat in itself. The only way to play the reels was on a SoundScriber, a 1960s piece of equipment at the NASA Johnson Space Center in Houston.

"NASA pointed us to the SoundScriber and said do what you need to do," Hansen said.

The device could read only one track at a time. The user had to mechanically rotate a handle to move the tape read head from one track to another. By Hansen's estimate, it would take at least 170 years to digitize just the Apollo 11 mission audio using the technology.

"We couldn't use that system, so we had to design a new one," Hansen said. "We designed our own 30-track read head, and built a parallel solution to capture all 30 tracks at one time. This is the only solution that exists on the planet."

The new read head cut the digitization process from years to months. That task became the job of Tuan Nguyen, a biomedical engineering senior who spent a semester working in Houston.
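As a rough check on the "years to months" claim, the sketch below computes pure playback time from the figures quoted earlier in the article (about 200 tapes, 14 hours each, 30 tracks per tape). It assumes continuous, real-time playback with no tape handling or downtime, so it is only a lower bound and does not attempt to reproduce Hansen's 170-year estimate. The function and constant names are illustrative.

```python
TAPES = 200            # "more than 200" tapes, per the article
HOURS_PER_TAPE = 14    # length of each tape in hours
TRACKS_PER_TAPE = 30   # audio tracks recorded on each tape


def playback_hours(tracks_read_at_once: int) -> int:
    """Real-time playback hours needed to capture every track of every tape."""
    # Ceiling division: number of passes over a tape to cover all tracks.
    passes_per_tape = -(-TRACKS_PER_TAPE // tracks_read_at_once)
    return TAPES * HOURS_PER_TAPE * passes_per_tape


one_track = playback_hours(1)     # reader that handles one track per pass
all_tracks = playback_hours(30)   # 30-track parallel read head

print(f"one track at a time: {one_track:,} hours "
      f"(~{one_track / (24 * 365):.1f} years nonstop)")
print(f"30 tracks at once:   {all_tracks:,} hours "
      f"(~{all_tracks / 24:.0f} days nonstop)")
```

Under these assumptions, reading all 30 tracks in a single pass divides the playback time by 30, which is consistent with a job shrinking from years to months.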

Amplifying Voices of Heroes Behind the Heroes

Once they transferred the audio from reels to digital files, researchers needed to create software that could detect speech activity, including tracking each person speaking and what they said and when, a process called diarization. They also needed to track speaker characteristics to help researchers analyze how people react in tense situations. In addition, the tapes included audio from various channels that needed to be placed in chronological order.
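The chronological ordering step can be illustrated with a minimal sketch, assuming each channel has already been diarized into time-stamped segments on a shared clock. The `Segment` type, the channel labels and the sample data are hypothetical and are not taken from the CRSS system; the sketch only shows how per-channel, time-sorted segments could be merged into one timeline.

```python
import heapq
from typing import Iterable, Iterator, List, NamedTuple


class Segment(NamedTuple):
    start: float   # seconds on a shared mission clock (assumed)
    end: float
    channel: str   # hypothetical channel label
    speaker: str   # hypothetical speaker label produced by diarization


def merge_channels(channels: Iterable[List[Segment]]) -> Iterator[Segment]:
    """Merge per-channel segment lists, each already sorted by start time."""
    return heapq.merge(*channels, key=lambda seg: seg.start)


# Made-up example data for two channels.
flight_loop = [
    Segment(0.0, 2.5, "FLIGHT", "spk_A"),
    Segment(6.0, 7.0, "FLIGHT", "spk_A"),
]
capcom_loop = [
    Segment(1.0, 3.0, "CAPCOM", "spk_B"),
    Segment(4.0, 5.5, "CAPCOM", "spk_C"),
]

timeline = list(merge_channels([flight_loop, capcom_loop]))
for seg in timeline:
    print(f"{seg.start:6.1f}s  {seg.channel:<7} {seg.speaker}")
```

Because `heapq.merge` reads its inputs lazily, the same approach would also work if each channel were a generator streaming segments from disk rather than a list held in memory.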

The researchers who worked on the project said one of the most challenging parts was figuring out how things worked at NASA during the missions so they could understand how to reconstruct the massive amount of audio.

"This is not something you can learn in a class," said Chengzhu Yu PhD'17. Yu began his doctoral program at the start of the project and graduated last spring. Now, he works as a research scientist focusing on speech recognition technology at Tencent's artificial intelligence research center in Seattle.

Lakshmish Kaushik, a PhD student who left a previous job to work on the project, also aims to devote his career to speech recognition technology. His role was to develop algorithms that distinguished the array of voices on multiple channels.

"The last four years have been really exhilarating," Kaushik said.

The team has demonstrated the interactive website at the Perot Museum of Nature and Science in Dallas. For Hansen, the project has been a chance to highlight the work of the many people involved in the lunar missions beyond the astronauts.

"When one thinks of Apollo, we gravitate to the enormous contributions of the astronauts who clearly deserve our praise and admiration. However, the heroes behind the heroes represent the countless engineers, scientists and specialists who brought their STEM-based experience together collectively to ensure the success of the Apollo program," Hansen said. "Let's hope that students today continue to commit their experience in STEM fields to address tomorrow's challenges."

More information: Chengzhu Yu et al., Active Learning Based Constrained Clustering For Speaker Diarization, IEEE/ACM Transactions on Audio, Speech, and Language Processing (2017). DOI: 10.1109/TASLP.2017.2747097

Provided by University of Texas at Dallas