July 31, 2017 feature

What's the best way to rank research institutes?

(Phys.org)—Assessing and ranking research institutes is important for awarding grants, recruiting employees, promoting institutes, and other reasons. But finding a fair and accurate method for assessing the performance of research institutes is challenging due to the many factors involved, such as the number of published papers and citations, the unreliability of some citations, and the fact that many papers have multiple authors from different institutes with unequal contributions.

In a new study published in EPL, a team of scientists from China whose members study complex systems, data science, and physics has developed a new approach called the credit allocation method (CAM) for ranking research institutes that accounts for all of these factors by using many thousands of directed networks.

"Different from other metrics based on citations, our work considers the citation network structure and provides one way to rank the credit for research institutes for different research fields from the viewpoint of academic reputation," coauthor Jian-Guo Liu, at the University of Shanghai for Science and Technology, the Shanghai University of Finance and Economics, and the University of Fribourg in Switzerland, told Phys.org.

The basic idea is that each directed network is built around one randomly chosen paper, which is linked to all of the papers that have cited it. Each of these citing papers is then linked to all of the other papers that it cites, as long as those papers have at least one author from one of the same research institutes as the original paper. By using a formula that accounts for the order in which research institutes are listed on a paper (those listed first receive more credit than those listed later), the researchers computed the credit allocated to each research institute due to the original paper.
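To make the construction concrete, here is a minimal Python sketch of one such network and the resulting credit shares. It is an illustration under stated assumptions, not the formula from the EPL paper: the article does not give the exact weighting, so the sketch assumes a harmonic scheme in which the institute listed in position k receives weight proportional to 1/k. The function names and the input layout (`cites`, `institutes_of`) are hypothetical.

```python
from collections import defaultdict


def harmonic_weights(institutes):
    """Assumed weighting (not from the paper): position k gets weight ~ 1/k.

    `institutes` is an ordered list of distinct institute names.
    The returned weights sum to 1.
    """
    raw = [1.0 / (k + 1) for k in range(len(institutes))]
    total = sum(raw)
    return {inst: w / total for inst, w in zip(institutes, raw)}


def allocate_credit(target, cites, institutes_of):
    """Share the credit for `target` among its institutes.

    cites:         dict mapping a paper id to the set of paper ids it cites
    institutes_of: dict mapping a paper id to its ordered list of institutes
    """
    target_insts = set(institutes_of[target])

    # Step 1: link the chosen paper to every paper that cites it.
    citing = [p for p, refs in cites.items() if target in refs]

    # Step 2: link each citing paper to the papers it cites, keeping only
    # those that share at least one institute with the chosen paper.
    # The count records how many citing papers point at each kept paper.
    strength = defaultdict(int)
    for p in citing:
        for q in cites[p]:
            if target_insts & set(institutes_of.get(q, [])):
                strength[q] += 1

    # Step 3: accumulate order-weighted credit for the chosen paper's institutes.
    credit = defaultdict(float)
    for q, s in strength.items():
        for inst, w in harmonic_weights(institutes_of[q]).items():
            if inst in target_insts:
                credit[inst] += s * w

    total = sum(credit.values())
    return {inst: c / total for inst, c in credit.items()} if total else {}
```

In this sketch, an institute's share grows when it is listed earlier on the chosen paper and when it also appears on other papers that are frequently cited alongside it.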

After repeating this process for nearly half a million papers in the field of physics, with authors from approximately 19,000 research institutes, the researchers considered another problem that makes the assessment of research institutes difficult: the citation data is often unreliable. The researchers refer to a recent study that found that more than 30% of research papers had at least one incorrect citation, and that 10% of all citations were incorrect, meaning the papers cited did not clearly support the statements they were meant to support. To address this problem, the researchers randomly rewired some of the citation links in the networks, creating an artificial disturbance intended to model the inaccuracies in the citation data.
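The rewiring step can be sketched in a few lines of Python. This is only an illustration: the article does not say what fraction of links was rewired or how replacement targets were chosen, so the `fraction` parameter, the uniform choice of a new target, and the function name are all assumptions.

```python
import random


def rewire_citations(cites, fraction, seed=None):
    """Return a copy of `cites` with roughly `fraction` of the links redirected.

    cites: dict mapping a paper id to the set of paper ids it cites.
    Each link is independently rewired with probability `fraction` to a
    uniformly chosen paper that the citing paper does not already cite
    (an assumed scheme). The number of references per paper is preserved.
    """
    rng = random.Random(seed)
    papers = sorted(set(cites) | {q for refs in cites.values() for q in refs})

    rewired = {}
    for p, refs in cites.items():
        new_refs = set()
        for q in sorted(refs):
            if rng.random() < fraction:
                # Exclude self-citations and papers already on the reference list.
                candidates = [r for r in papers
                              if r != p and r not in refs and r not in new_refs]
                if candidates:
                    q = rng.choice(candidates)
            new_refs.add(q)
        rewired[p] = new_refs
    return rewired
```

Recomputing the rankings on the rewired networks and comparing them with the original rankings gives one way to gauge how sensitive a method is to citation errors.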

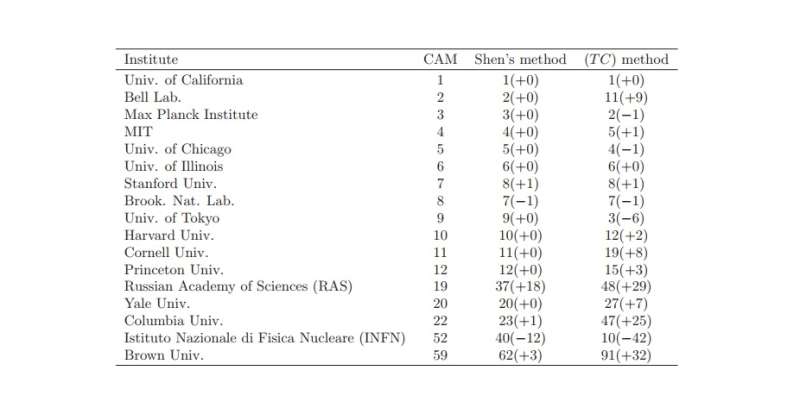

In the final rankings, many of the top-ranked physics research institutes identified by the new method corresponded to institutes with high reputations. The top four overall were the University of California, Bell Labs, the Max Planck Institute, and MIT. The top four in China were the University of Science and Technology of China, Nanjing University, Peking University, and Tsinghua University.

Although the researchers showed that the new method outperforms other methods of assessing research institutes, they note that it has some shortcomings. In particular, it does not account for the fact that older papers tend to have more citations than newer papers, so research institutes with longer histories tend to be ranked higher. The researchers plan to address this limitation in the future by accounting for the age of the institution.

"In future work, we also plan to investigate the citation networks of some specific research fields, such as management science, complexity, statistical physics, and computer science, in order to rank the research institute credit of these fields," Liu said. "We also plan to develop a website to publish the ranking results for researchers all over the world."

More information: J.-P. Wang et al. "Credit allocation for research institutes." EPL. DOI: 10.1209/0295-5075/118/48001

Journal information: Europhysics Letters (EPL)

© 2017 Phys.org