HPC4Mfg paper manufacturing project yields first results

Simulations run at the U.S. Department of Energy's Lawrence Berkeley National Laboratory as part of a unique collaboration with Lawrence Livermore National Laboratory and an industry consortium could help U.S. paper manufacturers significantly reduce production costs and increase energy efficiency.

The project is one of the seedlings for the DOE's HPC for Manufacturing (HPC4Mfg) initiative, a multi-lab effort to use high performance computing to address complex challenges in U.S. manufacturing. Through HPC4Mfg, Berkeley Lab and LLNL are partnering with the Agenda 2020 Technology Alliance, a group of paper manufacturing companies with a roadmap to reduce their energy use by 20 percent by 2020.

The papermaking industry ranks third among the country's largest energy users, behind only petroleum refining and chemical production, according to the U.S. Energy Information Administration. To address this issue, the LLNL and Berkeley Lab researchers are using advanced supercomputer modeling techniques to identify ways that paper manufacturers could reduce energy and water consumption during the papermaking process. The first phase of the project targeted "wet pressing," an energy-intensive process in which mechanical pressure squeezes water out of the wood pulp and into press felts, which absorb it like a sponge before the pulp is sent through a drying process.

"The major purpose is to leverage our advanced simulation capabilities, high performance computing resources and industry paper press data to help develop integrated models to accurately simulate the water papering process," said Yue Hao, an LLNL scientist and a co-principal investigator on the project. "If we can increase the paper dryness after pressing and before the drying (stage), that would provide the opportunity for the paper industry to save energy."

Increasing the paper's dryness by 10-15 percent, he added, would save paper manufacturers up to 20 percent of the energy used in the drying stage, or up to 80 trillion BTUs (British thermal units) per year, and as much as $400 million annually across the industry.
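As a rough consistency check on these figures, the short Python sketch below derives two numbers the article implies but does not state: the total drying-stage energy and the energy price behind the dollar estimate. It assumes that the "up to" values all describe the same best-case scenario, which the source does not confirm, and the function names are illustrative only.

```python
# Upper-bound figures quoted in the article (assumed to describe one scenario).
ENERGY_SAVED_BTU = 80e12         # up to 80 trillion BTUs per year
COST_SAVED_USD = 400e6           # up to $400 million per year
DRYING_SAVINGS_FRACTION = 0.20   # up to 20 percent of drying-stage energy


def implied_drying_energy_btu(saved_btu, fraction):
    """Total drying-stage energy implied by a saving and its share of the total."""
    return saved_btu / fraction


def implied_price_per_million_btu(cost_usd, saved_btu):
    """Energy price (USD per million BTUs) implied by a cost and energy saving."""
    return cost_usd / (saved_btu / 1e6)


total_btu = implied_drying_energy_btu(ENERGY_SAVED_BTU, DRYING_SAVINGS_FRACTION)
price = implied_price_per_million_btu(COST_SAVED_USD, ENERGY_SAVED_BTU)

print(f"Implied drying-stage energy: {total_btu / 1e12:.0f} trillion BTUs per year")
print(f"Implied energy price: ${price:.2f} per million BTUs")
```

Under that assumption, the quoted savings correspond to roughly 400 trillion BTUs of drying energy per year and an energy price of about $5 per million BTUs.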

Multi-scale, Multi-physics Modeling

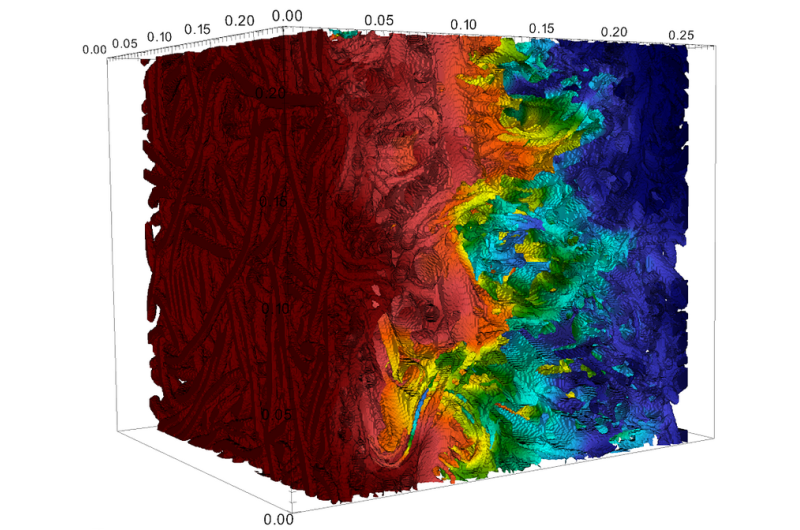

For the HPC4Mfg project, the researchers combined a computer simulation framework developed at LLNL, which integrates mechanical deformation and two-phase flow models, with a full-scale microscale flow model developed at Berkeley Lab to model the complex pore structures in the press felts.

Berkeley Lab's contribution centered on a solver for flow and transport in complex geometries developed by David Trebotich, a computational scientist in the Computational Research Division at Berkeley Lab and co-PI on the project. This solver is based on the Chombo software libraries developed in the lab's Applied Numerical Algorithms Group and is the basis for other application codes including Chombo-Crunch, a subsurface flow and reactive transport code that has been used to study reactive transport processes associated with carbon sequestration and fracture evolution. This suite of simulation tools is known to run at scale on supercomputers at Berkeley Lab's National Energy Research Scientific Computing Center (NERSC), a DOE Office of Science User Facility.

"I used the flow and transport solvers in Chombo-Crunch to model flow in paper press felt, which is used in the drying process," Trebotich explained. "The team at LLNL has an approach that can capture the larger scale pressing or deformation as well as the flow in bulk terms. However, not all of the intricacies of the felt and the paper are captured by this model, just the bulk properties of the flow and deformation. My job was to improve their modeling at the continuum scale by providing them with an upscaled permeability-to-porosity ratio from pore scale simulation data."

Trebotich carried out a series of production runs on NERSC's Edison system and provided his LLNL colleagues with numbers from these microscale simulations at compressed and uncompressed pressures, which improved their model, he added.
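To illustrate what an upscaled permeability and porosity mean in practice, here is a minimal Python sketch. It is not the Chombo-Crunch method or API. It assumes a simple voxel image of the felt and applies Darcy's law, k = μ q L / Δp, to a single averaged flux from a hypothetical pore-scale run. All names and input values are invented for illustration and are not project data.

```python
def porosity(voxels):
    """Fraction of voxels marked as pore space (1 = pore, 0 = solid fiber)."""
    flat = [v for row in voxels for v in row]
    return sum(flat) / len(flat)


def darcy_permeability(flux_m_per_s, viscosity_pa_s, length_m, pressure_drop_pa):
    """Permeability in m^2 from Darcy's law: k = mu * q * L / delta_p.

    flux_m_per_s is the volumetric flow rate per unit cross-sectional area,
    averaged over the whole sample (the "upscaled" quantity).
    """
    return flux_m_per_s * viscosity_pa_s * length_m / pressure_drop_pa


# Hypothetical 2D slice of a felt sample: 1 = pore, 0 = fiber.
sample = [
    [1, 0, 1, 1],
    [1, 1, 0, 1],
    [0, 1, 1, 1],
]

# Hypothetical averaged results from one pore-scale simulation.
phi = porosity(sample)
k = darcy_permeability(
    flux_m_per_s=1e-3,       # assumed mean flux through the sample
    viscosity_pa_s=1e-3,     # approximate viscosity of water
    length_m=2e-3,           # assumed sample thickness
    pressure_drop_pa=1e3,    # assumed pressure difference across the sample
)

print(f"porosity = {phi:.2f}")
print(f"permeability = {k:.2e} m^2")
print(f"permeability-to-porosity ratio = {k / phi:.2e} m^2")
```

Repeating such a calculation for compressed and uncompressed felt geometries would give one permeability and porosity pair per state, which is the kind of bulk parameter a continuum-scale model can consume.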

"This was true 'HPC for manufacturing,'" Trebotich said, noting that the team recently released its final report on the first phase of the pilot project. "We used 50,000-60,000 cores at NERSC to do these simulations. It's one thing to take a research code and tune it for a specific application, but it's another thing to make it effective for industry purposes. Through this project we have been able to help engineering-scale models be more accurate by informing better parameterizations from micro-scale data."

Going forward, to create a more accurate and reliable computational model and develop a better understanding of these complex phenomena, the researchers say they need to acquire more complete data from the industry, such as paper material properties, high-resolution micro-CT images of paper and experimental data derived from scientifically controlled dewatering tests.

"The scientific challenge is that we need to develop a fundamental understanding of how water flows and migrates," Hao said. "All the physical phenomena involved make this problem a tough one because the dewatering process isn't fully understood due to lack of sufficient data."

Provided by Lawrence Berkeley National Laboratory