Automatic live subtitling system being trialed at academic conferences

An automatic subtitling system powered by speech recognition technology under development at Kyoto University made its debut on 22 August at the inaugural meeting of the Assistive and Accessible Computing (AAC) Study Group of the Information Processing Society of Japan (IPSJ). The system is intended to help people with hearing difficulties and is scheduled to be trialed over an extended period at academic conferences.

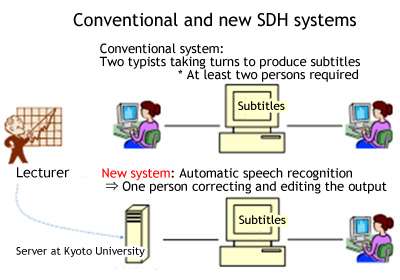

Long-term trial use of a high-accuracy speech recognition system at academic conferences is an unprecedented step. It is hoped that the trial will eventually lead to a dramatically lower-cost alternative to the conventional method of providing subtitles for the deaf and hard-of-hearing (SDH), which requires two or more specially trained and experienced typists working together.

A new accessibility law scheduled to take effect in Japan next year mandates provision of "reasonable accommodation" to persons with disabilities. With the number of students with special needs rising steadily, universities and conference organizers are under increasing pressure to find ways to ensure accessibility of their lectures and presentations. In the context of assisting deaf and hard-of-hearing people, this usually means providing subtitles and text summaries. However, persons capable of summarizing highly technical information and typing it out in real time continue to be in limited supply, posing a serious human resources challenge for many universities and academic institutions.

Against this backdrop, two KU researchers working on natural speech recognition—Professor Tatsuya Kawahara of the Graduate School of Informatics and Dr Yuya Akita, a Lecturer at the Graduate School of Economics—have succeeded in developing a system that automatically generates subtitles for speeches and lectures as they are delivered.

To achieve the highest possible accuracy, the speech recognition system must be customized in advance with the vocabulary and topic of the talk in question, which requires the operator to obtain the proceedings paper beforehand. Even then, the output will inevitably contain some errors that need to be corrected manually.

That said, for a reasonably successful session, the amount of correction required should be within the capacity of a single person, making the procedure much less expensive than manual subtitling (less than one-tenth of the cost, by some estimates).

The project team plans to trial the system at academic conferences on a continuous basis with the cooperation of participating speakers, who will be asked to submit proceedings papers in advance, speak slowly and clearly, and repeat questions from the audience. Through these trials, the team hopes to keep improving the performance of the system and to continue accumulating operational know-how.

Provided by Kyoto University