4-D movies capture every jiggle, creating realistic digital avatars

"Everybody jiggles," according to Dr. Michael Black, Director at the Max Planck Institute for Intelligent Systems (MPI-IS) in Tübingen, Germany. We may not like it, but how we jiggle says a lot about who we are. Our soft tissue (otherwise known as fat and muscle) deforms, wobbles, waves, and bounces as we move. These motions may provide clues about our risk for cardiovascular disease and diabetes. They also make us look real. Digital characters either lack natural soft-tissue motion or require time-consuming animation to make them believable. Now researchers at MPI-IS have captured people and how they jiggle in exacting detail and have created realistic 3D avatars that bring natural body motions to digital characters.

To put jiggling under a microscope, the researchers needed a new type of scanner to capture 3D body shape as it is deforming. Most body scanners capture the static shape of the body. The new MPI scanner is actually made of 66 cameras and special projectors that shine a speckled pattern on a subject's body. The system uses stereo, something like what humans do to see depth but, in this case, with 66 digital eyes. Dr. Javier Romero, a research scientist at MPI, says the system captures high-speed "4D movies" of 3D body shape, where the 4th dimension is time. The system captures 3D body shapes with about 150,000 3D points 60 times a second. According to Romero, this level of detail shows "ripples and waves propagating through soft tissue," i.e. jiggle. The system is the world's first full-body 4D scanner (ps.is.tuebingen.mpg.de/dynamic_capture) and was custom built by 3dMD Systems (www.3dmd.com/), a leader in medical grade scanning systems.
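The stereo principle mentioned above can be illustrated with a minimal sketch. It assumes the simplest textbook case of two rectified, parallel cameras, where depth follows from the shift (disparity) of a point between the two images. The function name, focal length, and camera spacing are hypothetical values chosen for illustration; the actual 66-camera scanner is far more elaborate and its parameters are not given here.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres of a point seen by two rectified, parallel cameras.

    Uses the standard pinhole relation: depth = focal length * baseline / disparity.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


# Hypothetical camera parameters (assumptions, not the scanner's real values).
FOCAL_PX = 1000.0    # focal length expressed in pixels
BASELINE_M = 0.25    # distance between the two camera centres in metres

# A larger shift between the two images means the point is closer.
near = depth_from_disparity(FOCAL_PX, BASELINE_M, 100.0)
far = depth_from_disparity(FOCAL_PX, BASELINE_M, 50.0)
print(near, far)  # 2.5 5.0
```

Halving the disparity doubles the estimated depth, which is why fine surface texture, such as a projected speckle pattern, helps the cameras match points precisely.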

To analyze the data, it must first be put "into correspondence," says Naureen Mahmood, one of the study's co-authors. The researchers take an average 3D body shape and deform it to match every frame in the 4D movie. The process, called 4Cap, tracks points on the body across time, enabling their motion to be analyzed. Romero says that this is similar to motion capture, or "mocap," commonly used in the animation industry, except that mocap systems typically track around 50 points on the body using special markers, while 4Cap tracks 15,000 points without any markers.

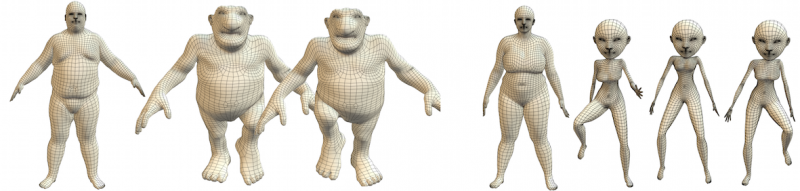

With this data, Dr. Gerard Pons-Moll, a postdoctoral researcher at MPI, has created Dyna, a method to make highly realistic 3D avatars that move and jiggle like real people. Soft-tissue motions are critical to creating believable characters that look alive. "If it doesn't jiggle, it's not human," says Pons-Moll. Applying machine learning algorithms, the team measured the statistics of soft-tissue motion and how these motions vary from person to person. Depending on the body-mass index (BMI) of the avatar, its soft tissue will move differently.

Animating soft-tissue motion is typically difficult. Previous methods either use unrealistic or complex physical simulations or require extensive hand animation. With Dyna, animators only need to control the sequence of poses of the body, and the soft-tissue dynamics are then estimated automatically. "Because Dyna is a mathematic model, we can apply it to fantasy characters as well," says Pons-Moll. Dyna can take the jiggle of a heavy-set person and transfer it to a thin person or to an ogre, troll, or other cartoon character. This makes Dyna simpler than simulation-based approaches and efficient to use in animating characters for movies or games. Animators can even edit the motions to exaggerate effects or change how a body deforms.

Dyna is not just for games but might one day be used in your doctor's office. "How your fat jiggles provides information about where it is located and whether it is dangerous," says Black. By tracking, modeling, and analyzing body fat in motion, Black sees the opportunity to non-invasively get an idea of what is under our skin. One day, determining your risk for heart disease or diabetes might involve jumping around in front of a camera.

The work appears at ACM SIGGRAPH in Los Angeles, August 11, 2015. SIGGRAPH is the leading conference for research in computer graphics.

More information: "Dyna: A Model of Dynamic Human Shape in Motion." ACM Transactions on Graphics, (Proc. SIGGRAPH), 2015, Volume 34 Issue 4, August 2015 dl.acm.org/citation.cfm?id=2766993

Project page: dyna.is.tue.mpg.de/
