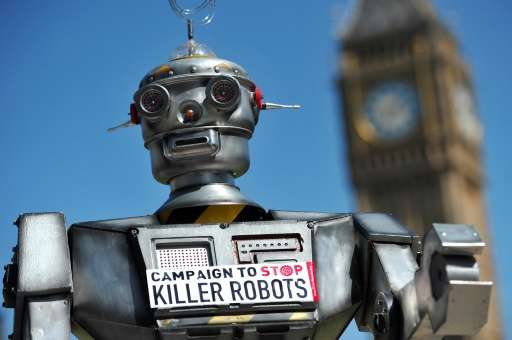

No sci-fi joke: 'killer robots' strike fear into tech leaders

It sounds like a science-fiction nightmare. But "killer robots" have the likes of British scientist Stephen Hawking and Apple co-founder Steve Wozniak fretting and warning that they could fuel ethnic cleansing and an arms race.

In an open letter, around 1,000 technology chiefs wrote that autonomous weapons, which use artificial intelligence to select targets without human intervention, have been described as "the third revolution in warfare, after gunpowder and nuclear arms."

Unlike drones, which require a human operator to act, this kind of robot would have some ability to make decisions and act on its own.

"The key question for humanity today is whether to start a global AI (artificial intelligence) arms race or to prevent it from starting," they wrote.

"If any major military power pushes ahead with AI weapon development, a global arms race is virtually inevitable," said the letter, released at the opening of the 2015 International Joint Conference on Artificial Intelligence in Buenos Aires.

The idea of an automated killing machine, made famous by Arnold Schwarzenegger's "Terminator," is moving swiftly from science fiction to reality, according to the scientists.

"The deployment of such systems is—practically if not legally—feasible within years, not decades," the letter said.

Ethnic cleansing made easier?

The scientists painted the doomsday scenario of autonomous weapons falling into the hands of terrorists, dictators or warlords hoping to carry out ethnic cleansing.

"There are many ways in which AI can make battlefields safer for humans, especially civilians, without creating new tools for killing people," the letter said.

In addition, the scientists noted that although such weapons might reduce battlefield casualties, their development could also lower the threshold for going to war.

The group concluded with an appeal for a "ban on offensive autonomous weapons beyond meaningful human control."

Elon Musk, the billionaire co-founder of PayPal and head of SpaceX, a private space-travel technology venture, also urged the public to join the campaign.

"If you're against a military AI arms race, please sign this open letter," tweeted the tech boss.

Threat or not?

Sounding a touch more moderate, however, was Australia's Toby Walsh.

The artificial intelligence professor at NICTA and the University of New South Wales noted that every technology has the potential to be used for good or evil ends.

Ricardo Rodriguez, an AI researcher at the University of Buenos Aires, also said the worries could be overstated.

"Hawking believes that we are closing in on the Apocalypse with robots, and that in the end, AI will be competing with human intelligence," he said.

"But the fact is that we are far from making killer military robots."

Authorities are gradually waking up to the risk of robot wars.

In May 2014, governments began talks for the first time on so-called "lethal autonomous weapons systems."

In 2012, Washington imposed a requirement, valid for 10 years, that automated weapons remain under human control, a move welcomed by campaigners even though they said it should go further.

Some weapons have been stopped in their infancy.

After UN-backed talks, blinding laser weapons were banned in 1998 before they ever hit the battlefield.

© 2015 AFP