Team develops camera that uses sensors with just 1,000 pixels

Thanks to the "megapixel wars", we are used to cameras with tens of megapixels. The sensors in our cell phones and SLRs are made of silicon (Si), which is sensitive to visible light and is therefore well suited to consumer photography. The abundance of silicon, coupled with advances in CMOS fabrication, has driven down the cost of sensors while steadily increasing their resolution.

In many wavebands outside silicon's sensitivity, sensing can be very expensive. Two examples are short-wave infrared (0.9-3 microns) and mid-wave infrared (3-8 microns), where a megapixel sensor typically costs tens of thousands of dollars. As a consequence, high-resolution short-wave and mid-wave infrared sensors are out of reach for the average consumer.

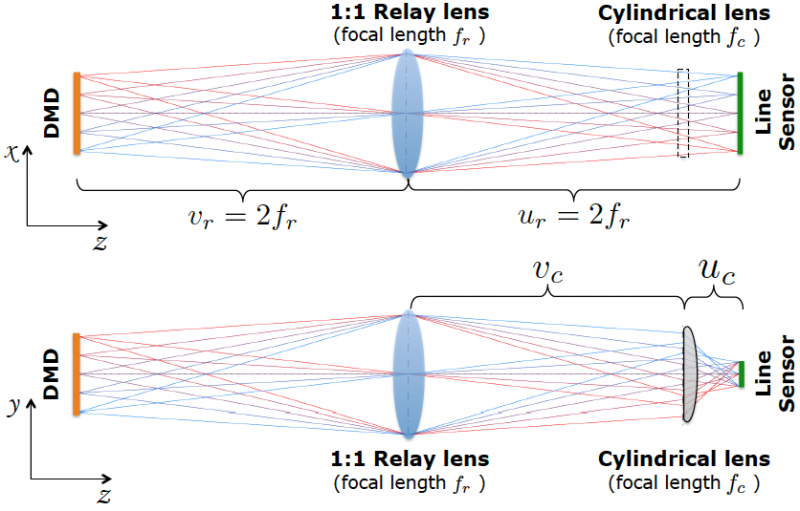

Researchers at Carnegie Mellon University (CMU) and Columbia University have developed a new camera, called LiSens, that uses a sensor with just a thousand pixels yet produces images and videos at nearly megapixel resolution. In other words, LiSens takes a low-resolution sensor and, through novel optics, makes it capable of sensing scenes at a resolution higher than that of the sensor itself. This is achieved by focusing the scene onto a digital micromirror device (DMD) and then focusing the DMD onto the low-resolution sensor. The DMD is an array of tiny mirrors, each of which can direct light either towards or away from the sensor.

In LiSens, we use a line sensor (a linear array of sensing elements) arranged so that each of its pixels sums the light from an entire column of the DMD. Because the DMD is programmable and can block light at any chosen pixel, each measurement from the line sensor is a coded sum along a line in the scene. Given multiple such measurements with different codes, we can recover the image focused on the DMD at its full resolution.
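The coded line sums can be written as a small linear model. The notation here is ours, not the article's, and it describes an idealized, noise-free system: $x$ is the $R \times C$ image focused on the DMD, $\phi_t \in \{0,1\}^{R \times C}$ is the $t$-th mirror pattern (1 means the mirror directs light toward the sensor), and $y_t[c]$ is the reading of the line-sensor pixel that sees column $c$. For a 1024x768 DMD image read by a 1024-pixel line sensor, $R = 768$ and $C = 1024$.

$$
y_t[c] = \sum_{r=1}^{R} \phi_t[r,c]\, x[r,c], \qquad t = 1,\dots,T,\quad c = 1,\dots,C
$$

Stacking the $T$ readings of one sensor pixel gives an independent linear system per column:

$$
\mathbf{y}_c = \Phi_c\, \mathbf{x}_c, \qquad (\Phi_c)_{t,r} = \phi_t[r,c], \qquad \mathbf{y}_c \in \mathbb{R}^{T},\ \mathbf{x}_c \in \mathbb{R}^{R}
$$

If $T \ge R$ and $\Phi_c$ has full column rank, each column is recovered by least squares, $\mathbf{x}_c = (\Phi_c^{\top}\Phi_c)^{-1}\Phi_c^{\top}\mathbf{y}_c$; with fewer codes ($T < R$), additional prior knowledge about the scene is needed to pick a solution.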

A line-sensor measurement looks nothing like a traditional image. However, because we know the mapping from scene to sensor, we can design algorithms that invert these measurements and compute an image of the scene. This lets us sense scenes at high resolution (1024x768 pixels in our prototype) despite using a low-resolution sensor (1024 pixels in our prototype).
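To make the inversion concrete, here is a minimal sketch that simulates coded column sums for a tiny hypothetical 3x4 scene and then recovers it exactly. It is an illustration of the principle, not the LiSens reconstruction algorithm, which the article does not describe. It assumes noise-free measurements and exactly as many codes as DMD rows, so each column's readings form a square, invertible linear system; real systems may instead use fewer measurements with a regularized (for example, compressive) reconstruction. The function names `measure`, `solve`, and `reconstruct`, the scene values, and the code patterns are all hypothetical.

```python
from fractions import Fraction


def measure(scene, codes):
    """Simulate noise-free line-sensor readings, one per DMD column per code.

    scene: R x C intensities focused on the DMD.
    codes: T binary R x C mirror patterns (1 = mirror sends light to the sensor).
    Returns T x C readings with readings[t][c] = sum_r codes[t][r][c] * scene[r][c].
    """
    rows, cols = len(scene), len(scene[0])
    return [
        [sum(code[r][c] * scene[r][c] for r in range(rows)) for c in range(cols)]
        for code in codes
    ]


def solve(a, b):
    """Solve the square system a x = b exactly with Gauss-Jordan elimination."""
    n = len(a)
    m = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = next((i for i in range(col, n) if m[i][col] != 0), None)
        if pivot is None:
            raise ValueError("code matrix is singular for this column")
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate this unknown from every other row.
        for i in range(n):
            if i != col and m[i][col] != 0:
                factor = m[i][col] / m[col][col]
                m[i] = [x - factor * y for x, y in zip(m[i], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def reconstruct(readings, codes):
    """Recover the DMD image column by column (assumes T == R codes)."""
    rows, cols = len(codes[0]), len(codes[0][0])
    image = [[None] * cols for _ in range(rows)]
    for c in range(cols):
        # T x R matrix of the mirror states seen by sensor pixel c.
        phi = [[code[r][c] for r in range(rows)] for code in codes]
        y = [readings[t][c] for t in range(len(codes))]
        for r, value in enumerate(solve(phi, y)):
            image[r][c] = value
    return image


# Hypothetical 3 x 4 scene and three binary patterns. Every column sees the same
# invertible code matrix [[1, 1, 0], [0, 1, 1], [1, 0, 1]] (determinant 2).
scene = [
    [3, 1, 4, 1],
    [5, 9, 2, 6],
    [5, 3, 5, 8],
]
base = [[1, 1, 0], [0, 1, 1], [1, 0, 1]]
codes = [[[base[t][r]] * len(scene[0]) for r in range(3)] for t in range(3)]

recovered = reconstruct(measure(scene, codes), codes)
print(recovered == scene)  # exact recovery with noise-free data
```

Solving each column separately follows from the optics described above: a sensor pixel only ever sees light from its own DMD column, so the full problem splits into many small systems that can be solved in parallel. Exact `Fraction` arithmetic keeps the toy example free of rounding error; a real implementation would use floating point and a least-squares or regularized solver.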

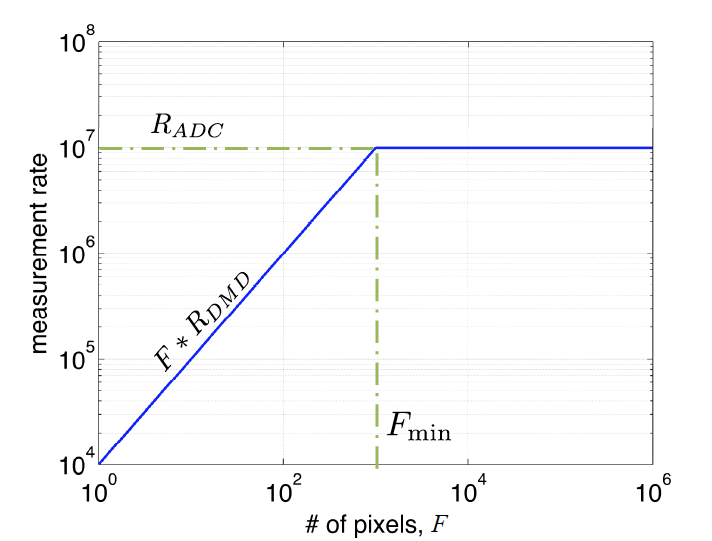

LiSens builds on the so-called single-pixel camera (SPC), which uses a single photodetector to sense the scene, and can be interpreted as a multi-pixel extension of it. LiSens delivers measurement rates roughly 100 to 1000 times higher than those of the SPC, which allows it to sense scenes at significantly higher spatial and temporal resolutions. The price we pay is a moderate increase in the cost of the sensor.
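A back-of-the-envelope count shows where a gain of this order comes from. The sketch below counts how many DMD patterns each design needs to capture one 1024x768 frame without compressive sensing. It assumes, as our own simplification, noise-free measurements and a line sensor that can be read out once per pattern as quickly as the SPC's photodetector. Under those assumptions it yields an idealized ceiling of 1024x; real hardware limits such as readout speed can reduce the gain, so this is not a reproduction of the quoted figures.

```python
# Idealized pattern counts for one full-resolution, non-compressive capture.
# Assumptions (ours): noise-free data, one sensor readout per DMD pattern,
# and equal pattern rates for both cameras.
ROWS, COLS = 768, 1024       # 1024x768 DMD image
pixels = ROWS * COLS         # unknowns per frame

# Single-pixel camera: each pattern yields one scalar, so it needs one
# pattern per unknown.
spc_patterns = pixels

# Line sensor with one pixel per DMD column: each pattern yields COLS coded
# sums in parallel, so each column's ROWS unknowns need ROWS patterns.
lisens_patterns = pixels // COLS

speedup = spc_patterns / lisens_patterns
print(f"SPC: {spc_patterns} patterns, LiSens: {lisens_patterns} patterns, "
      f"ratio {speedup:.0f}x")
```

The ratio is simply the number of line-sensor pixels, since each pattern produces that many measurements at once instead of one.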

Aswin Sankaranarayanan's work was published at the 2015 International Conference on Computational Photography (ICCP), held in Houston, TX.