Algorithm automatically cuts boring parts from long videos

Smartphones, GoPro cameras and Google Glass are making it easy for anyone to shoot video anywhere. But they do not make it any easier to watch the tedious footage that can result. Carnegie Mellon University computer scientists, however, have invented a video highlighting technique that can automatically pick out the good parts.

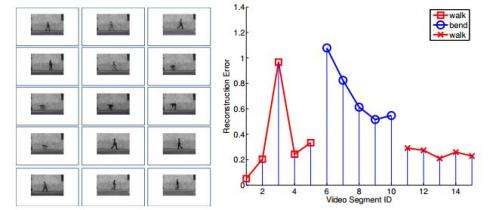

Called LiveLight, this method constantly evaluates action in the video, looking for visual novelty and ignoring repetitive or eventless sequences, to create a summary that enables a viewer to get the gist of what happened. What it produces is a miniature video trailer. Although not yet comparable to a professionally edited video, it can help people quickly review a long video of an event, a security camera feed, or video from a police cruiser's windshield camera.

A particularly cool application is using LiveLight to automatically digest videos from, say, GoPro or Google Glass, and quickly upload thumbnail trailers to social media. Uploading a short summary instead of the full footage avoids costly Internet data charges, and the automatic process spares users the tedious manual editing of long videos. This application, along with the surveillance camera auto-summarization, is now being developed for the retail market by PanOptus Inc., a startup founded by the inventors of LiveLight.

LiveLight produces its summary in "quasi-real-time," with just a single pass through the video. It's not instantaneous, but it doesn't take long: LiveLight might take one to two hours to process one hour of raw video, and it can do so on a conventional laptop. With a more powerful backend computing facility, production time can be shortened to mere minutes, according to the researchers.

Eric P. Xing, professor of machine learning, and Bin Zhao, a Ph.D. student in the Machine Learning Department, will present their work on LiveLight June 26 at the Computer Vision and Pattern Recognition Conference in Columbus, Ohio. Example videos and summaries are available online at http://supan.pc.cs.cmu.edu:8080/VideoSummarization/.

"The algorithm never looks back," said Zhao, whose research specialty is computer vision. Rather, as the algorithm processes the video, it compiles a dictionary of its content. The algorithm then uses the learned dictionary to decide, very efficiently, whether a newly seen segment is similar to previously observed events, such as routine traffic on a highway. Segments identified as trivial recurrences or as eventless are excluded from the summary. Novel sequences that do not appear in the learned dictionary, such as an erratic car or a traffic accident, would be included in the summary.
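The single-pass idea described above can be illustrated with a minimal Python sketch. It is not the researchers' implementation: the dictionary here is just a list of previously seen feature vectors, and novelty is measured as the distance to the nearest stored vector, a deliberately simple stand-in for however LiveLight actually compares a segment with its learned dictionary. The per-segment feature vectors, the `threshold` value, the function names, and the choice to seed the dictionary with the first segment are all assumptions made for illustration.

```python
import math
from typing import List, Sequence, Tuple


def distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def summarize(segments: Sequence[Sequence[float]],
              threshold: float) -> List[Tuple[int, float]]:
    """Single pass over the segments; return (index, novelty) for novel ones.

    Assumption: the first segment only seeds the dictionary and is not scored.
    """
    dictionary: List[List[float]] = []
    summary: List[Tuple[int, float]] = []
    for index, features in enumerate(segments):
        if not dictionary:
            dictionary.append(list(features))  # seed with the first segment
            continue
        # Novelty = distance to the closest entry already in the dictionary.
        novelty = min(distance(features, atom) for atom in dictionary)
        if novelty > threshold:
            summary.append((index, novelty))   # keep the segment
            dictionary.append(list(features))  # remember it for later segments
        # Otherwise the segment resembles something already seen and is skipped.
    return summary


# Hypothetical 2-D features: routine highway traffic, then an accident (index 3).
toy_segments = [
    [1.0, 0.0], [1.1, 0.0], [0.9, 0.1],
    [5.0, 4.0],   # unlike anything seen so far
    [1.0, 0.05],
    [5.1, 4.0],   # resembles index 3, which is already in the dictionary
]
print([index for index, _ in summarize(toy_segments, threshold=1.0)])  # [3]
```

Each segment is examined once and then either skipped or stored, so the loop never revisits earlier footage. The last toy segment is dropped because a similar event already entered the dictionary, which mirrors the way recurring events are excluded from the summary.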

Though LiveLight can produce these summaries automatically, people can also be included in the loop for compiling the summary. In that instance, Zhao said, LiveLight provides a ranked list of novel sequences for a human editor to consider for the final video. In addition to selecting the sequences, a human editor might choose to restore some of the footage deemed worthless to provide context or visual transitions before and after the sequences of interest.
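The human-in-the-loop workflow can be sketched in the same spirit. The Python below assumes each candidate sequence is a hypothetical `(segment_index, novelty_score)` pair; it ranks the candidates for an editor and then restores a few neighboring segments around the editor's picks for context. The function names, the scores, and the `context` parameter are illustrative assumptions, not part of LiveLight.

```python
from typing import List, Sequence, Tuple


def rank_candidates(scored: Sequence[Tuple[int, float]]) -> List[Tuple[int, float]]:
    """Order candidate segments from most to least novel."""
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def with_context(chosen: Sequence[int], total_segments: int,
                 context: int = 1) -> List[int]:
    """Add `context` segments before and after each chosen segment."""
    keep = set()
    for index in chosen:
        first = max(0, index - context)                  # clip at video start
        last = min(total_segments - 1, index + context)  # clip at video end
        keep.update(range(first, last + 1))
    return sorted(keep)  # back in playback order


# Hypothetical novelty scores for three candidate segments of an 18-segment video.
candidates = [(3, 5.7), (10, 2.1), (17, 8.4)]
ranked = rank_candidates(candidates)
editor_picks = [index for index, _ in ranked[:2]]  # editor keeps the top two
print(with_context(editor_picks, total_segments=18))  # [2, 3, 4, 16, 17]
```

Ranking and context restoration are kept as separate steps so that an editor's choices, rather than the scores alone, decide what ends up in the final video.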

"We see this as potentially the ultimate unmanned tool for unlocking video data," Xing said. Video has never been easier for the average person to shoot, but reviewing and tagging the raw video remains so tedious that ever larger volumes of video are going unwatched or discarded. The interesting moments captured in those videos thus go unseen and unappreciated, he added.

The ability to detect unusual behaviors amidst long stretches of tedious video could also be a boon to security firms that monitor and review surveillance camera video.

More information: Bin Zhao and Eric P. Xing. Quasi Real-Time Summarization for Consumer Videos, Proceedings of the 26th IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2014). www.cs.cmu.edu/~epxing/papers/ … hao_Xing_cvpr14a.pdf

Provided by Carnegie Mellon University