Does Skype help or hinder communication?

Speech is one of the most important forms of communication between humans.

The internet has opened doors for us to communicate with people across the globe – but the technology often leads to misunderstanding.

As pointed out by Naomi Harte of Trinity College in a recent presentation delivered to the Science Gallery Dublin, communicating effectively through technology is a lot more complex than you may imagine.

The quality of the sound we hear, the images we see and the emotions that speech conveys all matter greatly for the creation of valid meaning. This complexity is often not captured when communicating through the internet and with machines.

Lost in translation

Whether you are making a Skype call or giving a voice command to your smartphone, communicating through technology can be tricky.

Anything that disrupts our ordinary speech rhythms, as well as the way we process tone of voice, facial expression and other physiological cues, can affect interpretation of the speech act and transform meaning.

Understanding the complex ways in which we communicate can help us develop technologies which will improve online exchanges and reduce misunderstandings, so engineers and researchers such as Harte have been focused on two ways to improve digital communication:

- improving digital speech quality

- transmitting emotion in human-computer interactions and internet telephony.

Improving speech quality

In many situations where humans and gadgets try to talk to each other – through dialogue systems, e-books, tablets, mobile phones and computer games – it is the machine which struggles to understand the spoken cues and then formulate an intelligible and natural-sounding response.

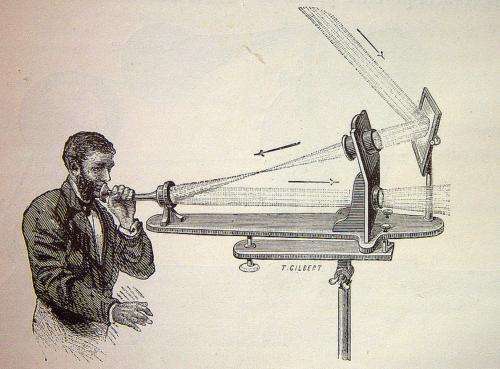

Researchers are trying to improve human-to-human communication by improving how the internet's multimedia capabilities work together. At Trinity College, Harte is currently working on a project to improve something called "Audio-Visual Speech Recognition".

This means using visual data such as tracking lip movements to improve speech recognition and thus the audio signal. Using similar mechanisms the research group Sigmedia hope to improve human-to-machine communication by having machines sense lip and eye movements, gesture and voice.
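As a rough illustration of the idea, the sketch below combines word probabilities from an audio recogniser with probabilities from a lip-reading model. It is a minimal example of weighted "late fusion", a common approach in audio-visual speech recognition, and not a description of the Sigmedia group's actual system. The function names, the `audio_weight` parameter and all the probability values are hypothetical.

```python
import math

def fuse_scores(audio_probs, visual_probs, audio_weight=0.5):
    """Combine two per-word probability tables by weighted log-linear fusion.

    audio_weight (hypothetical parameter) says how much to trust the audio:
    1.0 means audio only, 0.0 means lip-reading only.
    """
    floor = 1e-9  # avoids log(0) for words a model considers impossible
    log_scores = {}
    for word, a in audio_probs.items():
        v = visual_probs.get(word, floor)
        log_scores[word] = (audio_weight * math.log(max(a, floor))
                            + (1.0 - audio_weight) * math.log(max(v, floor)))
    # Turn the combined log scores back into probabilities that sum to 1.
    top = max(log_scores.values())
    unnormalised = {w: math.exp(s - top) for w, s in log_scores.items()}
    total = sum(unnormalised.values())
    return {w: u / total for w, u in unnormalised.items()}

def recognise(audio_probs, visual_probs, audio_weight=0.5):
    """Return the most probable word after fusing both streams."""
    fused = fuse_scores(audio_probs, visual_probs, audio_weight)
    return max(fused, key=fused.get)

# Made-up numbers: over a noisy line the audio slightly favours "goat",
# but the speaker's lips visibly close, which points strongly to "boat".
audio = {"boat": 0.45, "goat": 0.55}
visual = {"boat": 0.90, "goat": 0.10}

print(recognise(audio, visual, audio_weight=1.0))  # audio only
print(recognise(audio, visual, audio_weight=0.5))  # audio and lips together
```

With these invented numbers, the audio-only guess is "goat", while the fused guess switches to "boat" because the visual evidence outweighs the ambiguous sound. In a real system the weight would typically be lowered as the audio gets noisier.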

Transmitting emotions

The other major challenge to improving speech technologies is related to the emotional content of speech.

Designers and researchers know this – but the field of affective computing (computing that influences emotion) is relatively young. At this point speech recognition systems are mostly inadequate to the task of conveying and recognising emotion.

This makes these applications both less user-friendly and less effective.

For these systems to improve there must be research into how to create a framework for the classification of emotional signals, in particular given that they vary greatly across cultures. Fusing audio and visual cues and accounting for cultural and situational variation is key to this process.

Researchers must ask how well their system functions when speech is informal, when people are speaking in a second language, or when the speakers are emotionally influenced.

The importance of non-verbal cues

Understanding a spoken message also depends on what we see at the time.

To illustrate this point, Harte combines an identical sound with two videos of lips mouthing different phrases.

Although the sound remains the same, the audience believes they have heard two different words – even after she explains the trick.

Essentially, the same sound will be heard differently depending on the visual signal. This phenomenon is called the "McGurk effect", and shows that speech is seen as well as heard.

This example points to a fact that anthropologists, psychologists, mothers and salespeople know well: non-verbal cues like tone of voice and gesture texture our understanding of any speech-act.

This has to be taken into account when communicating digitally.

Even speech itself is hard to understand without context. In addition, the interpretation of the context changes according to our cultural background. The tempo and rhythm of our speech, how long we pause and how long we wait after someone has spoken before initiating a response differ across cultures.

In some languages, the time we wait before responding is much longer than in others, where it is customary to overlap our response with the end of another person's statement. This affects communication to such a great extent that a native English speaker choosing to speak in Spanish will mostly abide by the customary patterns of English, and vice versa.

A time lag during a Skype voice call can thus intensify misunderstanding and dissonance in inter-cultural communications. This may be exacerbated by poor audio or video quality.

Does digital communication have a place in business?

There is a general belief that investment in communications technology can cut the cost of international business and collaboration.

Thus Google Hangouts, Skype and Facebook video are increasingly used for professional purposes such as conferences, international meetings, student lessons and supervision.

There are many documented examples of successes, failures and misunderstandings.

Successful international business will probably continue to rely on handshakes, given the importance of physical presence in conveying emotion, creating trust and building empathy.

However, businesses also run on efficiency.

If technology can improve to the extent that it enables the processing of non-verbal gestures (such as lip and eye movements), then the reduced costs in terms of travel will continue to make it a lucrative area of academic research, technological investment and business practice.

One thing is certain: improvements will depend increasingly on the synthesis of multimedia capabilities and recognition of our cultural differences in communicating, interpreting and understanding one another.

Source: The Conversation

This story is published courtesy of The Conversation (under Creative Commons-Attribution/No derivatives).
