Closing the last Bell-test loophole for photons

(Phys.org) – An international team of researchers has reached a milestone in experimental confirmation of a key tenet of quantum mechanics, using ultra-sensitive photon detectors devised by scientists at the National Institute of Standards and Technology's Physical Measurement Laboratory (PML).

The work, reported last month in Nature, closes the last remaining loophole that could undermine a quantum-mechanical interpretation of results from observations of pairs of "entangled" photons. Entanglement is an exclusively quantum, non-classical phenomenon in which the properties of the two photons in a pair are intrinsically correlated – no matter how far apart they are – yet are not determined until the act of measurement.

Albert Einstein, among others, did not believe that this was a complete description of nature. He argued that entangled particles actually have inherent properties that are somehow hidden but exist before measurement, and that a measurement on one particle cannot instantaneously influence the other. This view has come to be known as "local realism."

In 1964, Irish physicist John Bell formulated a definitive test of local realism, showing that if local realism is true, there are mathematical limits on the correlations between different measurements performed on the particles. If those limits are exceeded, local realism cannot account for the results, and the particles are obeying quantum-mechanical rules, which predict exactly such violations.
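To make Bell's "mathematical limits" concrete, here is a minimal sketch using the textbook CHSH form of Bell's inequality. It is an illustration only; the article does not say which form of the inequality the Vienna team tested. It assumes polarization-entangled photons in the state (|HH> + |VV>)/√2, for which quantum mechanics predicts a correlation E(a, b) = cos 2(a − b) between polarizer angles a and b. The function names are hypothetical. The sketch enumerates every local-realist assignment of predetermined outcomes to show the bound |S| ≤ 2, then evaluates the quantum prediction at the standard optimal angles.

```python
import itertools
import math

def quantum_correlation(a_deg: float, b_deg: float) -> float:
    """Quantum correlation E(a, b) for (|HH> + |VV>)/sqrt(2), polarizers at a and b (degrees)."""
    return math.cos(math.radians(2 * (a_deg - b_deg)))

def chsh(E, a: float, a2: float, b: float, b2: float) -> float:
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return E(a, b) - E(a, b2) + E(a2, b) + E(a2, b2)

def local_bound() -> int:
    """Local realism: each photon carries a predetermined outcome (+1 or -1) for every setting.
    Check all 16 deterministic assignments; statistical mixtures of them cannot do better."""
    best = 0
    for A, A2, B, B2 in itertools.product((1, -1), repeat=4):
        s = A * B - A * B2 + A2 * B + A2 * B2
        best = max(best, abs(s))
    return best

S_quantum = chsh(quantum_correlation, 0.0, 45.0, 22.5, 67.5)
print(f"local-realist bound: |S| <= {local_bound()}")
print(f"quantum prediction:   S = {S_quantum:.4f}")  # 2*sqrt(2), about 2.8284
```

The quantum value 2√2 exceeds the local-realist limit of 2. A measured S above 2 is the kind of violation a Bell test looks for.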

In the years since, many "Bell tests" have been performed, but critics have identified several conditions, known as loopholes, under which the results could be considered inconclusive. For entangled photons there have been three major loopholes, two of which were closed by previous experiments. The remaining one, known as the "detection-efficiency" or "fair-sampling" loophole, arises because, until now, the detectors used in these experiments have captured too small a fraction of the photons, and the photon sources have been too inefficient. The validity of such experiments therefore rests on the assumption that the detected photons are a statistically fair sample of all the photons. That, in turn, leaves open the possibility that the complete data, including the photons that were never detected, could be explained by local realism.
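Why detector efficiency matters can be sketched with a simple model. The assumptions are the textbook CHSH inequality, a maximally entangled pair, and identical detectors with efficiency eta. To avoid the fair-sampling assumption, every emitted pair is counted, and an undetected photon is simply recorded as outcome +1. The model and its function names are hypothetical illustrations, not the Vienna team's analysis. Other inequalities and non-maximally entangled states can lower the requirement; Eberhard showed that an efficiency of about 2/3 suffices in principle. The threshold printed here is therefore specific to this toy model.

```python
import math

S_QM = 2 * math.sqrt(2)  # ideal quantum CHSH value for a maximally entangled pair
LOCAL_BOUND = 2.0        # largest CHSH value any local-realist model can produce

def chsh_no_fair_sampling(eta: float) -> float:
    """CHSH value when all pairs are counted and a missed photon is recorded as +1.

    Both photons detected (probability eta^2): ideal correlations, giving eta^2 * S_QM.
    Neither detected (probability (1-eta)^2): outcomes (+1, +1) for every setting pair,
    giving (1-eta)^2 * (1 - 1 + 1 + 1).
    Exactly one detected: the detected outcome averages to zero for this state,
    so these events contribute nothing.
    """
    return eta**2 * S_QM + 2 * (1 - eta) ** 2

def threshold_efficiency(tol: float = 1e-12) -> float:
    """Bisect for the smallest efficiency at which S exceeds the local bound.
    S increases monotonically on [0.5, 1], with S(0.5) < 2 < S(1)."""
    lo, hi = 0.5, 1.0
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if chsh_no_fair_sampling(mid) > LOCAL_BOUND:
            hi = mid
        else:
            lo = mid
    return hi

eta_min = threshold_efficiency()
print(f"minimum detector efficiency: {eta_min:.4f}")  # about 0.8284
print(f"closed form 2/(1+sqrt 2):    {2 / (1 + math.sqrt(2)):.4f}")
print(f"pairs with both photons seen at that efficiency: {eta_min**2:.1%}")
```

Below the threshold, the undetected events dilute the correlations enough that a local-realist model could reproduce the full data set. This is exactly the gap that the fair-sampling assumption papers over.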

The new research, conducted at the Institute for Quantum Optics and Quantum Information in Austria, closes the fair-sampling loophole by combining an improved photon source (spontaneous parametric down-conversion in a Sagnac configuration) with ultra-sensitive detectors provided by the Single Photonics and Quantum Information project in PML's Quantum Electronics and Photonics Division. That combination, the researchers write, was "crucial for achieving a sufficiently high collection efficiency." The result is a high-accuracy data set, requiring no fair-sampling assumption or correction of count rates, that violates the local-realist limit by nearly 70 standard deviations, confirming quantum entanglement.

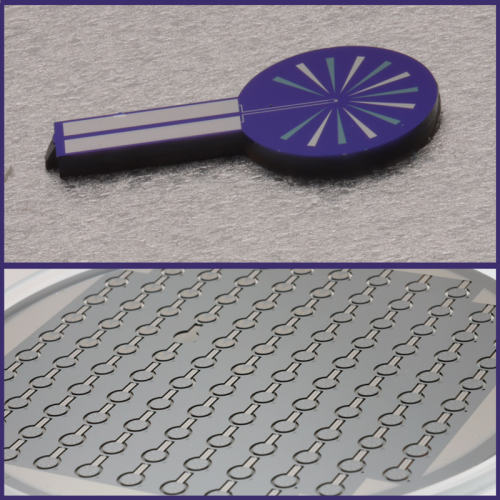

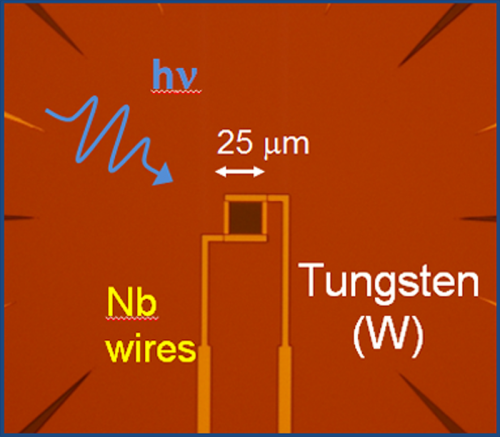

The photon detectors are transition-edge sensors (TESs), a design that provides single-photon detection efficiencies as high as 98%. Each unit, about 25 µm × 25 µm, contains a 20 nm thick tungsten film held at a temperature (about 100 mK) right at the transition between its superconducting and normal-resistance states. A small bias voltage is applied across the sensor. When a photon arriving through a coupled optical fiber deposits its energy in the tungsten film, the film's temperature rises, its resistance increases sharply, and the resulting drop in current signals a detection.
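The detection chain just described (photon energy, then temperature rise, then resistance rise, then current drop under a fixed bias voltage) can be sketched numerically. Only the 810 nm wavelength and the roughly 100 mK operating temperature come from the article, and the physical constants are exact SI values. The transition shape, width, normal-state resistance, heat capacity, bias voltage and operating point are all hypothetical, order-of-magnitude assumptions rather than NIST's device parameters. The model also ignores heat flow and electrothermal feedback, so it is a static snapshot rather than a pulse simulation.

```python
import math

H = 6.62607015e-34        # Planck constant, J*s (exact SI value)
C_LIGHT = 299_792_458.0   # speed of light, m/s (exact)
EV = 1.602176634e-19      # joules per electronvolt (exact)

# --- Hypothetical, illustrative device parameters (NOT NIST's values) ---
T_C = 0.100           # transition temperature, K (article: about 100 mK)
WIDTH = 0.0005        # transition width, K (assumed)
R_NORMAL = 10.0       # normal-state resistance, ohms (assumed)
HEAT_CAPACITY = 1e-16 # film heat capacity, J/K (assumed order of magnitude)
V_BIAS = 1e-6         # bias voltage, V (assumed)
T_BIAS = 0.0995       # operating point just inside the transition, K (assumed)

def photon_energy_joules(wavelength_m: float) -> float:
    """Photon energy E = h * c / wavelength."""
    return H * C_LIGHT / wavelength_m

def resistance(temp_k: float) -> float:
    """Toy smooth step from ~0 (superconducting) to R_NORMAL across the transition."""
    return R_NORMAL / (1.0 + math.exp(-(temp_k - T_C) / WIDTH))

energy = photon_energy_joules(810e-9)
delta_t = energy / HEAT_CAPACITY             # instantaneous rise, no heat flow or feedback
i_before = V_BIAS / resistance(T_BIAS)       # voltage bias: I = V / R
i_after = V_BIAS / resistance(T_BIAS + delta_t)

print(f"810 nm photon: {energy:.3e} J = {energy / EV:.2f} eV")
print(f"temperature rise: {delta_t * 1e3:.2f} mK")
print(f"current: {i_before * 1e6:.3f} uA -> {i_after * 1e6:.3f} uA")
```

Because the sensor is voltage-biased, a higher resistance means less current. That is why a single absorbed photon shows up as a dip in current.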

Not only are TES detectors highly efficient, but they are intrinsically free of spurious "dark counts" – detection signals that occur when no photon is present. "This combination," the researchers note, "is imperative for an experiment in which no correction of count rates can be tolerated."

For the experiment, Sae Woo Nam, project leader for Single Photonics and Quantum Information, and colleagues provided a set of TES detectors optimized for the 810 nm photons produced by the source. Each was carefully constructed to minimize losses that can result from packaging and source-to-detector fiber coupling. "Our packaging is one of the key enabling technologies that make the experiment possible," Nam says.

Another is the absence of dark counts. "In a test of local realism, the margin for error is very small," Nam says. "You can't correct for any errors because you can't be sure whether it is truly an error or 'reality.' Only detectors such as the TES, which essentially have no intrinsic dark counts, can be used for the types of experiments that have been conducted to date with entangled photons."

The work was particularly demanding for the PML team in Boulder, Colorado, because all the experiments were conducted in Vienna, Austria.

"The most challenging part of our role was the remote distance and waiting," Nam says. "We had some very nice results as early as November 2010. However, reproducing the results after our initial work has been demanding because of changes in experimental apparatus in Vienna.

"In addition, the cryogenic systems for our TES detectors were supplied by the European scientists, as opposed to systems that we have built and tested at NIST. Getting these new systems working without touching them has been a challenge for everyone."

If recent successes are predictive of the future, PML's TES technology will continue to provide state-of-the-art capabilities to numerous other experiments and research projects.

"The single-photon detectors developed by the NIST team have proven to be a game-changer for the quantum information research community around the world," says Robert Hickernell, Acting Chief of the Quantum Electronics and Photonics Division. "Many major research groups in the quantum optics field now rely on NIST's detectors, based on their record efficiency and superior cryogenics and packaging, for seminal results such as this closing of the fair-sampling loophole."

More information: www.nature.com/nature/journal/ … ull/nature12012.html

Journal information: Nature

Provided by National Institute of Standards and Technology