April 22, 2013 report

The emergence of complex behaviors through causal entropic forces

(Phys.org) An ambitious new paper published in Physical Review Letters seeks to describe intelligence as a fundamentally thermodynamic process. The authors appeal to entropy to motivate a new formalism that has shown remarkable predictive power. To illustrate their principles they developed software called Entropica which, when applied to a broad class of rudimentary examples, efficiently produces unexpectedly complex behaviors. By extending the traditional definition of entropic force, they demonstrate its influence in simulated examples of tool use, cooperation, and even the stabilization of an upright pendulum.

The familiar concept of entropy, which states that systems are biased to evolve towards greater disorder, gives little indication of exactly how they evolve. Recently, physicists have begun to explore the idea that proceeding in the direction of maximum instantaneous entropy production is only one among many ways to go. More generally, the authors now suggest that systems which show intelligence uniformly maximize the total entropy produced over their entire path through configuration space between the present time and some future time.

In accordance with Fermat's original principle, for the simple case where light travels in a constant medium, the path which minimizes time is a straight line. If, however, the second point under consideration lies within a different medium, the quickest path divides the travel time between the two media according to their refractive indices. By analogy to Fermat, this new and more general view of thermodynamic systems looks at the total path, rather than just the current state of the system.
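The two-media case can be made concrete with a short worked derivation. The symbols are introduced here purely for illustration and do not come from the paper: light starts a height $a$ above a flat interface in a medium of index $n_1$, ends a depth $b$ below it in a medium of index $n_2$, the two points are a horizontal distance $d$ apart, and the ray crosses the interface at horizontal position $x$. The travel time, and the condition for it to be a minimum, are then

```latex
T(x) = \frac{n_1}{c}\sqrt{a^2 + x^2} + \frac{n_2}{c}\sqrt{b^2 + (d - x)^2}

\frac{dT}{dx}
  = \frac{n_1}{c}\,\frac{x}{\sqrt{a^2 + x^2}}
  - \frac{n_2}{c}\,\frac{d - x}{\sqrt{b^2 + (d - x)^2}}
  = 0
\quad\Longrightarrow\quad
n_1 \sin\theta_1 = n_2 \sin\theta_2
```

Here $\theta_1$ and $\theta_2$ are the angles the ray makes with the normal to the interface on either side, so that $\sin\theta_1 = x/\sqrt{a^2 + x^2}$ and $\sin\theta_2 = (d - x)/\sqrt{b^2 + (d - x)^2}$. The result is Snell's law, obtained by asking which whole path is best rather than which direction is best at each instant.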

The first author on the paper, Alex Wissner-Gross, describes intelligent behavior as a way to maximize the capture of possible future histories of a particular system. Starting from a formalism known as the 'canonical ensemble' (which is basically a probability distribution of states), the authors ultimately derive a measure they call causal entropic forcing. When following a causal path, entropy is based not on the internal arrangements accessible to a system at any particular time, but rather on the number of arrangements it could pass through on the way to possible future states.
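In schematic form, the two central quantities can be written as follows. The notation is our reconstruction and should be checked against the paper itself: $\mathbf{X}$ is the present macrostate, $\tau$ the time horizon, $\mathbf{x}(t)$ a possible path over that horizon, and $T_c$ a constant setting the strength of the force.

```latex
S_c(\mathbf{X}, \tau)
  = -k_B \int_{\mathbf{x}(t)}
    \Pr\big(\mathbf{x}(t) \mid \mathbf{x}(0)\big)\,
    \ln \Pr\big(\mathbf{x}(t) \mid \mathbf{x}(0)\big)\,
    \mathcal{D}\mathbf{x}(t)

\mathbf{F}(\mathbf{X}_0, \tau)
  = T_c \,\nabla_{\mathbf{X}} S_c(\mathbf{X}, \tau)\,\Big|_{\mathbf{X}_0}
```

The first expression is an entropy taken over whole paths rather than over instantaneous states. The second says the system is pushed in whichever direction increases that path entropy fastest.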

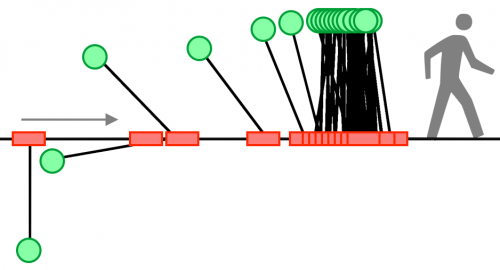

In a practical simulation of a particle in a box, for example, the effect of causal entropic forcing is to keep the particle in a relatively central location. This effect can be understood as the system maximizing the diversity of causal paths that would be accessible by Brownian motion within the box. The authors also simulated different-sized disks diffusing in a 2D geometry. With application of the causal forcing function, the system rapidly produced behaviors in which larger disks "used" smaller disks to release other disks from trapped locations. In different scenarios of this general paradigm, disks cooperated to achieve seemingly improbable results.
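The particle-in-a-box result can be mimicked with a toy model. The sketch below is not Entropica and not the authors' method: instead of sampling Brownian paths in a continuous box, it counts discrete lattice paths in a one-dimensional box and uses the logarithm of that count as a stand-in for the causal path entropy. All function names and parameters are hypothetical.

```python
import math


def log_path_count(start, width, horizon):
    """Log of the number of lattice paths of `horizon` steps (each step is
    -1, 0 or +1) that start at `start` and never leave sites 0..width-1.
    Used here as a crude proxy for causal path entropy (an assumption)."""
    counts = [0] * width
    counts[start] = 1
    for _ in range(horizon):
        nxt = [0] * width
        for x, c in enumerate(counts):
            if c == 0:
                continue
            nxt[x] += c                  # stay in place
            if x > 0:
                nxt[x - 1] += c          # step left, if still inside the box
            if x < width - 1:
                nxt[x + 1] += c          # step right, if still inside the box
        counts = nxt
    return math.log(sum(counts))


def entropic_step(x, width, horizon):
    """Greedy stand-in for the entropic force: move to whichever of the
    neighbouring sites (or the current one) keeps the most future paths open."""
    candidates = [c for c in (x - 1, x, x + 1) if 0 <= c < width]
    return max(candidates, key=lambda c: log_path_count(c, width, horizon))


def simulate(start, width, horizon, n_steps):
    """Return the list of visited sites, starting from `start`."""
    trajectory = [start]
    for _ in range(n_steps):
        trajectory.append(entropic_step(trajectory[-1], width, horizon))
    return trajectory
```

Calling `simulate(0, 11, 6, 8)` walks the particle from the left wall to the central site 5 and then holds it there, because paths starting near a wall are cut off sooner and so fewer of them survive. The greedy site-by-site move is a simplification chosen for brevity; the paper describes a continuous force rather than a discrete choice.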

Many of these kinds of behaviors might also be compared to activities we now know exist in the normal biochemical operations of cells. For example, enzymes use complex changes in conformation, and various small cofactors, to manipulate proteins and other molecules. The nucleus extrudes mRNAs through pores against entropic forces which tend to hold the polymer coiled up in the interior. The speed and efficiency with which machines like ribosomes and polymerases operate suggest that effects other than pure Brownian motion are responsible for delivering their substrates and subsequently binding them with the selectivity that is observed.

The biggest imaginative leap of the paper involved simulating a rigid, inverted pendulum stabilized by a translating cart. The authors suggest that when operating under a causal forcing function, this system bears a rudimentary resemblance to upright walking. While this example may appear more relevant to finding new ways to program walking robots than to understanding the transition to walking in hominids, the overall diversity of behaviors modeled with this formalism does not fail to impress.

Researchers now have a new tool for probing the possible origins of the spontaneous emergence of complex behaviors. New methods of solving traditional challenges in artificial intelligence may also be investigated. Programming machines to play games like Go, where humans still appear to have the edge, might also make use of these methods. The Entropica simulation software is available in demo form from the authors' website, as are other materials related to their new paper.

More information: Causal Entropic Forces, Phys. Rev. Lett. 110, 168702 (2013) prl.aps.org/abstract/PRL/v110/i16/e168702

Abstract

Recent advances in fields ranging from cosmology to computer science have hinted at a possible deep connection between intelligence and entropy maximization, but no formal physical relationship between them has yet been established. Here, we explicitly propose a first step toward such a relationship in the form of a causal generalization of entropic forces that we find can cause two defining behaviors of the human "cognitive niche"—tool use and social cooperation—to spontaneously emerge in simple physical systems. Our results suggest a potentially general thermodynamic model of adaptive behavior as a nonequilibrium process in open systems.

Journal information: Physical Review Letters

© 2013 Phys.org