Image processing: The human (still) beats the machine

(PhysOrg.com) -- A novel experiment conducted by researchers at Idiap Research Institute and Johns Hopkins University highlights some of the limitations of automatic image analysis systems. Their results were recently published in the early online edition of the Proceedings of the National Academy of Sciences.

Anyone with a relatively new digital camera has experienced it: the system that is supposed to automatically identify faces and smiles sometimes doesn’t work quite right. Patterns in a photo of a bookshelf or of leaves on a tree are often mistaken for faces.

Behind this nearly universal gadget are the results of years of “computer vision” research. When you frame a scene, the camera divides it into many small zones and tries to identify subtle differences in hue. A dark, vaguely horizontal band can indicate eyes and eyebrows – or the empty space above a series of books.
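To see why a bookshelf can fool such a system, here is a minimal sketch of a single zone comparison. It is not the algorithm of any particular camera. It assumes grayscale brightness rather than hue, and the function names and tiny 4x4 patches are hypothetical, chosen only for illustration.

```python
def zone_mean(image, top, left, height, width):
    """Average brightness of a rectangular zone (0 = black, 255 = white)."""
    total = 0
    for row in image[top:top + height]:
        total += sum(row[left:left + width])
    return total / (height * width)


def dark_band_score(image, top, left, height, width):
    """Brightness of the zone just below a band, minus the band itself.

    A large positive score means the band is darker than what lies beneath
    it. That holds for eyes above cheeks, but equally for the shadowed gap
    above a row of books.
    """
    band = zone_mean(image, top, left, height, width)
    below = zone_mean(image, top + height, left, height, width)
    return below - band


# Hypothetical grayscale patches (illustrative values only).
face_like = [
    [40, 40, 40, 40],
    [40, 40, 40, 40],
    [200, 200, 200, 200],
    [200, 200, 200, 200],
]
bookshelf_gap = [
    [30, 30, 30, 30],
    [30, 30, 30, 30],
    [180, 180, 180, 180],
    [180, 180, 180, 180],
]
flat_wall = [[120] * 4 for _ in range(4)]

face_score = dark_band_score(face_like, 0, 0, 2, 4)
shelf_score = dark_band_score(bookshelf_gap, 0, 0, 2, 4)
wall_score = dark_band_score(flat_wall, 0, 0, 2, 4)
```

On these made-up patches the face-like and bookshelf scores are both high and close together, while the flat wall scores zero. A detector relying on this one measurement could not tell the first two apart.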

How can the camera make such glaring errors, mistakes that no human would ever commit? To try to grasp the mechanisms at work in the image analysis process, François Fleuret, Senior Scientist at EPFL and researcher at the Idiap Research Institute in Martigny, has developed, along with colleagues from Johns Hopkins University, a “simple” contest in which humans and machines compete. The experiment and its results have just been published in the advance online edition of PNAS (Proceedings of the National Academy of Sciences).

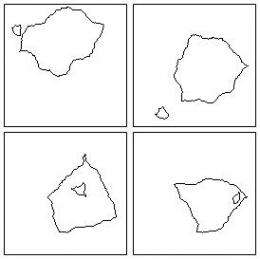

The candidates were presented with a series of small, square black and white images of random shapes, and asked to classify them into two “families,” discovering for themselves the classification criteria. The criterion might be, for example, whether one shape is inside another or whether the two are side by side.

While the solution was often obvious for humans, who would understand the trick after just a few images, the computers frequently had to be shown several thousand examples before reaching a satisfactory result. Even worse, one of the 24 puzzles couldn’t be figured out using machine analysis at all.

“We should remember that humans have had decades of experiential learning, in which they’re perceiving dozens of images per second, not to mention their genetic background. The computers are basically ‘blank slates’ in comparison,” Fleuret remarks. By simplifying the images as much as possible, the scientists wanted to identify the main weaknesses of machine learning. “What we found, in a general sense, was that humans jump immediately to a semantic level of image analysis,” he continues. “He or she will say which pair of images is more crowded than another pair, where the computer will compare, for example, numerical values associated with the pixel density in a given perimeter.”
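The contrast Fleuret describes can be made concrete with a small sketch. It assumes binary images (1 for a black pixel, 0 for white) and a very simple learner that picks a pixel-density cut-off from labelled examples. The function names and the toy images are hypothetical, and this is not the method used in the study.

```python
def pixel_density(image):
    """Fraction of black pixels (1 = black, 0 = white) in a binary image."""
    pixels = [p for row in image for p in row]
    return sum(pixels) / len(pixels)


def fit_threshold(examples):
    """Pick the density cut-off that misclassifies the fewest examples.

    `examples` is a list of (image, label) pairs with at least two entries;
    label 1 means "crowded", label 0 means "sparse".
    """
    scored = sorted((pixel_density(img), label) for img, label in examples)
    values = [density for density, _ in scored]
    # Candidate cut-offs: midpoints between neighbouring densities.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(cutoff):
        return sum((density > cutoff) != bool(label) for density, label in scored)

    return min(candidates, key=errors)


def is_crowded(image, threshold):
    """Classify an image using only its pixel density."""
    return pixel_density(image) > threshold


# Hypothetical 4x4 training images (illustrative only).
sparse_a = [[0, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
sparse_b = [[1, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 1], [0, 0, 0, 0]]
crowded_a = [[1, 1, 0, 1], [1, 0, 1, 1], [0, 1, 1, 0], [1, 1, 0, 1]]
crowded_b = [[1, 1, 1, 1], [1, 0, 1, 1], [1, 1, 1, 0], [1, 1, 0, 1]]

training = [(sparse_a, 0), (sparse_b, 0), (crowded_a, 1), (crowded_b, 1)]
threshold = fit_threshold(training)

unseen = [[1, 1, 1, 0], [0, 1, 1, 1], [1, 0, 1, 1], [1, 1, 1, 0]]
unseen_is_crowded = is_crowded(unseen, threshold)
```

The learner never forms the idea of “crowded.” It only finds a number that separates the examples it was given, which is why a criterion that cannot be reduced to such a number is much harder for it.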

The experiment gave the researchers a glimpse into the “black box” of how the intelligence of a supposedly self-taught machine develops. “It’s the first time that we have been able to precisely, and on an identical task, quantify and compare the performance of classical learning algorithms and humans,” adds Fleuret. The scientists were also able to confirm that increasing the number and variety of measurements made on the image, upon which learning depends, raised the machines’ success rate. “When classifying the image depends on the relative placement of shapes in the image, machine learning has a really hard time,” Fleuret comments. “This justifies the current trend in the field to invent algorithms that are designed to identify individual parts of the image and their relative position.”

The speed of the human brain, its ability to instantly “reconstruct” an entire object even when part of it is hidden, and its ability to find connections between extremely variable parameters while taking the temporal dimension into account (recognizing a person by clothing and gait, for example, instead of by face) all give it a huge advantage over machines in the area of image analysis. At their own pace, however, electronic devices will continue to benefit from improving techniques and processor speeds to get even better at decoding the world.

More information: Comparing machines and humans on a visual categorization test, Published online before print October 17, 2011, doi:10.1073/pnas.1109168108

Provided by Ecole Polytechnique Federale de Lausanne