February 9, 2007 feature

Can expert reasoning be taught?

In addition to mastering a large body of knowledge, successful researchers must acquire a host of high-level cognitive skills: critical thinking, "framing" a problem, ongoing evaluation of the solution as it progresses, and ruthless validation of one's final answer. Some students pick these skills up on their own as they advance towards their degree, especially those who participate in research, but they rarely appear in a curriculum.

Professor Craig Ogilvie of Iowa State University has developed a problem-solving environment that not only encourages students to practice these skills but also monitors their progress.

As a physics professor, I often find myself torn between competing educational goals. On the one hand, most courses have a laundry list of fundamental theories and techniques that must be taught if the students are to advance further in the subject. On the other hand, there are a number of higher cognitive skills that I would also like to emphasize. The cognitive skills are more useful in life, but how much subject matter can I reasonably sacrifice to make room for teaching them?

Traditional teaching methods reinforce the course content by assigning busy work — practice makes perfect, after all. Homework assignments consist of simple "plug and chug" problems that students can solve easily by finding the appropriate formula. While such assignments do help students learn the main topics of a course and prepare for the inevitable final exam, they promote a very limited style of problem-solving. More importantly, they provide little motivation for students to absorb the lessons of scientific thought.

One solution is to raise the bar on the problems. Why not strip them of their hand-holding language, and present information in a more realistic setting?

The result may be too difficult for one student, but is probably suitable for a group of students. Researchers in physics education at the University of Minnesota, for example, have created an archive of such context-rich problems for their introductory physics courses [1]. Context-rich problems force students to practice some of the cognitive skills used by experts, in particular the skill of analyzing a problem qualitatively before looking for the proper formula.

I don’t wish to bore my readers, so I will present the briefest possible example of this qualitative analysis. When confronted with a collision problem, students should ask themselves whether friction is an important factor before they look up Newton's laws. Figuring out how a problem relates to known principles is the first step taken by experts when they approach a new situation.

"Students who are used to working on well-structured problems struggle when confronted with the multiple challenges of more complex tasks." writes Professor Ogilvie (Iowa State University), who not only assigns context-rich problems in his course but has also begun researching their effect on the problem-solving strategies adopted by students. The problems are implemented in a computer-assisted learning environment of his own design.

When a student group logs into the system, it is presented with a context-rich problem and a selection of information resources that may or may not be relevant. The students are asked not just to write the solution but also to describe their thought process in categories corresponding to the typical stages of expert reasoning: qualitative analysis, identification of relevant concepts, ongoing monitoring (evaluation of the solution as it progresses), and validation of the solution obtained. The beauty of this system is that it not only guides students into a more "expert" mode but also tracks the time spent at each step, the resources accessed, and so on.
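For readers curious how this kind of tracking might be turned into a number, here is a minimal Python sketch. It is not Ogilvie's software: the log format, the function name `lead_times`, and the timestamps are all invented for illustration. It assumes only that the system records when each stage of reasoning was completed and when the assignment was submitted, and it computes how many minutes before submission each stage was finished.

```python
from datetime import datetime

# Hypothetical log for one student group: (stage, time the stage was completed).
# The stage names follow the article; the timestamps are invented.
events = [
    ("qualitative analysis", datetime(2007, 1, 15, 14, 5)),
    ("relevant concepts", datetime(2007, 1, 15, 14, 12)),
    ("monitoring", datetime(2007, 1, 15, 14, 24)),
    ("validation", datetime(2007, 1, 15, 14, 25)),
    ("submitted", datetime(2007, 1, 15, 14, 25)),
]


def lead_times(events):
    """Return minutes between each stage's completion and final submission."""
    times = dict(events)  # copy into a dict so the input list is left untouched
    submitted = times.pop("submitted")
    return {
        stage: (submitted - finished).total_seconds() / 60
        for stage, finished in times.items()
    }


for stage, minutes in lead_times(events).items():
    print(f"{stage}: completed {minutes:.0f} min before submission")
```

A large lead time for a stage means the group worked through it early; a stage finished in the last minute or two was probably filled in as an afterthought.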

So, has this learning environment helped train a new generation of experts?

The answer is yes, with some caveats. First, as with any group project, it is hard to track which students are working and which ones are learning. Second, Ogilvie's course presents only five such problems to the students. (There is still all the core material to get through, after all!)

As for the results, there is some good news and some bad news.

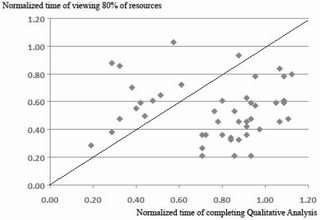

In the first problem of the course, students completed the "qualitative analysis" section an average of ten minutes before completing the assignment. In the last problem, this section was completed about twenty minutes beforehand. Another encouraging point is that very few students wasted time reading all the available information in later problems. "Taken together, the student groups show some progression towards expert-like behavior," Ogilvie writes, "earlier qualitative analysis and more selective requests for information." By the end of the course, students were also identifying the most relevant concepts earlier.

In other words, the students grew more likely to think about the problem before attacking it. That's the good news!

The bad news is that there is no evidence for improvement in one of the most important aspects of expert reasoning: ongoing monitoring. Monitoring is a form of critical thinking, the general (and highly useful) cognitive skill of evaluating the quality of information. Experts in a field will examine their solutions repeatedly as they work them out. Have any of their initial assumptions been violated along the way? Is the solution making progress, or is it getting sidetracked? Is the math becoming simpler or more complicated? If an expert senses that a solution isn't working well, they may abandon it to look for a better approach.

In all fairness, this is also probably the most difficult skill to measure. Computer tracking can only show that students usually filled in the "monitoring" summary right before completing the assignment.

No doubt this skill can be taught as well, but it might lie beyond the scope of computer instruction. I still vividly remember one of my professors taking only ten minutes to solve a physics problem that had taken me hours to work out. Perhaps the lesson of monitoring solutions can only be learned by sincerely regretting the time you just wasted.

Note: [1] groups.physics.umn.edu/physed/ … rch/CRP/crintro.html

Citation: “Understanding Student Pathways in Context-rich Problems” by Pavlo Antonenko, John Jackman, Piyamart Kumsaikaew, Rahul Marathe, Dale Niederhauser, Craig Ogilvie, and Sarah Ryan is available online at xxx.arxiv.org/abs/physics/0701284

By Ben Mathiesen, Copyright 2007 PhysOrg.com.
