Self-driving car will get smarter

(PhysOrg.com) -- Although Cornell's self-driving car didn't win the DARPA Urban Challenge in 2007, it is alive and well and about to become safer and more talented: it will soon be a test bed for new research in robotics and artificial intelligence (AI).

"We'll be looking at the next level of intelligence in the vehicle," said Mark Campbell, associate professor of mechanical and aerospace engineering and the lead researcher for a new $1,473,121, three-year grant from the National Science Foundation under NSF's Cyber-Physical Systems program using federal stimulus funding from the American Recovery and Reinvestment Act (ARRA). As of Oct. 1 Cornell has received 109 ARRA grants totaling more than $93 million.

"In the near term there will be elements of the research that can be integrated into safety systems in human-operated vehicles," Campbell added, noting that while robots are not as intelligent as humans, they can react far more swiftly. Such systems could warn of collisions or take evasive action, recognize if a driver is impaired or asleep, and assist handicapped drivers.

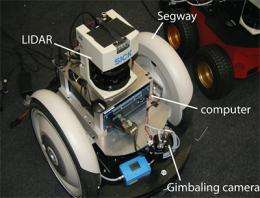

The researchers will also work with self-driving Segway transporters, which will be programmed to work together as teams for mapping and search-and-rescue applications.

Hadas Kress-Gazit, assistant professor of mechanical and aerospace engineering, and Daniel Huttenlocher, the John P. and Rilla Neafsey Professor of Computing, Information Science and Business and dean of computing and information science, are co-principal investigators for the project.

Campbell and Huttenlocher were advisers to the team of Cornell students who built Skynet, Cornell's entry in the DARPA (Defense Advanced Research Projects Agency) Urban Challenge. The car, a converted Chevy Tahoe named for the AI in the Terminator movies, was one of six vehicles, out of 11 finalists and 35 semifinalists, to successfully complete the DARPA race by driving autonomously over about 55 miles of city streets, obeying traffic laws and merging with and passing other robotic cars as well as cars driven by humans. DARPA hopes to develop self-driving vehicles to replace human drivers in hazardous environments such as war zones. Civilian applications include mining, farming, disaster response and remote exploration.

But autonomous vehicles still aren't smart enough, Campbell said. The DARPA challenge was run on a closed, controlled course. "They didn't have pedestrians or people on bicycles," Campbell pointed out. "Our car could not drive autonomously through Collegetown right now."

The present car uses sensor data from radar, lidar and video cameras to build a "model" of its environment -- something human drivers also do, Campbell pointed out -- but the model is still quite simple. It's designed to avoid collisions, but it still can make mistakes, such as failing to distinguish between another car and a cement barrier -- a mistake Skynet made in the DARPA challenge. To intelligently drive through a place like Collegetown, the environmental model must be created in real time, Campbell said, and distinguish between small cars and big trucks, not to mention pedestrians and even pedestrians towing rolling suitcases.

Then the car must anticipate changes and plan accordingly -- in other words, it has to practice defensive driving. This raises issues of trust, Campbell said. "A human driver trusts that the oncoming car will stay in its lane, that others are going to stop at an intersection -- but if the other car swerves or doesn't stop, a human driver can and usually does adapt," he explained.

The level of trust you assign to others can never be 100 percent; the car must make driving decisions based on this "probabilistic" information. "If you try to anticipate all the possibilities before you decide on an action, you'll never move," Campbell said. Ultimately, he said, the car must drive safely and intelligently even in the presence of uncertainty.

Provided by Cornell University