Earthquake simulation tops one quadrillion flops

A team of computer scientists, mathematicians and geophysicists at Technische Universitaet Muenchen (TUM) and Ludwig-Maximilians-Universitaet Muenchen (LMU) has, with the support of the Leibniz Supercomputing Center of the Bavarian Academy of Sciences and Humanities (LRZ), optimized the SeisSol earthquake simulation software on the SuperMUC high performance computer at the LRZ, pushing its performance beyond the "magical" one petaflop/s mark: one quadrillion floating point operations per second.

Geophysicists use the SeisSol earthquake simulation software to investigate rupture processes and seismic waves beneath the Earth's surface. Their goal is to simulate earthquakes as accurately as possible, both to be better prepared for future events and to better understand the fundamental underlying mechanisms. However, the calculations involved in this kind of simulation are so complex that they push even supercomputers to their limits.

In a collaborative effort, the workgroups led by Dr. Christian Pelties at the Department of Geo and Environmental Sciences at LMU and Professor Michael Bader at the Department of Informatics at TUM have optimized the SeisSol program for the parallel architecture of the Garching supercomputer "SuperMUC", thereby speeding up calculations by a factor of five.

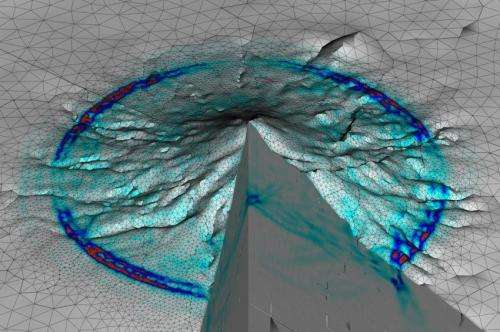

In a virtual experiment they achieved a new record on the SuperMUC: to simulate the vibrations inside the geometrically complex Merapi volcano on the island of Java, the supercomputer executed 1.09 quadrillion floating point operations per second. SeisSol maintained this unusually high performance level throughout the entire three-hour simulation run, using all of SuperMUC's 147,456 processor cores.

Complete parallelization

This was possible only after extensive optimization and complete parallelization of the 70,000 lines of SeisSol code, which allow a peak performance of up to 1.42 petaflops. This corresponds to 44.5 percent of SuperMUC's theoretically available capacity, making SeisSol one of the most efficient simulation programs of its kind worldwide.
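The figures quoted above can be related to one another with some simple arithmetic. The following Python sketch is only an illustration, not part of SeisSol or of the team's analysis. It assumes that the 44.5 percent refers to the 1.42 petaflops peak figure and that the run lasted exactly three hours; the variable and function names are made up for this example.

```python
# Back-of-the-envelope arithmetic using only the figures quoted in the article.
# Assumptions (not stated explicitly in the article): the 44.5 percent refers
# to the 1.42 petaflops peak, and the run lasted exactly three hours.

SUSTAINED_PFLOPS = 1.09   # sustained rate during the Merapi simulation
PEAK_PFLOPS = 1.42        # best rate reached by SeisSol
PEAK_FRACTION = 0.445     # share of the machine's theoretical capacity
CORES = 147_456           # processor cores used
RUN_HOURS = 3             # duration of the simulation run


def implied_machine_peak(achieved_pflops: float, fraction: float) -> float:
    """Theoretical capacity implied by an achieved rate and its share of peak."""
    return achieved_pflops / fraction


machine_peak = implied_machine_peak(PEAK_PFLOPS, PEAK_FRACTION)
sustained_fraction = SUSTAINED_PFLOPS / machine_peak
per_core_gflops = SUSTAINED_PFLOPS * 1e15 / CORES / 1e9
total_operations = SUSTAINED_PFLOPS * 1e15 * RUN_HOURS * 3600

print(f"Implied theoretical capacity: {machine_peak:.2f} petaflops")
print(f"Sustained share of capacity:  {sustained_fraction:.1%}")
print(f"Sustained rate per core:      {per_core_gflops:.2f} gigaflops")
print(f"Operations in the whole run:  {total_operations:.3e}")
```

Under these assumptions, the sustained 1.09 petaflops amounts to roughly a third of the machine's theoretical capacity, or a little over 7 gigaflops per core.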

"Thanks to the extreme performance now achievable, we can run five times as many models or models that are five times as large to achieve significantly more accurate results. Our simulations are thus inching ever closer to reality," says the geophysicist Dr. Christian Pelties. "This will allow us to better understand many fundamental mechanisms of earthquakes and hopefully be better prepared for future events."

The next steps are earthquake simulations that include rupture processes on the meter scale as well as the resultant destructive seismic waves that propagate across hundreds of kilometers. The results will improve the understanding of earthquakes and allow a better assessment of potential future events.

"Speeding up the simulation software by a factor of five is not only an important step for geophysical research," says Professor Michael Bader of the Department of Informatics at TUM. "We are, at the same time, preparing the applied methodologies and software packages for the next generation of supercomputers that will routinely host the respective simulations for diverse geoscience applications."

More information: In addition to Michael Bader and Christian Pelties, Alexander Breuer, Dr. Alexander Heinecke and Sebastian Rettenberger (TUM) as well as Dr. Alice Agnes Gabriel and Stefan Wenk (LMU) worked on the project. The results will be presented in June at the International Supercomputing Conference in Leipzig (ISC'14, Leipzig, June 22-26, 2014; title: Sustained Petascale Performance of Seismic Simulation with SeisSol on SuperMUC).

Provided by Technical University Munich