September 24, 2013 report

Robot knows who wants one for the road

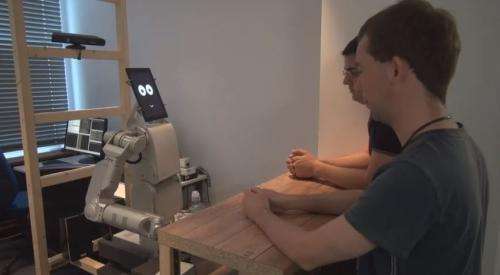

(Phys.org) JAMES has a head that is actually a tablet. JAMES is an efficient waiter, yet it has only one arm. JAMES can read your body language to know you want a drink without your saying a word. Perhaps the biggest surprise of all, however, is that the EU is funding this project. JAMES stands for Joint Action in Multimodal Embodied Systems. The project started in 2011 and continues until January 2014. Its research and results should help scientists understand how robots can assist people in meaningful ways. Beyond rescue missions, intelligence-gathering and warfare, robots may be useful partners in daily life, and JAMES offers interesting clues as to what can be accomplished in robot-human interaction.

The stated goal of the project is to develop an artificial embodied agent that "supports socially appropriate, multi-party, multimodal interaction." Its researchers want to enhance cognitive capabilities so that the robot can interact with humans in a way that is socially appropriate. A bartending scenario can truly put such capabilities to the test.

Professor Dr. Jan de Ruiter of the Psycholinguistics Research Group at Germany's Bielefeld University, along with partner teams in Crete, Munich, and Edinburgh, set out to solve the problem of how to use robots as bartenders. A discussion of the work was published August 30 in Frontiers in Psychology, in a paper titled "Automatic detection of service initiation signals used in bars," by Sebastian Loth, Kerstin Huth and Jan P. de Ruiter.

While attempts have been made to design robots as efficient drink-mixers, the thornier challenge is to design robots that can respond to drink orders. One limited approach would be a robot that takes orders entered by a human via touchscreen or smartphone, but the JAMES team has envisioned a robot able to recognize whether a bystander intends to order or not, and to respond accordingly.

One might easily assume that the way most people let the bartender know they want a drink in a crowded, noisy bar is to shout and wave their hand.

The researchers found, however, that it was more important for the robot to understand a different, nonverbal type of body language. The team took a video camera to establishments in Germany and Scotland and recorded people ordering drinks at the bar.

Only 1 in 25 customers waved. Most people signaled nonverbally that they wanted a drink, standing perpendicular to the bar and looking at the bartender. The videos also revealed that people who did not want to order turned away from the bar or spoke with a person next to them.

The robot's hardware is maintained and programmed by Fortiss GmbH in Munich, and findings from the video recordings were a factor in programming JAMES. The robot responds only if it is certain that the person wants to order a drink.
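The cues reported from the bar videos can be read as a simple decision rule. The Python sketch below is only an illustration of that rule, not the JAMES software: the `Observation` fields, the function names and the boolean inputs are all hypothetical, and a real system would have to estimate them from camera data with some measure of confidence.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One person seen near the bar. All field names are hypothetical."""
    positioned_at_bar: bool            # standing at the bar, oriented toward it
    looking_at_bartender: bool         # gaze directed at the bartender
    turned_away: bool = False          # body turned away from the bar
    talking_to_neighbor: bool = False  # speaking with the person next to them


def wants_to_order(obs: Observation) -> bool:
    """True only if both ordering cues are present and no contrary cue is seen."""
    if obs.turned_away or obs.talking_to_neighbor:
        return False
    return obs.positioned_at_bar and obs.looking_at_bartender


def customers_to_serve(observations: dict) -> list:
    """Names of the people the robot should respond to, in insertion order."""
    return [name for name, obs in observations.items() if wants_to_order(obs)]


# Hypothetical crowd: only Anna shows both cues and no contrary signal.
crowd = {
    "anna": Observation(positioned_at_bar=True, looking_at_bartender=True),
    "ben": Observation(positioned_at_bar=True, looking_at_bartender=False),
    "cara": Observation(positioned_at_bar=True, looking_at_bartender=True,
                        talking_to_neighbor=True),
}
print(customers_to_serve(crowd))
```

Requiring both cues, and treating any contrary cue as a veto, mirrors the cautious behavior described above: the sketch prefers to miss a customer rather than address someone who does not want a drink.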

A variety of software components have been developed by JAMES project partners and used on the JAMES robot. Many of these components will be released at the end of the project.

The project page lists components that include the Robotics Library, an open source C++ library for robot kinematics, motion planning and control, and Planning with Knowledge and Sensing (PKS), a C++ library for symbolic planning, used on JAMES for high-level social, dialogue, and physical action selection. Third-party software includes Kinect for Windows, used on JAMES for automated speech recognition; OpenCV, an open source computer vision and machine learning software library, used on JAMES for computer vision; and several others.

More information: homepages.inf.ed.ac.uk/rpetrick/projects/james/

Journal information: Frontiers in Psychology

© 2013 Phys.org