Researcher locates 'virtual eyes' to enhance 3-D experience

3D movies are popular this year, with many films adding effects that make viewers feel as though they are part of the action. But what if 3D technology in movies were not just a feature, but an entire, enveloping experience?

The key may lie in a user-tailored system, seen through a pair of ultra-advanced 3D glasses.

Focused on making virtual reality more intuitive and realistic, Kevin Ponto, Living Environments Laboratory researcher and assistant professor in the School of Human Ecology, has developed a way to personalize the viewing parameters for people experiencing 3D simulations.

In fields such as military training or surgery, Ponto's idea of personalized technology may make it easier for a person to become completely absorbed in an alternate reality, which could lead to major improvements in how those fields use simulation.

"As far as the medical field goes, practitioners talk about 'warming up,'" Ponto says. "Athletes don't just go out there and run on the court and start playing—they warm up, they stretch. They've shown that surgeons, if they actually warm up, do much better jobs."

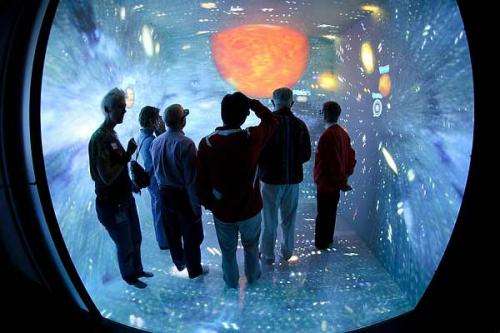

Most 3D projections and glasses are calibrated in a one-size-fits-all fashion similar to what you find in movie theaters. But in a recent pilot study in the Living Environments Laboratory's (LEL) six-sided immersive CAVE, Ponto found that people's "virtual eyes" were positioned in different areas of the head than where their actual eyes and glasses were located.

Here's how it works: 3D images are created by the overlap of two different projections, one intended for each eye. The projectors' placement of the images on the wall and the distance between a person's eyes both affect the depth, clarity and overall perception of the image. The system itself "tracks" the movement of the user, ensuring that the projections appear on the wall in accordance with the person's location. Since each person has a different height, distance between the eyes and even perception, Ponto says individual calibrations may be necessary to produce the most authentic 3D imaging.
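The geometry behind that description can be illustrated with a minimal Python sketch. It is not the software used in Ponto's study: it assumes a single flat wall at a fixed depth, eyes offset horizontally from one tracked head position, and measurements in meters, and every name in it (`Vec3`, `project_to_wall`, `stereo_disparity`) is hypothetical. It shows only how the on-wall separation between the two projections of a virtual point depends on the assumed eye separation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    """A point in room coordinates (meters); z points toward the wall."""
    x: float
    y: float
    z: float


def project_to_wall(eye: Vec3, point: Vec3, wall_z: float) -> tuple[float, float]:
    """Return where the ray from `eye` through `point` meets the plane z = wall_z."""
    dz = point.z - eye.z
    if dz == 0:
        raise ValueError("point must not lie at the same depth as the eye")
    t = (wall_z - eye.z) / dz
    return (eye.x + t * (point.x - eye.x), eye.y + t * (point.y - eye.y))


def stereo_disparity(head: Vec3, eye_separation: float, point: Vec3, wall_z: float) -> float:
    """Horizontal offset on the wall between the right-eye and left-eye images.

    Assumption: both eyes sit at the tracked head position, shifted left and
    right by half of `eye_separation`.
    """
    half = eye_separation / 2.0
    left_eye = Vec3(head.x - half, head.y, head.z)
    right_eye = Vec3(head.x + half, head.y, head.z)
    left_x, _ = project_to_wall(left_eye, point, wall_z)
    right_x, _ = project_to_wall(right_eye, point, wall_z)
    return right_x - left_x


if __name__ == "__main__":
    head = Vec3(0.0, 1.6, 0.0)      # tracked head position (illustrative values)
    target = Vec3(0.0, 1.6, 4.0)    # virtual point 2 m behind a wall at z = 2
    for separation in (0.058, 0.064, 0.070):
        offset = stereo_disparity(head, separation, target, wall_z=2.0)
        print(f"eye separation {separation:.3f} m -> on-wall offset {offset:.4f} m")
```

In this simplified model, a point lying exactly on the wall has zero offset regardless of eye separation, while points in front of or behind the wall need different offsets for different viewers. That is one way to see why a single default calibration may not suit everyone.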

In the study, Ponto changed the placement of virtual reality projections and located participants' "virtual eyes," the calibration that allowed them to best perform a task with real and virtual objects in the CAVE. Ponto set up a triangle of points in virtual reality space: one for each of a person's eyes and a third ahead as a point of focus in the CAVE. Ponto says the small study's results suggest that some participants' virtual eyes are wider and deeper in the head than the positioning of their real eyes.

If replicated, the findings may prove useful for industries that depend on design to provide a detailed view of products, models, buildings and machinery through 3D images. For instance, more accurate virtual reality can help ensure that a design is flawless before it goes to manufacturing, which saves companies time and money.

Ponto's research was nominated for best paper at the Institute of Electrical and Electronics Engineers Virtual Reality Conference in March 2013. In the future, he hopes to recreate these results with different virtual reality technologies, such as a head-mounted display (HMD). As opposed to the CAVE, which projects images on a wall, an HMD is a small device worn on the head with an optic screen in front of each eye.

If replicated across different technologies, he says the research could confirm that each person's brain may perceive virtual reality differently, making the case for custom-calibrated experiences rather than one-size-fits-all approaches.

Provided by University of Wisconsin-Madison