Model will unlock mysteries of the voice

Swedish researchers are leading the development of the world's first comprehensive model of the human voice, which could contribute to better voice care, voice prosthetics, talking robots and teaching opportunities.

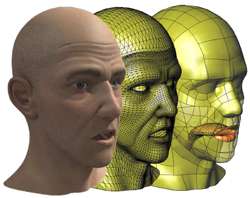

Three research groups from Stockholm's KTH Royal Institute of Technology are collaborating with voice and computing experts at universities and research institutes in France, Germany and Spain on the EUR 3 million Eunison project, which involves physical models and simulated visualisations of the voice.

KTH music acoustics professor Sten Ternström says the project will render the 3-D physics of the voice, including its acoustic output, which would find profound applications in fields such as speech technology, medical research, pedagogy, linguistics and the arts.

"We need a better understanding of how the voice works and how it fails," Ternström says.

The university's "Lindgren" supercomputer will provide the colossal computing resources for the project. "We are talking about fast movements of tens of thousands of points in three dimensions, so there will be many calculations requiring heavy computation," Ternström says.

Though Eunison will rely on supercomputers to create simulations and visualisations, the project will also use mechanical models of the vocal cords, upper respiratory tract and tongue, made from silicone and plastic, to verify that the simulations are correctly calculated.

"The voice is a very complex phenomenon that requires a lot of work to emulate and understand," Ternström says. "So, we are also interested in how much the model can be simplified, without affecting the voice sounds."

Unlike previous research on the voice, Eunison will be an end-to-end look at the voice, combining findings from multiple disciplines. Previous efforts have been fragmentary, and the models that do exist – of parts such as the vocal cords or vocal tract – are simplified in order to avoid the heavy physics calculations, he says.

"Our complete model of the human voice will resemble a puppet," Ternström says. "The scientist pulls on one or more strings, and then we can see what happens."

The voice model will be made operable online, so that researchers anywhere can enter data and get a visualisation.

Provided by KTH Royal Institute of Technology