October 15, 2012 weblog

Magic Finger device suggests new day for calling up content (w/ Video)

(Phys.org)—You can swipe and tap away for content on mobile device screens. What if you didn't have to touch the screen? A group from the University of Alberta, the University of Toronto, and Autodesk Research presented their answer at the ACM Symposium on User Interface Software and Technology (UIST), which took place from Oct. 7-12 in Cambridge, Mass. Their Magic Finger is a thimble-like device that is worn on the user's finger and lets the user's gestures control smart devices such as phones or tablets. "If I am walking down the street and the cellphone is in my pocket, I can make a swiping gesture and execute a function like making a call," Tovi Grossman, a scientist at Autodesk Research, told Discovery. Magic Finger is attached to the fingertip with an adjustable Velcro ring. As a demo video shows, the wearer can issue commands by touching a variety of surfaces, and the resulting content is displayed on the screen.

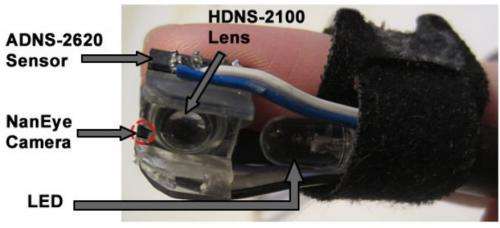

A small plastic casing holds the components: a micro camera and an optical flow sensor, both attached to the finger. Magic Finger senses touch through the optical flow sensor, enabling any surface to act as a touch screen.

For the optical flow sensor, the team used an ADNS 2620 with a modified HDNS-2100 lens. Used in optical mice, the sensor is reliable on a variety of surfaces. It detects motion by examining the optical flow of the surface over which it is moving. To extend the device's sensing, they embedded an AWAIBA NanEye micro RGB camera on the edge of the optical flow sensor. Magic Finger senses texture through the micro RGB camera, allowing actions to be carried out based on the surface being touched.
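To make the motion-sensing idea concrete, here is a minimal sketch in Python of how a stream of per-frame displacement reports, of the kind an optical mouse sensor produces, could be reduced to a swipe direction. It is an illustration only, not the researchers' implementation: the function name `classify_swipe`, the `min_distance` threshold, and the convention that positive y points down are all assumptions made for this example.

```python
from typing import Iterable, Tuple


def classify_swipe(deltas: Iterable[Tuple[int, int]], min_distance: int = 20) -> str:
    """Sum per-frame (dx, dy) motion reports and name the dominant direction.

    Hypothetical helper: the threshold and axis convention are assumptions,
    not values taken from the Magic Finger paper.
    """
    total_x = 0
    total_y = 0
    for dx, dy in deltas:
        total_x += dx
        total_y += dy

    # Too little net motion on both axes: treat the contact as a tap.
    if abs(total_x) < min_distance and abs(total_y) < min_distance:
        return "tap"

    # Otherwise report the axis with the larger net displacement.
    if abs(total_x) >= abs(total_y):
        return "right" if total_x > 0 else "left"
    return "down" if total_y > 0 else "up"
```

A real device would also need to segment the stream into individual touches and filter sensor noise, which this sketch leaves out.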

Interestingly, the Magic Finger can vary the input it sends to a device according to the surface the finger is touching, such as wood or cloth. "While texture has been previously explored as an output channel for touch input we are unaware of work where texture is used as an input channel," the authors said in their paper, "Magic Finger: Always-Available Input through Finger Instrumentation."
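One way to picture texture as an input channel is a lookup from a recognized surface to a command. The Python sketch below assumes a texture classifier has already produced a label such as "wood" or "cloth"; the bindings, the action names, and the function `action_for_texture` are hypothetical and are not taken from the paper.

```python
from typing import Dict, Optional

# Hypothetical bindings from a recognized texture label to a device command.
DEFAULT_BINDINGS: Dict[str, str] = {
    "wood": "open_calendar",
    "cloth": "mute_phone",
}


def action_for_texture(
    texture_label: str,
    bindings: Optional[Dict[str, str]] = None,
    fallback: str = "ignore",
) -> str:
    """Return the command bound to a texture label, or a fallback if unbound."""
    table = DEFAULT_BINDINGS if bindings is None else bindings
    return table.get(texture_label, fallback)
```

Under these assumed bindings, a tap on a wooden desk and a tap on a shirt sleeve would trigger different commands, while an unrecognized surface would be ignored.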

The researchers worked on Magic Finger as a proof-of-concept prototype that enables input through finger instrumentation. And they emphasize that this "instrumentation" is what makes the Magic Finger unique. "We propose finger instrumentation, where we invert the relationship between finger and sensing surface: with Magic Finger, we instrument the user's finger itself, rather than the surface it is touching."

Tests of the device's accuracy are encouraging: Results show that Magic Finger is able to recognize 22 environmental textures or 10 artificial textures with an accuracy of above 99 percent. Overall, their evaluation showed that Magic Finger can recognize 32 different textures with an accuracy of 98.9 percent, allowing for contextual input.

"Accuracy levels indicate that Magic Finger would be able to distinguish a large number of both environmental and artificial textures, and consistently recognize Data Matrix codes," they said.

They also recognized the study's limitations: Their hardware was tethered to a nearby host PC. "This simplified our implementations, but for Magic Finger to become a completely standalone device, power and communication needs to be considered." The interactions that they demonstrated should be treated as "exploratory," they added.

Magic Finger is a research project from Autodesk Research, in collaboration with the University of Toronto. Xing-Dong Yang, Tovi Grossman, Daniel Wigdor and George Fitzmaurice authored the paper, which appears in the proceedings of the ACM UIST 2012 conference.

More information: www.autodeskresearch.com/publications/magicfinger

via Discovery

© 2012 Phys.org