July 11, 2012 report

FACE team seeks to melt 'Uncanny' ice for robot bonds (w/ Video)

(Phys.org) -- A robotics team from the University of Pisa in Italy is challenging the Uncanny Valley theory, made famous by Masahiro Mori's 1970 essay of that name. Mori argued that when robots become too realistic, they turn people off with a feeling of eerie distaste. The Pisa team is intent on showing that robots with human expressions can be, well, liked. They would like to open a new chapter of human-like robots that do not stir up a sense of unease. Their research aims to demonstrate how expressions on robots can be manipulated to be more attractive, so that people can cross over Mori’s dip into unease and creepiness.

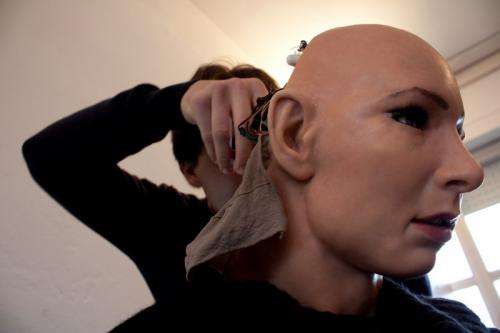

Nicole Lazzeri, a PhD student at the university, and her colleagues have designed a "Hybrid Engine for Facial Expressions Synthesis" (HEFES) - a facial animation engine that gives realistic expressions to a humanoid robot called FACE.

They define HEFES as an engine for generating and controlling facial expressions “both on physical androids and 3D avatars.” HEFES is part of a software library that controls the human-like robot, FACE (Facial Automaton for Conveying Emotions).

HEFES is essentially a mathematical program used to control FACE's expressions. Its algorithm works out which motors need to move to create a particular expression or a transition between two or more expressions.
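To make the idea of "working out which motors need to be moved" concrete, here is a minimal sketch in Python. It is not the HEFES algorithm, which the article does not describe in detail. It assumes, purely for illustration, that a pose is a list of normalized servo positions between 0.0 and 1.0, and the function name `motors_to_move` and the `tolerance` parameter are hypothetical.

```python
# Hypothetical sketch, not the HEFES implementation.
# Assumption: a pose is a list of normalized servo positions (0.0 to 1.0).

def motors_to_move(current, target, tolerance=0.01):
    """Return {motor_index: target_position} for motors that must change."""
    if len(current) != len(target):
        raise ValueError("poses must have the same number of motors")
    return {
        i: t
        for i, (c, t) in enumerate(zip(current, target))
        if abs(t - c) > tolerance  # ignore motors already close enough
    }


if __name__ == "__main__":
    neutral = [0.5, 0.5, 0.5, 0.5]  # made-up four-motor poses
    smile = [0.5, 0.8, 0.8, 0.5]
    print(motors_to_move(neutral, smile))
```

Only the motors whose target differs from the current position are returned, so a controller built this way would leave the rest of the face untouched.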

As the FACE team notes, “The human face is equipped with a complex physical structure and it has more than 100 muscles situated between skin surface and skull with very different shapes and functionality. They allow us to control even minimal muscular movements and to generate a myriad of different facial expressions.”

The robot’s servo motors actuate the “face” in a different way, however. Unlike human muscles, the servos can produce only linear contractions, whereas human orbicular muscles, such as the orbicularis oculi and the orbicularis oris, produce circular contractions. As the team puts it, “The movement of this kind of muscles is reproduced using more than one servo motor in the most realistic way as possible.”
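One way to picture how several linear actuators might approximate a circular contraction is the geometric sketch below. It is an illustration under stated assumptions, not the team's design: it assumes the servos are spaced evenly around a ring and that each pulls its anchor point straight toward the center. The function name `ring_anchor_points` and its parameters are hypothetical.

```python
import math

# Hypothetical geometry, not taken from the FACE robot.
# Assumption: n_servos linear actuators are evenly spaced around a ring,
# and each pulls its anchor point radially toward the ring's center.

def ring_anchor_points(n_servos, rest_radius, contraction):
    """Return (x, y) anchor positions for a contraction fraction in [0, 1]."""
    if n_servos < 1:
        raise ValueError("need at least one servo")
    if not 0.0 <= contraction <= 1.0:
        raise ValueError("contraction must be between 0 and 1")
    radius = rest_radius * (1.0 - contraction)
    points = []
    for k in range(n_servos):
        angle = 2.0 * math.pi * k / n_servos
        points.append((radius * math.cos(angle), radius * math.sin(angle)))
    return points


if __name__ == "__main__":
    for x, y in ring_anchor_points(4, 10.0, 0.5):
        print(round(x, 3), round(y, 3))
```

With more actuators the polygon formed by the anchor points hugs a circle more closely, which is the intuition behind using more than one servo per orbicular muscle.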

To convincingly mimic the range of human expressions that facial muscles support (anger, disgust, fear, happiness, sadness, and surprise), the team placed 32 motors around FACE's skull and upper torso to manipulate its polymer skin the way real muscles do. They also worked to have FACE transition smoothly from one emotion to another.
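A smooth transition between two expressions can be sketched as interpolation between two motor poses. The example below is a generic technique (linear blending with a smoothstep easing curve), offered as an assumption about how such a transition could look rather than as the method HEFES uses. Only the motor count of 32 comes from the article; the pose values and the names `smoothstep`, `blend`, and `transition` are hypothetical.

```python
NUM_MOTORS = 32  # motor count reported in the article

# Hypothetical sketch: poses are lists of normalized positions (0.0 to 1.0).

def smoothstep(t):
    """Ease-in/ease-out curve so motion starts and stops gently."""
    t = min(1.0, max(0.0, t))
    return t * t * (3.0 - 2.0 * t)


def blend(start, end, t):
    """Pose at progress t in [0, 1] between two poses of equal length."""
    if len(start) != len(end):
        raise ValueError("poses must have the same number of motors")
    s = smoothstep(t)
    return [a + (b - a) * s for a, b in zip(start, end)]


def transition(start, end, steps):
    """List of steps + 1 poses, from start to end inclusive."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [blend(start, end, k / steps) for k in range(steps + 1)]


if __name__ == "__main__":
    relaxed = [0.0] * NUM_MOTORS  # made-up poses for illustration
    tensed = [1.0] * NUM_MOTORS
    for pose in transition(relaxed, tensed, 4):
        print(round(pose[0], 3))
```

The easing curve keeps the first and last frames slow, which avoids the abrupt starts and stops that plain linear interpolation would produce.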

Their motor movements are based on the Facial Action Coding System (FACS), created over 30 years ago, which codes facial expressions in terms of muscle movements. Paul Ekman and Wallace Friesen developed FACS, naming the muscle movements facial action units (AUs). A single AU can involve more than one muscle.
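The following sketch shows how an AU-based description of an expression might be turned into a set of motors. The AU numbers and names follow FACS, and the AU combinations are commonly cited prototypes for these emotions rather than anything stated in the article. The AU-to-motor table is entirely made up for illustration and does not reflect FACE's actual wiring.

```python
# AU numbers and names follow FACS.
AU_NAMES = {
    1: "inner brow raiser",
    2: "outer brow raiser",
    4: "brow lowerer",
    5: "upper lid raiser",
    6: "cheek raiser",
    12: "lip corner puller",
    15: "lip corner depressor",
    26: "jaw drop",
}

# Commonly cited prototype combinations (an assumption, not from the article).
EXPRESSION_AUS = {
    "happiness": [6, 12],
    "sadness": [1, 4, 15],
    "surprise": [1, 2, 5, 26],
}

# Hypothetical mapping from AU to motor indices; not FACE's real layout.
AU_TO_MOTORS = {
    1: [0, 1],
    2: [2, 3],
    4: [0, 1, 4],
    5: [5, 6],
    6: [7, 8],
    12: [9, 10],
    15: [11, 12],
    26: [13],
}


def motors_for_expression(name):
    """Return the sorted motor indices involved in a named expression."""
    motors = set()
    for au in EXPRESSION_AUS[name]:
        motors.update(AU_TO_MOTORS[au])
    return sorted(motors)


if __name__ == "__main__":
    for name in EXPRESSION_AUS:
        labels = [AU_NAMES[au] for au in EXPRESSION_AUS[name]]
        print(name, labels, motors_for_expression(name))
```

Because several AUs can share motors, as AU1 and AU4 do in this made-up table, the lookup collects indices in a set before sorting them.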

The FACE team is an interdisciplinary group at the university's Interdepartmental Research Center. Its overall work focuses on what the team calls Emotional Human Robot Interaction, using human-like robots to embody emotional states. Their system has been tested in human-robot interaction studies aimed at helping children with autism interpret their interlocutors’ mood through an understanding of facial expressions.

Their work was presented last month at the IEEE International Conference on Biomedical Robotics and Biomechatronics (BioRob) in Rome.

More information:

Research paper: www.faceteam.it/wp-content/upl … 2012/06/BIOROB12.pdf

Project: www.faceteam.it/

© 2012 Phys.org