July 4, 2012 report

Smart headlights let drivers see between the raindrops

(Phys.org) -- A Carnegie Mellon professor and his team have developed a prototype headlight system, or “smart headlights,” designed to help drivers make their way home safely through a downpour or snowstorm that threatens visibility. In low-light conditions, drivers rely mainly on headlights to see the road, but those same headlights reduce visibility when their light reflects off precipitation back toward the driver. The prototype smart headlights are designed so that the light helps, rather than hinders, the stressed-out driver.

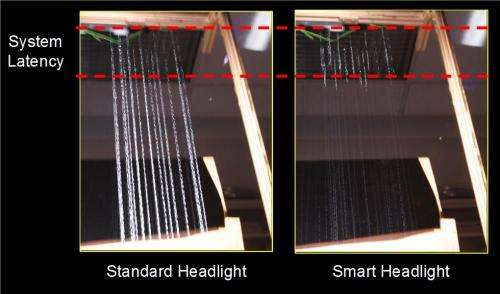

In difficult weather conditions, headlights make raindrops and snowflakes appear as bright, flickering streaks. The university team sought to “dis-illuminate” the particles that cause these distracting streaks: the headlight beams shine around the drops rather than on them. This lets the driver see through the rain and snow and avoid the distressing glare that comes with standard headlights.

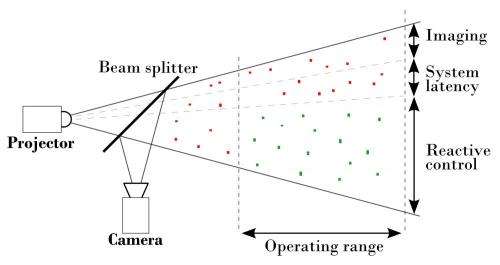

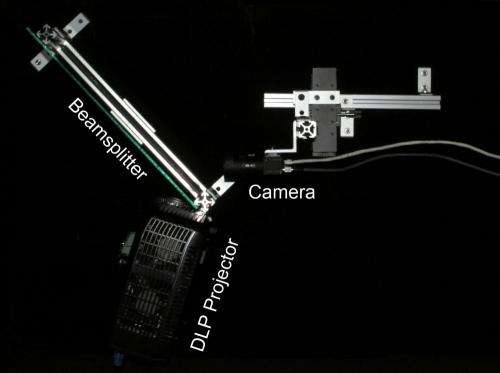

Computer science professor Srinivasa Narasimhan, whose research at Carnegie Mellon focuses on computer vision and computer graphics, wanted to see whether he and his team could stream light in between the drops. Their answer is a co-located imaging and illumination system made up of a camera, a projector, and a beamsplitter. The design integrates an imager and a processing unit with a light source. According to comments on the team's project site, a 50/50 beamsplitter optically co-locates the camera and projector, which eliminates the need for stereo reconstruction, reducing computation and increasing system speed.
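Why co-location removes the need for stereo can be seen with a simplified pinhole model. If the camera and projector sat side by side with parallel optical axes, the projector pixel that lands on a detected drop would be shifted from the camera pixel by a disparity of focal length × baseline ÷ depth, so the system would first have to recover each drop's depth. A beamsplitter makes the baseline effectively zero, so the shift vanishes at every depth. The sketch below assumes identical, ideal optics for camera and projector; the function name `disparity_px`, the 1,000-pixel focal length, and the 10 cm baseline are hypothetical illustration values, not the prototype's parameters.

```python
# Simplified pinhole model (assumption): camera and projector with identical
# intrinsics and parallel optical axes, separated by a horizontal baseline.
# All numeric values below are hypothetical, chosen only for illustration.

def disparity_px(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Pixel shift between the camera view and the projector view of a point
    at the given depth: disparity = focal length * baseline / depth."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


FOCAL_PX = 1000.0  # hypothetical focal length in pixels

for depth in (1.0, 2.0, 4.0):
    side_by_side = disparity_px(FOCAL_PX, 0.10, depth)  # hypothetical 10 cm baseline
    co_located = disparity_px(FOCAL_PX, 0.0, depth)     # beamsplitter: zero baseline
    print(f"depth {depth:.0f} m: side-by-side shift {side_by_side:.0f} px, "
          f"co-located shift {co_located:.0f} px")
```

In the side-by-side case the required correction changes with depth (100, 50, then 25 pixels here), which is exactly the information stereo reconstruction would have to supply; in the co-located case a camera pixel maps to the same projector pixel regardless of how far away the drop is.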

The camera images the precipitation at the top of the field of view. The processor predicts where the drops are headed and signals the headlights, which adjust to dis-illuminate the particles. The entire cycle, from capture to reaction, takes about 13 ms: the system runs at 120 Hz, and the camera uses a 5 ms exposure time.
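A quick calculation with the prototype's figures shows why the processor has to predict where drops are headed rather than simply dim the pixels where they were seen. At 120 Hz a new frame arrives every 8.33 ms, so a 13 ms end-to-end latency means the corrected light appears roughly one and a half frame periods after capture. The sketch below assumes that the quoted 13 ms latency includes the 5 ms exposure; the function name `timing_budget` and its output keys are hypothetical.

```python
# Timing arithmetic for the prototype figures quoted above (120 Hz, 5 ms
# exposure, 13 ms total latency). Function and key names are hypothetical.

def timing_budget(frame_rate_hz: float, exposure_ms: float, latency_ms: float) -> dict:
    """Derive a few timing quantities from frame rate, exposure, and latency."""
    frame_period_ms = 1000.0 / frame_rate_hz
    return {
        "frame_period_ms": frame_period_ms,
        # Number of frame periods between capture and the corrected light.
        "latency_frames": latency_ms / frame_period_ms,
        # Assumption: the quoted latency includes the exposure, so the rest is
        # readout, transfer, processing, and projector update lumped together.
        "post_exposure_ms": latency_ms - exposure_ms,
    }


budget = timing_budget(frame_rate_hz=120.0, exposure_ms=5.0, latency_ms=13.0)
for name, value in budget.items():
    print(f"{name}: {value:.2f}")
```

Under that assumption, about 8 ms of each cycle goes to moving data and reacting rather than to exposure, which is consistent with the team's later point that off-the-shelf components limit data transfer speed.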

One question central to their efforts is how fast the system must be to be effective in a car. “Computer simulations show that a system operating near 1,000 Hz, with a total system latency of 1.5 ms, and exposure time of 1 ms can achieve 96.8% accuracy with 90% light throughput during a heavy rainstorm [25 mm/hr] on a vehicle traveling 30 km/hr. However, 400 Hz with less accuracy will be a significant [>= 70%] improvement for the driver,” according to the researchers.
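To get a feel for why latency matters so much, one can compute how far a drop falls, and how far the car travels, while the system is still reacting. The sketch compares the prototype's 13 ms latency with the simulated design's 1.5 ms, using the 30 km/h vehicle speed from the researchers' simulation. The raindrop fall speed of 9 m/s is an assumption (roughly the terminal speed of a large raindrop), not a figure from the article, and the name `displacement_cm` is hypothetical.

```python
# How far things move during the system latency, assuming constant speeds.
# ASSUMED_DROP_SPEED_M_S is an assumption, not a value from the article.

ASSUMED_DROP_SPEED_M_S = 9.0   # assumed fall speed of a large raindrop
VEHICLE_SPEED_KM_H = 30.0      # vehicle speed used in the researchers' simulation


def displacement_cm(speed_m_s: float, latency_ms: float) -> float:
    """Distance in centimeters covered at constant speed during the latency."""
    return speed_m_s * (latency_ms / 1000.0) * 100.0


vehicle_m_s = VEHICLE_SPEED_KM_H / 3.6  # km/h to m/s

for label, latency_ms in (("120 Hz prototype", 13.0), ("1,000 Hz simulated design", 1.5)):
    drop = displacement_cm(ASSUMED_DROP_SPEED_M_S, latency_ms)
    car = displacement_cm(vehicle_m_s, latency_ms)
    print(f"{label}: drop falls {drop:.1f} cm, car moves {car:.1f} cm")
```

Under these assumptions a drop falls about 11.7 cm during the prototype's 13 ms latency but only about 1.4 cm at 1.5 ms, so the faster system has far less motion to extrapolate and can keep its dark regions small.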

The system's operating range is about 13 feet (4 m) in front of the headlights. In the paper “Fast Reactive Control for Illumination Through Rain and Snow,” the authors Raoul de Charette, Robert Tamburo, Peter Barnum, Anthony Rowe, Takeo Kanade and Srinivasa G. Narasimhan write, “In contrast to recent computer vision methods that digitally remove rain and snow streaks from captured images, we present a system that will directly improve driver visibility by controlling illumination in response to detected precipitation. The motion of precipitation is tracked and only the space around particles is illuminated using fast dynamic control.”
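The idea of tracking particles and lighting only the space around them can be illustrated with a toy model. This is not the authors' algorithm: it assumes each detected drop moves at a constant image velocity, that camera and projector pixels coincide (as the co-located design allows), and that a small square of projector pixels around each predicted position is switched off. The names `Drop`, `predict`, and `illumination_mask` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Drop:
    """A tracked particle in image coordinates (hypothetical representation)."""
    row: float    # image row in pixels, increasing downward
    col: float    # image column in pixels
    v_row: float  # estimated velocity, pixels per millisecond
    v_col: float


def predict(drop: Drop, latency_ms: float) -> tuple[float, float]:
    """Constant-velocity extrapolation of where the drop will be when lit."""
    return drop.row + drop.v_row * latency_ms, drop.col + drop.v_col * latency_ms


def illumination_mask(height: int, width: int, drops: list[Drop],
                      latency_ms: float, radius: int = 1) -> list[list[int]]:
    """Projector pattern: 1 = lit, 0 = dark square around each predicted drop.
    Assumes camera pixel (r, c) maps to projector pixel (r, c)."""
    mask = [[1] * width for _ in range(height)]
    for d in drops:
        r, c = (round(v) for v in predict(d, latency_ms))
        for rr in range(r - radius, r + radius + 1):
            for cc in range(c - radius, c + radius + 1):
                if 0 <= rr < height and 0 <= cc < width:
                    mask[rr][cc] = 0
    return mask


# One drop seen at the top of the frame, falling straight down.
drops = [Drop(row=0.0, col=2.0, v_row=0.4, v_col=0.0)]
mask = illumination_mask(10, 8, drops, latency_ms=13.0)
for line in mask:
    print("".join("#" if lit else "." for lit in line))
```

The drop is detected at row 0, but after 13 ms of assumed motion it is expected near row 5, so the dark patch is placed there rather than where the camera saw it.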

The team used a physics-based simulator to evaluate how their system would perform under a variety of weather conditions. Their simulation results show that it is possible to maintain light throughput well above 90 percent for various precipitation types and intensities. They then demonstrated a proof-of-concept prototype system operating at 120 Hz on laboratory-generated rain, which validated their earlier simulations.
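One way to read “light throughput” is as the fraction of projector pixels that stay lit after the regions around drops are switched off; that definition is an assumption here, and the sketch is not the team's physics-based simulator. It scatters a hypothetical number of drops at random over a hypothetical 640 × 480 projector frame, darkens a 5 × 5 square around each, and counts overlapping dark pixels only once. The name `light_throughput` and all numeric values are illustrative.

```python
import random


def light_throughput(width: int, height: int,
                     drop_pixels: list[tuple[int, int]], radius: int) -> float:
    """Fraction of projector pixels left lit when a (2*radius+1)^2 square
    around each drop pixel is switched off. Overlapping squares count once."""
    dark = set()
    for r, c in drop_pixels:
        for rr in range(max(0, r - radius), min(height, r + radius + 1)):
            for cc in range(max(0, c - radius), min(width, c + radius + 1)):
                dark.add((rr, cc))
    return 1.0 - len(dark) / (width * height)


rng = random.Random(0)   # fixed seed so the run is repeatable
W, H = 640, 480          # hypothetical projector resolution
drops = [(rng.randrange(H), rng.randrange(W)) for _ in range(200)]  # hypothetical drop count
print(f"throughput: {light_throughput(W, H, drops, radius=2):.3f}")
```

Because each drop darkens at most 25 of the 307,200 pixels, 200 drops can remove at most 5,000 pixels, so throughput in this toy setup cannot fall below about 98.4%. The sketch only shows why sparse precipitation leaves most of the beam intact; it says nothing about the accuracy figures the researchers report.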

The prototype's equipment consists of a camera with a Gigabit Ethernet interface (Point Grey Flea3), a DLP projector (ViewSonic PJD62531), and a desktop computer with a quad-core Intel i7 processor.

The team's next steps are to make the system faster and more compact so that it can be tested on a moving platform. They said the research toward those goals may take three to four more years, and “commercializing it as a product will take additional years.”

Because the prototype was built from off-the-shelf components, data transfer is slower than it would be if the components were more closely integrated. Developing specialized hardware that integrates the camera and projector more tightly with a processing unit will bring improvements. They also said more sophisticated algorithms are needed to maximize system speed and to account for factors such as vehicle motion and wind turbulence.

Carnegie Mellon’s Prof. Narasimhan presented the findings this year at Microsoft Research and at Research@Intel 2012.

More information: “Fast Reactive Control for Illumination Through Rain and Snow,” Raoul de Charette, Robert Tamburo, Peter Barnum, Anthony Rowe, Takeo Kanade and Srinivasa G. Narasimhan, Proceedings of the IEEE International Conference on Computational Photography (ICCP), 2012.

© 2012 Phys.org