May 28, 2012 report

Company uses Kinect to create a touchscreen out of any surface (w/ Video)

(Phys.org) -- Ubi Interactive has developed a display system that will convert virtually any surface into a touchscreen display using a conventional projector, a Microsoft Kinect device, and proprietary software running on a Windows-based computer. The company, which is currently just three guys, Anup Chathoth, David Hajizadeh and Chao Zhang, is one of eleven startups that have been given $20,000 by Microsoft to develop Kinect-based applications.

From Microsoft’s perspective, doling out cash to companies that come up with innovative ways to use the Kinect device is a no-brainer. The more applications that use the device, the more devices will be sold, and the more established it becomes, the more entrenched it will be. For companies such as Ubi Interactive, it’s the culmination of several years of high hopes and a lot of work. Prior to adding the Kinect device to their system, they were finding it hard going; small companies making hardware devices to sell to the masses don’t have a good track record. Thus, when Microsoft offered the team money to use the Kinect instead, the team jumped at the chance.

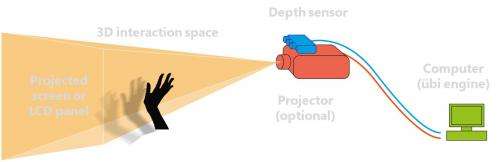

The system appears rather simple at first glance. There is a standard Kinect device and a standard projector of the kind normally used to show PowerPoint presentations on a screen. There’s also a computer, of course, running Windows applications. But that’s where this system diverges from the pack. The company has written software that combines input from the Kinect device and the projector with the movements of a person standing between the projector and the image being displayed. Normally, this would result in nothing more than images being projected onto the hand and shadows on the screen. The same happens here, with one major difference: the hand movements actually control what is being displayed, in essence turning walls or whiteboards into a giant touchscreen tablet.

Better yet is a display surface that is partially see-through, which allows for projecting from behind. In this configuration there is no shadowing and the system really starts to look like an enormous tablet, except that the operating system is Windows and the apps are any and all of those that run on a standard Windows-based computer. The system is also 3D in that it can tell the difference between the back-and-forth hand movements used for swiping and the taps on the display used to make selections. It can also understand more than one point of interaction, meaning two hands can be used to rotate images on the screen, for example.
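Ubi Interactive's software is proprietary, so how it tells a tap from a swipe is not public. As a rough illustration only, the sketch below shows one common way a depth camera could be used for this: compare the fingertip's distance from the camera with the surface's distance, and treat a small gap as a touch. It also shows how two tracked points could yield a rotation angle. The function names and the millimetre thresholds are assumptions made for this example and do not come from the company.

```python
import math

# Assumed thresholds in millimetres, chosen for illustration only.
TOUCH_GAP_MM = 15.0    # fingertip this close to the surface counts as a touch
HOVER_GAP_MM = 150.0   # fingertip this close counts as hovering (e.g. a swipe)


def classify_contact(surface_depth_mm, fingertip_depth_mm):
    """Classify a fingertip as 'touch', 'hover' or 'none'.

    Both depths are distances from the depth camera. The fingertip is
    in front of the surface, so its depth is normally the smaller one.
    """
    gap = surface_depth_mm - fingertip_depth_mm
    if gap <= TOUCH_GAP_MM:
        return "touch"
    if gap <= HOVER_GAP_MM:
        return "hover"
    return "none"


def rotation_degrees(start_a, start_b, end_a, end_b):
    """Angle in degrees by which the line between two hands has turned.

    Each argument is an (x, y) point on the display surface. The result
    is wrapped into the range [-180, 180).
    """
    before = math.atan2(start_b[1] - start_a[1], start_b[0] - start_a[0])
    after = math.atan2(end_b[1] - end_a[1], end_b[0] - end_a[0])
    delta = math.degrees(after - before)
    return (delta + 180.0) % 360.0 - 180.0
```

With these assumed thresholds, a fingertip 10 mm from a wall that is 2 m from the camera would be classified as a touch, while one 100 mm away would count as hovering. A real system would also need to calibrate the camera against the projected image and smooth out sensor noise, which this sketch leaves out.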

Ubi Interactive is looking to sell the software separately (for around $500) or as part of a whole system if customers wish, and based on the company’s progress so far, it appears a product should be on the market soon.

More information: www.ubi-interactive.com/index.php/ubi-technology

© 2012 Phys.Org