December 1, 2011 feature

The future cometh: Science, technology and humanity at Singularity Summit 2011 (Part I)

(PhysOrg.com) -- In its essence, technology can be seen as our perpetually evolving attempt to extend our sensorimotor cortex into physical reality: From the earliest spears and boomerangs augmenting our arms, horses and carts our legs, and fire our environment, we’re now investigating and manipulating the fabric of that reality – including the very components of life itself. Moreover, this progression has not been linear, but instead follows an iterative curve of inflection points demarcating disruptive changes in dominant societal paradigms. Suggested by mathematician Vernor Vinge in his acclaimed science fiction novel True Names (1981) and introduced explicitly in his essay The Coming Technological Singularity (1993), the term “Singularity” for one such anticipated inflection point was popularized by inventor and futurist Ray Kurzweil in The Singularity is Near (2005). The two even had a Singularity Chat in 2002.

While the Singularity is not to be confused with the astronomical description of an infinitesimal object of infinite density, it can be seen as a technological event horizon: a point in the not-too-distant future at which present models of what lies ahead may break down, as the accelerating rate of scientific discovery and technological innovation approaches a real-time asymptote. Beyond lies a future (be it utopian or dystopian) in which a key question emerges: Evolving at dramatically slower biological time scales, must Homo sapiens become Homo syntheticus in order to retain our position as the self-acclaimed crown of creation – or will that title be usurped by sentient Artificial Intelligence? The Singularity and all of its implications were recently addressed at Singularity Summit 2011 in New York City.

Vinge opens his groundbreaking 1993 essay with a fundamental definition of the Singularity:

The acceleration of technological progress has been the central feature of this century. We are on the edge of change comparable to the rise of human life on Earth. The precise cause of this change is the imminent creation by technology of entities with greater-than-human intelligence. Science may achieve this breakthrough by several means (and this is another reason for having confidence that the event will occur):

• Computers that are "awake" and superhumanly intelligent may be developed. (To date, there has been much controversy as to whether we can create human equivalence in a machine. But if the answer is "yes," then there is little doubt that more intelligent beings can be constructed shortly thereafter.)

• Large computer networks (and their associated users) may "wake up" as superhumanly intelligent entities.

• Computer/human interfaces may become so intimate that users may reasonably be considered superhumanly intelligent.

• Biological science may provide means to improve natural human intellect.

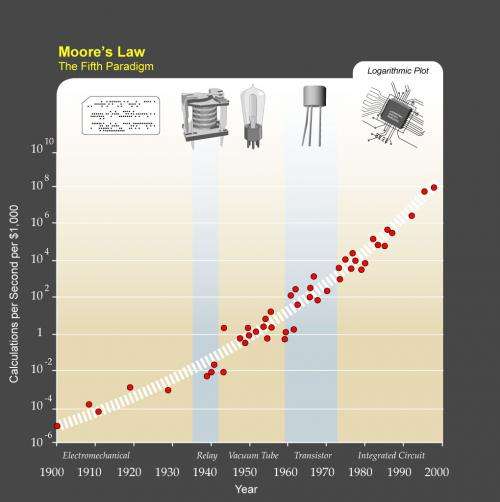

For Kurzweil, the core of the Singularity remains the augmentation and surpassing of human biology through the accelerating evolution of technology (notably genetics, nanotechnology, and Artificial Intelligence) enabled by exponential increases in computational power and speed coupled with plummeting costs and size. Not surprisingly, then, in his opening address, From Eliza to Watson to Passing the Turing Test, Kurzweil – ever the low-key evangelist – focused largely on what he described as the remarkable continuing evidentiary support for his original projections. He drew a clear distinction between basing our AI offspring on biologically inspired principles and, alternatively, crafting biomorphic substrates: In short, he favors the former, viewing the latter as unnecessary.

In his post-presentation press conference, Kurzweil was asked about the role of our evolutionarily determined biological drives – and thereby motivation and emotion – as minds merge with machines and AI equals or surpasses human levels. Kurzweil noted that we have a remarkable ability to sublimate our drives, and we do so largely into innovation. Since he sees the purpose of technology as the transcendence of our biological limitations, one interpretation of his response is that he might well define human-like AI as being motivated by the goal of achieving unfettered, ever-accelerating innovation – and that achieving that goal will generate a positive AE (artificial emotional) state. Whether or not that’s the case, in an Australian Broadcasting Corporation interview earlier this year, Kurzweil predicted that AI will achieve human-level emotional thought by roughly 2030:

That's really the big frontier right now is for computers, in general, to master human emotions.

Emotion is not some sideshow to human intelligence. It's actually the most complicated intelligent thing we do, being funny, getting the joke, expressing a loving sentiment. That's the cutting edge of human intelligence. If we were to say intelligence is only logical intelligence, computers are already smarter than us.

I believe it's going to be about over the next 20 years where we close that gap in terms of human superiority today in emotional intelligence.

Today computers can understand human emotions in certain situations. Watson, the IBM computer that won Jeopardy, did have to understand some things about human emotion to master the language in that game, but they're not yet at human levels. They're getting there.

True to the Singularity’s premise of accelerating technological innovation, this achievement may be arriving sooner than expected: Recent research by Zoraida Callejas, David Griol and Ramón López-Cózar at the University of Granada and the University of Madrid has demonstrated a method for predicting a person’s mental state in spoken dialogue from that individual’s emotional state and intention, by means of a module bridging the natural language understanding and dialogue management components of a dialogue system architecture.

MIT wunderkind Alexander Wissner-Gross says that it will be sufficient for a post-Singularity AI to understand human feelings rather than having to develop emotive thought. In his much-anticipated talk, Planetary-Scale Intelligence, he suggested that a global human-level AI could emerge from the mathematical world of quantitative finance and high-frequency trading – and that it may already have.

In The Undivided Mind – Science and Imagination, filmmaker, aesthetic philosopher and ecstatic futurist Jason Silva – the speaker who most energized the crowd – called for passion and artistic sensibility to inform ideation and instantiation of the Singularity. Silva illustrated this perspective by showing his short film The Beginning of Infinity, which visually expresses an epistemological epiphany. Silva is also the first guest to be interviewed on Critical Thought TV.

VIDEO: THE BEGINNING OF INFINITY

“What’s fantastic about having a Singularity Summit,” Silva notes, “is that it provides an anchor that gives legitimacy to these ideas – that they’re not just something on the fringe of academia. Our story then spills over into a variety of substrates – books, magazine articles, television, film, websites, and so on. However,” he points out, “I think they need to work on their aesthetic framing” – the essence of his talk – “which I hope provided an injection of art and design. As Rebecca Elson wrote in her book, A Responsibility to Awe, facts are only as interesting as the possibilities they open up to the imagination.” For Silva, one of those possibilities is our achieving the God-like qualities of immortality and omniscience long promised, but never delivered, by religion.

Silva also differs from Kurzweil and Wissner-Gross in his view of emotion in coming human-analogous AIs. For example, he cites Leonard Shlain’s Art & Physics as pointing out that art and science are two sides of the same coin. Silva agrees that this appears to be supported by fMRI studies of human brain activity, which seem to show that their common ground lies in the emotional content of experience, as well as by very recent findings that visual encoding in the human ventral temporal (VT) cortex is common across individuals viewing the same movie content.

“Truth,” concludes Silva, “is a symphony.”

More information: Part II: www.physorg.com/news/2011-12-f … logy-humanity_1.html

Copyright 2011 PhysOrg.com.
