November 22, 2011 report

Invoked computing: Pizza box is too loud! I can't hear the banana

(PhysOrg.com) -- Mention the buzzword “ubiquitous” to any technology futurist and they will know what it implies. Hardware as we know it will recede. More people will communicate with words and images embedded in walls, on boxes, as part of room furniture and kitchen appliances. Pretty much the entire physical environment, not a screen, will be the canvas for carrying and sharing information. Some futurists call it computing’s third wave, following the mainframe and then the PC and mobile gadgets.

But here is an interesting recent twist. Researchers at the Ishikawa-Oku lab at the University of Tokyo are eagerly sharing their Invoked Computing concept. It’s about “spatial audio and video” augmented reality that is invoked through miming. At least that is one way to describe the concept. Another way is this.

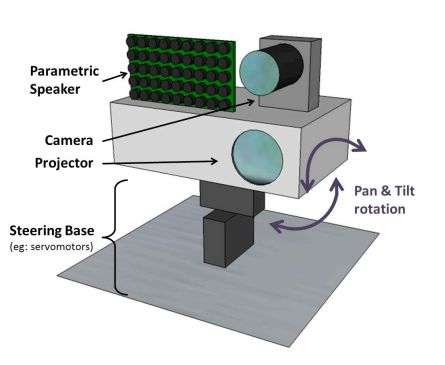

Researchers taking part in the “invoked computing” project are developing a multimodal augmented reality system that can transform everyday objects into communication devices on the spot. To “invoke” an application, the user just needs to mime a specific scenario.

DigInfo recently released a video of the demos. The presentation shows how the team puts its concept into action. In the banana scenario, a person takes a banana out of a fruit bowl and brings it close to his ear. A high-speed camera tracks the banana; a parametric speaker array directs the sound in a narrow beam. The person talks into the banana as if it were a conventional phone.

“With an iPhone, for example, you have everything in a small device and you have to learn how to use it,” said project spokesperson Alexis Zerroug, whose university work in Tokyo is focused on virtual reality and human-machine interfaces. “Here we want to do the opposite; the computer will have to learn what you want to do.”

Another concept example that the team presents is “a laptop in a pizza box.” Video and sound are projected onto the lid of the pizza box. The user interacts with what he sees on the pizza box, moving a playhead and changing the volume.

Zerroug, Alvaro Cassinelli, and Masatoshi Ishikawa recently won the grand prize at Laval Virtual 2011 in France for their concept demo. They also had the concept on display at the Digital Contents Expo in Tokyo. At the expo, their entry carried the following descriptive note: “Our multimodal AR system will detect objects and augment them with images and sounds. For example, you can take a banana and use it as a phone handset, or a pizza box as a laptop computer.”


© 2011 PhysOrg.com